Free JavaScript SEO Audit Tool for Developers: Automate SEO in 2026

April 6, 2026

If you’ve ever worried your website isn’t ranking as it should, a free SEO audit tool can feel like a secret weapon. But many developers treat SEO as off-limits. That’s not because it’s hard. It’s because SEO seems like someone else’s job: the marketing team’s, an agency’s, or a tool we can’t touch from the command line.

At CyberCraft Bangladesh, I’ve seen this challenge firsthand. That’s why I recommend developer-first SEO tools that integrate seamlessly with your workflow.

For years, I struggled with this myself, until I discovered @power-seo/audit while trying to catch SEO regressions in a Next.js project before they hit production. It wasn’t just a utility; it completely changed how I think about SEO audits.

In this article, I’ll show you exactly what the library does, how to use it, and why it belongs in your project. I’ll also explain how it fits into your SEO checklist so that nothing slips through the cracks.

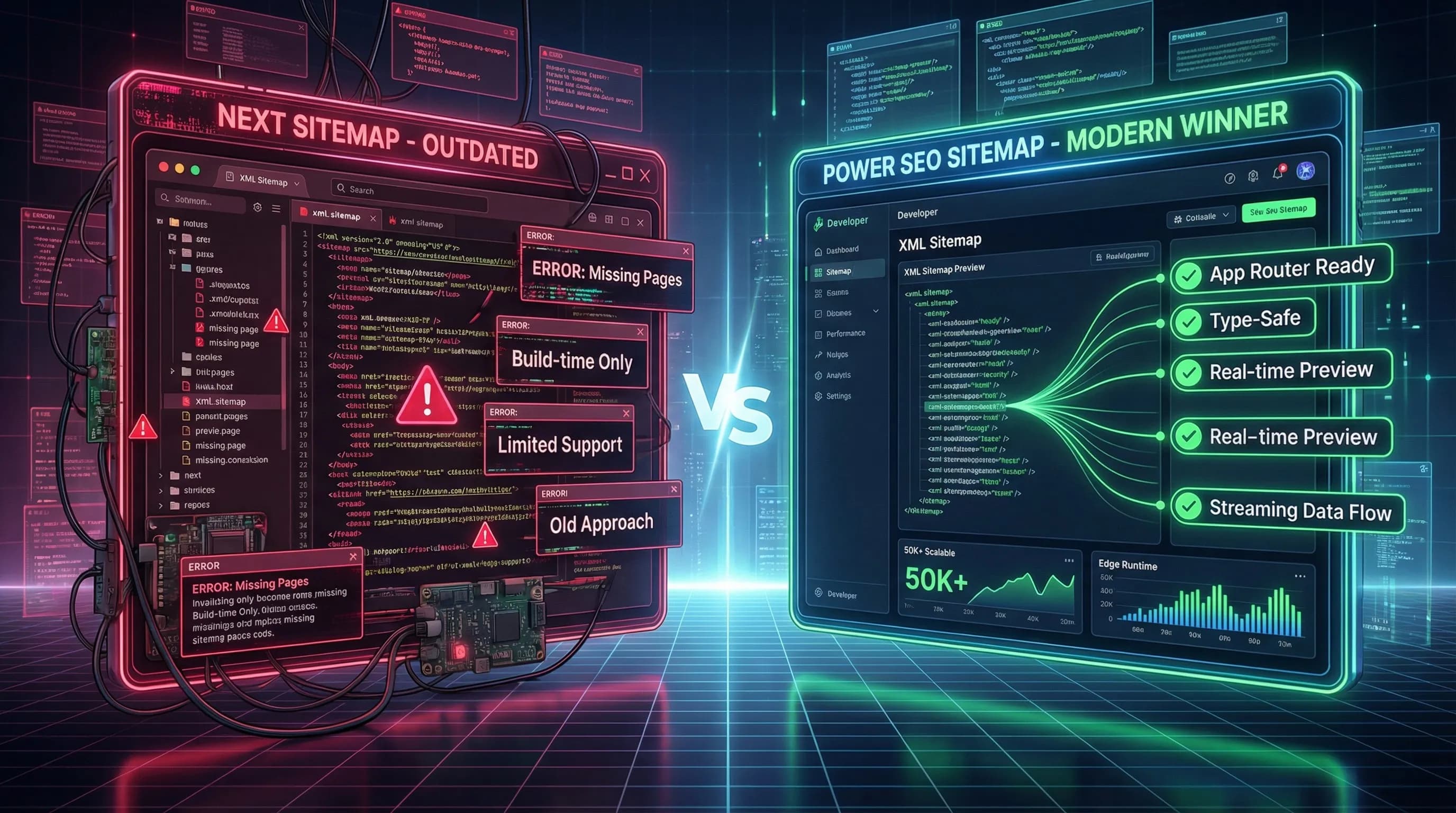

Why Developer SEO Workflows Are Still Broken

Picture this scenario. Your team ships a major content update. Three days later, someone forwards you a report from a paid SEO audit tool. It shows that half your pages are missing Open Graph images and have a broken canonical tag. The fix takes ten minutes, but nobody caught the problem before it went live.

That is the gap. There is no automated technical SEO check sitting between your code and your production environment. Nothing enforces a score threshold. Nothing surfaces issues at build time the way ESLint catches bad code or TypeScript catches type mismatches.

Tools like Screaming Frog require you to run a desktop application. Ahrefs Site Audit is powerful, but it lives entirely behind a dashboard and a paid subscription. Google Lighthouse includes basic SEO checks, but it audits one rendered page at a time in a browser. It was not built for programmatic, multi-page SEO scoring in a pipeline.

What developers actually need is something that speaks their language. A package. A function. Typed inputs and typed outputs. Something that runs anywhere Node.js runs and does not need to phone home to do its job. @power-seo/audit is built for exactly that use case.

What the Library Actually Does

At its core, @power-seo/audit takes structured page data as input and returns a scored audit report as output. No crawling. No browser. No waiting.

You pass in what you already know about a page, such as the title, meta description, headings, images, word count, and internal links. @power-seo/audit then runs four separate rule sets against that data. It returns a score between 0 and 100, a breakdown by category, and a flat list of every issue it found.

Each issue carries one of four severity tags: error, warning, info, or pass. You can therefore filter and prioritize issues programmatically instead of reading through a wall of text in a dashboard.

The four rule categories are:

- Meta rules - title, description, canonical, robots, Open Graph

- Content rules - word count, keyphrase density, readability

- Structure rules - heading hierarchy, image alt text, links, JSON-LD

- Performance rules - image formats, resource hints, third-party scripts

Because everything runs locally with zero network calls, you can use the library in many places: Next.js server components, Remix loaders, Express endpoints, Cloudflare Workers, Vercel Edge Functions, or a plain Node.js script. The runtime does not matter. If JavaScript runs there, so does this library.

Getting Started in Under a Minute

Installation is a single command:

```bash
npm install @power-seo/audit
# or
yarn add @power-seo/audit
# or
pnpm add @power-seo/audit
```

No configuration file. No .env entry. No account to create. You install it, and it works.

Running Your First Page Audit

Below is a quick-start example straight from the official documentation. Each field in the input object maps to something the rule engine checks:

```typescript
import { auditPage } from '@power-seo/audit';

const result = auditPage({
  url: 'https://example.com/blog/react-seo-guide',
  title: 'React SEO Guide - Best Practices for 2026',
  metaDescription:
    'Learn how to optimize React applications for search engines with meta tags, structured data, and Core Web Vitals improvements.',
  canonical: 'https://example.com/blog/react-seo-guide',
  robots: 'index, follow',
  content: '<h1>React SEO Guide</h1><p>Search engine optimization for React apps...</p>',
  headings: ['h1:React SEO Guide', 'h2:Why SEO Matters for React', 'h2:Meta Tags in React'],
  images: [{ src: '/hero.webp', alt: 'React SEO guide illustration' }],
  internalLinks: ['/blog', '/docs/meta-tags'],
  externalLinks: ['https://developers.google.com/search'],
  focusKeyphrase: 'react seo',
  wordCount: 1850,
});

console.log(result.score); // e.g. 84

console.log(result.categories);
// { meta: { score: 90, passed: 9, warnings: 1, errors: 0 }, content: { score: 82, ... }, ... }

console.log(result.rules);
// [
//   { id: 'meta-description-length', category: 'meta', severity: 'warning', title: '...', description: '...' },
//   { id: 'content-word-count', category: 'content', severity: 'pass', ... },
// ]
```

The key insight here is how the input is structured. You are not handing the library a URL and waiting for a spider to crawl the page. You are feeding it data you already have. That means you can call auditPage from inside a CMS publish hook, a Next.js API route, a build script, or a server action. Wherever that data lives, the audit can run.
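If your CMS stores rendered HTML rather than separate fields, you still need to produce inputs such as `headings` in the `'h1:Text'` format shown above. The helpers below are hypothetical and not part of @power-seo/audit. They use simple regular expressions. The sketch therefore assumes flat, well-formed markup with double-quoted attributes, and it is no substitute for a real HTML parser.

```typescript
// Hypothetical helpers (not part of @power-seo/audit) that derive a few
// audit input fields from an HTML string. Regex-based: assumes simple,
// well-formed markup with double-quoted attributes.

interface ExtractedImage {
  src: string;
  alt: string;
}

// Replace tags with spaces and collapse whitespace to get plain text.
function stripTags(html: string): string {
  return html.replace(/<[^>]*>/g, ' ').replace(/\s+/g, ' ').trim();
}

// Build headings in the 'h1:Text' format used by the examples above.
function extractHeadings(html: string): string[] {
  const headings: string[] = [];
  const re = /<(h[1-6])[^>]*>([\s\S]*?)<\/\1>/gi;
  let match: RegExpExecArray | null;
  while ((match = re.exec(html)) !== null) {
    headings.push(`${match[1].toLowerCase()}:${stripTags(match[2])}`);
  }
  return headings;
}

// Collect <img> tags; a missing alt attribute becomes an empty string.
function extractImages(html: string): ExtractedImage[] {
  const images: ExtractedImage[] = [];
  const re = /<img\b[^>]*>/gi;
  let match: RegExpExecArray | null;
  while ((match = re.exec(html)) !== null) {
    const tag = match[0];
    const src = /\bsrc\s*=\s*"([^"]*)"/i.exec(tag)?.[1] ?? '';
    const alt = /\balt\s*=\s*"([^"]*)"/i.exec(tag)?.[1] ?? '';
    images.push({ src, alt });
  }
  return images;
}

// Count whitespace-separated words in the visible text.
function countWords(html: string): number {
  const text = stripTags(html);
  return text === '' ? 0 : text.split(' ').length;
}
```

You would then pass the results as the `headings`, `images`, and `wordCount` fields of the audit input.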

The response gives you three things right away:

- result.score - the overall 0-100 score

- result.categories - per-category breakdowns with score, passed, warnings, errors

- result.rules - the full flat array of every rule result, in the order the engine ran them
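To see how the `categories` breakdown relates to the flat `rules` array, here is a small sketch. It recomputes the `passed`, `warnings`, and `errors` counts per category from a list of rule results. It is an illustration built on assumptions. The library's own category `score` formula is not documented here, so the sketch leaves scoring out. The local `RuleResult` type mirrors only the fields shown in the example output.

```typescript
// Local types mirroring the fields shown in the example output above.
type Severity = 'error' | 'warning' | 'info' | 'pass';
type Category = 'meta' | 'content' | 'structure' | 'performance';

interface RuleResult {
  id: string;
  category: Category;
  severity: Severity;
}

interface CategoryCounts {
  passed: number;
  warnings: number;
  errors: number;
}

// Count passes, warnings, and errors per category.
// 'info' results are not counted in any bucket (an assumption of this sketch).
function countByCategory(rules: RuleResult[]): Record<Category, CategoryCounts> {
  const empty = (): CategoryCounts => ({ passed: 0, warnings: 0, errors: 0 });
  const counts: Record<Category, CategoryCounts> = {
    meta: empty(),
    content: empty(),
    structure: empty(),
    performance: empty(),
  };
  for (const rule of rules) {
    const bucket = counts[rule.category];
    if (rule.severity === 'pass') bucket.passed += 1;
    else if (rule.severity === 'warning') bucket.warnings += 1;
    else if (rule.severity === 'error') bucket.errors += 1;
  }
  return counts;
}
```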

Auditing a Real Product Page

Here is a more grounded example using a typed input object. This pattern is what you would actually use inside a TypeScript project:

```typescript
import { auditPage } from '@power-seo/audit';
import type { PageAuditInput, PageAuditResult } from '@power-seo/audit';

const input: PageAuditInput = {
  url: 'https://example.com/products/widget',
  title: 'Premium Widget - Buy Online | Example',
  metaDescription:
    'Buy our premium widget online. Free shipping on orders over $50. Trusted by 10,000+ customers.',
  canonical: 'https://example.com/products/widget',
  robots: 'index, follow',
  openGraph: {
    title: 'Premium Widget',
    description: 'Buy our premium widget with free shipping.',
    image: 'https://example.com/images/widget-og.jpg',
  },
  content: '<h1>Premium Widget</h1><p>Our best-selling product...</p>',
  headings: ['h1:Premium Widget', 'h2:Features', 'h2:Customer Reviews'],
  images: [
    { src: '/images/widget.jpg', alt: 'Premium widget product photo' },
    { src: '/images/widget-detail.jpg', alt: '' },
  ],
  internalLinks: ['/products', '/cart', '/about'],
  externalLinks: [],
  focusKeyphrase: 'premium widget',
  wordCount: 620,
};

const result: PageAuditResult = auditPage(input);

// Filter rules by severity
const errors = result.rules.filter((r) => r.severity === 'error');
const warnings = result.rules.filter((r) => r.severity === 'warning');

console.log(`Score: ${result.score}/100`);
console.log(`Errors: ${errors.length}, Warnings: ${warnings.length}`);
```

Notice the second image entry: its alt value is an empty string. The structure rules will flag that, and the issue comes back in the rules array with a severity attached. You see it before the page ever ships. Before, this kind of catch only happened after the fact, inside a PDF from an external reporting platform. Catching missing alt text this early helps both accessibility and search visibility.
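The next sketch makes the alt-text catch concrete. It is a hypothetical, simplified version of such a check that returns one result per image and flags empty or whitespace-only alt text. The rule id `image-alt` and the choice of `error` severity are assumptions for illustration, and the library's actual rule ids and severities may differ. An intentionally empty alt is valid for purely decorative images, so a real check might downgrade those cases.

```typescript
// Hypothetical, simplified alt-text rule (not the library's implementation).
type Severity = 'error' | 'warning' | 'info' | 'pass';

interface ImageInput {
  src: string;
  alt: string;
}

interface RuleResult {
  id: string;
  category: 'structure';
  severity: Severity;
  title: string;
  description: string;
}

// One result per image: 'pass' when alt text is present, 'error' otherwise.
function checkImageAlt(images: ImageInput[]): RuleResult[] {
  return images.map((img): RuleResult => {
    const hasAlt = img.alt.trim().length > 0;
    return {
      id: 'image-alt', // assumed rule id
      category: 'structure',
      severity: hasAlt ? 'pass' : 'error',
      title: hasAlt ? 'Image has alt text' : 'Image is missing alt text',
      description: hasAlt
        ? `${img.src} has alt text.`
        : `${img.src} has an empty alt attribute.`,
    };
  });
}
```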

Scaling Up: Auditing an Entire Site

Single-page audits solve a lot, but for teams managing content at scale, auditSite is where the real leverage comes from. You pass in an array of page inputs and get back an aggregated report. It contains an average score, a list of the most common issues across all pages, and individual results for each URL:

```typescript
import { auditSite } from '@power-seo/audit';
import type { SiteAuditInput, SiteAuditResult } from '@power-seo/audit';

// page1Input, page2Input, and page3Input are PageAuditInput objects,
// built the same way as the product-page example above.
const siteInput: SiteAuditInput = {
  pages: [page1Input, page2Input, page3Input],
};

const report: SiteAuditResult = auditSite(siteInput);

console.log(`Average score: ${report.score}/100`);
console.log(`Pages audited: ${report.totalPages}`);
console.log('Top issues across site:');
report.topIssues.forEach(({ id, title, severity }) => {
  console.log(` ${id} (${title}) [${severity}]`);
});

// Access individual page results
report.pageResults.forEach(({ url, score, rules }) => {
  const issues = rules.filter((r) => r.severity === 'error' || r.severity === 'warning');
  console.log(`${url}: ${score}/100 (${issues.length} issues)`);
});
```

The topIssues field is genuinely useful in practice. When one rule keeps failing across dozens of pages, it surfaces at the top of that list. Fix it once, and the site-wide score rises. This kind of insight used to require a full agency audit or a pricey SaaS subscription. Now it is just a function call.
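The library's exact ranking for `topIssues` is not described here, but the idea can be sketched. Count how many pages fail each rule id, then sort by that count. The function below is a hypothetical reimplementation built on that assumption. It treats `error` and `warning` results as failures and counts each rule at most once per page.

```typescript
type Severity = 'error' | 'warning' | 'info' | 'pass';

interface RuleResult {
  id: string;
  title: string;
  severity: Severity;
}

interface PageResult {
  url: string;
  rules: RuleResult[];
}

interface IssueCount {
  id: string;
  title: string;
  pages: number; // number of pages on which this rule failed
}

// Hypothetical aggregation: rank failing rule ids by how many pages they affect.
function rankIssues(pageResults: PageResult[], limit = 5): IssueCount[] {
  const counts = new Map<string, IssueCount>();
  for (const page of pageResults) {
    const seenOnPage = new Set<string>(); // count each rule once per page
    for (const rule of page.rules) {
      if (rule.severity !== 'error' && rule.severity !== 'warning') continue;
      if (seenOnPage.has(rule.id)) continue;
      seenOnPage.add(rule.id);
      const entry = counts.get(rule.id) ?? { id: rule.id, title: rule.title, pages: 0 };
      entry.pages += 1;
      counts.set(rule.id, entry);
    }
  }
  // Most widespread issues first.
  return Array.from(counts.values())
    .sort((a, b) => b.pages - a.pages)
    .slice(0, limit);
}
```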

Picking Only the Rules You Need

Not every situation calls for a full audit. Maybe you only want to validate meta tags before a CMS publish. Maybe you are building a writing assistant that only cares about content quality. The library exposes each rule set as an independent function:

```typescript
import {
  runMetaRules,
  runContentRules,
  runStructureRules,
  runPerformanceRules,
} from '@power-seo/audit';
import type { PageAuditInput } from '@power-seo/audit';

const input: PageAuditInput = {
  /* ... */
};

// Run only meta checks - title, description, canonical, robots, OG
const metaRules = runMetaRules(input);
const errors = metaRules.filter((r) => r.severity === 'error');
const passes = metaRules.filter((r) => r.severity === 'pass');
console.log(`Meta: ${passes.length} passed, ${errors.length} errors`);
metaRules.forEach((r) => console.log(` [${r.severity}] ${r.title}: ${r.description}`));

// Run only content checks - word count, keyphrase, readability
const contentRules = runContentRules(input);

// Run only structure checks - headings, images, links, schema
const structureRules = runStructureRules(input);

// Run only performance checks - image formats, resource hints
const perfRules = runPerformanceRules(input);
```

The package ships with "sideEffects": false and exports each rule runner separately, so bundlers can tree-shake whatever you do not import. You pay only for what you use. Tree-shaking keeps bundles small, and only the rule sets you actually call do any work at runtime.
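Each runner takes the same input and returns a list of rule results, so the runners compose naturally. The sketch below shows a hypothetical pre-publish gate that accepts any set of runners and blocks publishing when any of them reports an error. In real code you might pass `runMetaRules` alone. Runners are injected as plain functions so the sketch stays self-contained. The stub `titleRunner` and its `title-present` id are invented for illustration.

```typescript
type Severity = 'error' | 'warning' | 'info' | 'pass';

interface RuleResult {
  id: string;
  severity: Severity;
  title: string;
}

// Any function with this shape can be plugged in (e.g. a rule runner
// that returns an array of rule results, as in the example above).
type RuleRunner<Input> = (input: Input) => RuleResult[];

interface PublishDecision {
  allowed: boolean;
  blocking: RuleResult[]; // error-level results that stop the publish
}

// Run only the selected rule sets and block on any error-level result.
function prePublishCheck<Input>(input: Input, runners: RuleRunner<Input>[]): PublishDecision {
  const results = runners.flatMap((run) => run(input));
  const blocking = results.filter((r) => r.severity === 'error');
  return { allowed: blocking.length === 0, blocking };
}

// Hypothetical stub runner standing in for a real rule set.
const titleRunner: RuleRunner<{ title: string }> = ({ title }) => [
  {
    id: 'title-present',
    title: 'Title is present',
    severity: title.trim() ? 'pass' : 'error',
  },
];

const decision = prePublishCheck({ title: '' }, [titleRunner]);
console.log(decision.allowed); // false
```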

Sorting and Grouping Issues for a Dashboard

If you are building an admin interface or an internal SEO reporting tool, you will want to present issues in a useful order. The grouping and sorting pattern from the official docs is a good starting point:

```typescript
import { auditPage } from '@power-seo/audit';
import type { AuditSeverity } from '@power-seo/audit';

// `input` is a PageAuditInput object, as in the product-page example above.
const result = auditPage(input);

// Group rules by category
const byCategory = result.rules.reduce(
  (acc, rule) => {
    acc[rule.category] = acc[rule.category] ?? [];
    acc[rule.category]!.push(rule);
    return acc;
  },
  {} as Record<string, typeof result.rules>,
);

// Priority order: errors first, then warnings, then info, then pass
const order: Record<AuditSeverity, number> = { error: 0, warning: 1, info: 2, pass: 3 };
const prioritized = [...result.rules].sort((a, b) => order[a.severity] - order[b.severity]);

// Check if page passes a minimum score threshold
const MINIMUM_SCORE = 70;

if (result.score < MINIMUM_SCORE) {
  throw new Error(`SEO audit failed: score ${result.score} is below minimum ${MINIMUM_SCORE}`);
}
```

That final block is where things get interesting for engineering teams. Throwing an error when the score drops below a threshold is the bridge between this library and your deployment process.

Blocking Bad Deploys with a CI Quality Gate

This is the use case that most excites me as someone who works with engineering teams regularly. You can make SEO regressions a build failure. The same way a failing test blocks a merge, a low SEO score blocks a deploy:

```typescript
// scripts/seo-audit.ts
import { auditSite } from '@power-seo/audit';
// test-pages exports an array of PageAuditInput objects for the routes you want to gate
import { pages } from './test-pages.js';

const report = auditSite({ pages });

const SCORE_THRESHOLD = 75;
const ALLOWED_ERRORS = 0;

// Count error-level results across every audited page
const totalErrors = report.pageResults.flatMap((p) =>
  p.rules.filter((r) => r.severity === 'error'),
).length;

if (report.score < SCORE_THRESHOLD || totalErrors > ALLOWED_ERRORS) {
  console.error('SEO audit FAILED');
  console.error(` Average score: ${report.score} (min: ${SCORE_THRESHOLD})`);
  console.error(` Critical errors: ${totalErrors} (max: ${ALLOWED_ERRORS})`);
  process.exit(1); // a non-zero exit code fails the CI step
}

console.log(`SEO audit PASSED - average score: ${report.score}/100`);
```

Drop this script into your GitHub Actions workflow or Vercel build step and set your thresholds. From then on, a pull request fails before it reaches production if it drops the pages you list below the score threshold or introduces an error. That is what it means to automate technical SEO audits at the engineering level.
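One refinement is worth considering. Keep the pass/fail decision in a pure function and leave `process.exit` to the script's entry point, so that you can unit-test the gate logic itself. The function below is a hypothetical sketch. It uses a minimal local report type containing only the fields the script above reads (`score`, `pageResults`, `rules`, `severity`).

```typescript
type Severity = 'error' | 'warning' | 'info' | 'pass';

// Minimal local shape of the site report: only the fields the gate reads.
interface SiteReport {
  score: number;
  pageResults: { url: string; rules: { severity: Severity }[] }[];
}

interface GateOptions {
  scoreThreshold: number;
  allowedErrors: number;
}

interface GateResult {
  passed: boolean;
  messages: string[]; // one message per violated condition
}

// Pure decision function: no I/O, so it can be tested directly.
function evaluateSeoGate(report: SiteReport, opts: GateOptions): GateResult {
  const totalErrors = report.pageResults
    .flatMap((p) => p.rules)
    .filter((r) => r.severity === 'error').length;

  const messages: string[] = [];
  if (report.score < opts.scoreThreshold) {
    messages.push(`Average score ${report.score} is below ${opts.scoreThreshold}`);
  }
  if (totalErrors > opts.allowedErrors) {
    messages.push(`${totalErrors} errors exceed the allowed ${opts.allowedErrors}`);
  }
  return { passed: messages.length === 0, messages };
}
```

In `scripts/seo-audit.ts`, you would call `evaluateSeoGate(report, { scoreThreshold: 75, allowedErrors: 0 })`, print the messages, and call `process.exit(1)` only when `passed` is false.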

Full TypeScript API at a Glance

Every part of this library is typed. Here is the complete import surface:

```typescript
import type {
  AuditCategory,   // 'meta' | 'content' | 'structure' | 'performance'
  AuditSeverity,   // 'error' | 'warning' | 'info' | 'pass'
  AuditRule,       // { id, category, title, description, severity }
  PageAuditInput,  // Full page input object (see auditPage parameters above)
  PageAuditResult, // { url, score, categories, rules, recommendations }
  CategoryResult,  // { score: number; passed: number; warnings: number; errors: number }
  SiteAuditInput,  // { pages: PageAuditInput[] }
  SiteAuditResult, // { score, totalPages, pageResults, topIssues, summary }
} from '@power-seo/audit';
```

Working in VS Code with these types means autocomplete on every field. You no longer have to guess what shape the response will take or which values a severity field accepts. The editor catches mistakes before the code even runs.

A Closer Look at Each Rule Category

I want to be specific about what the engine actually checks, because the phrase "SEO audit" gets used loosely across the industry.

Meta rules validate title presence and check that it falls between 50 and 60 characters. They verify meta description presence and length, which should sit between 120 and 158 characters. They confirm canonical tag formatting, inspect robots meta directives, and check Open Graph completeness across og:title, og:description, og:image, and og:url.
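These length ranges translate directly into code. The sketch below is hypothetical and shows how a title and description length check could map lengths onto severities, using the 50 to 60 and 120 to 158 character ranges quoted above. Several details are my own assumptions, not the library's documented behavior: the rule ids, treating a missing value as an error, and treating an out-of-range value as a warning.

```typescript
type Severity = 'error' | 'warning' | 'pass';

interface LengthCheck {
  id: string;
  severity: Severity;
  message: string;
}

// Map a text's length onto a severity (assumed policy):
// missing -> error, outside [min, max] -> warning, otherwise pass.
function checkLength(
  id: string,
  label: string,
  text: string | undefined,
  min: number,
  max: number,
): LengthCheck {
  const value = (text ?? '').trim();
  if (value.length === 0) {
    return { id, severity: 'error', message: `${label} is missing` };
  }
  if (value.length < min || value.length > max) {
    return {
      id,
      severity: 'warning',
      message: `${label} is ${value.length} characters; aim for ${min} to ${max}`,
    };
  }
  return { id, severity: 'pass', message: `${label} length is ${value.length} characters` };
}

// Ranges taken from the rule description in the text above.
function checkMetaLengths(title?: string, description?: string): LengthCheck[] {
  return [
    checkLength('title-length', 'Title', title, 50, 60),
    checkLength('meta-description-length', 'Meta description', description, 120, 158),
  ];
}
```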

Content rules look at word count to catch thin pages, check that your focus keyphrase appears in the title, description, first paragraph, and headings, analyze keyphrase density, and calculate a readability score.
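Keyphrase density is commonly expressed as keyphrase occurrences per 100 words. The article does not state the exact formula @power-seo/audit uses, so treat the following as a sketch of one common convention. It counts non-overlapping, case-insensitive whole-word matches of the phrase and divides by the total word count. Tokenization is ASCII-only, which is a simplifying assumption.

```typescript
// Lowercase and split text into ASCII words (a simplifying assumption).
function tokenize(text: string): string[] {
  return text.toLowerCase().match(/[a-z0-9]+/g) ?? [];
}

// Count non-overlapping occurrences of the keyphrase as a word sequence.
function countPhrase(words: string[], phrase: string[]): number {
  if (phrase.length === 0) return 0;
  let count = 0;
  for (let i = 0; i + phrase.length <= words.length; ) {
    const matches = phrase.every((w, j) => words[i + j] === w);
    if (matches) {
      count += 1;
      i += phrase.length; // skip past the match
    } else {
      i += 1;
    }
  }
  return count;
}

// Density as a percentage: occurrences per 100 words (one common convention).
function keyphraseDensity(text: string, keyphrase: string): number {
  const words = tokenize(text);
  if (words.length === 0) return 0;
  return (countPhrase(words, tokenize(keyphrase)) / words.length) * 100;
}
```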

Structure rules confirm there is exactly one H1 on the page, verify that heading levels do not skip, check every image for a non-empty alt attribute, count internal and external links, and validate any JSON-LD schema objects present on the page.
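Here is a hypothetical sketch of the two heading checks described above, working on the `'h1:Text'` strings used in the examples. It reports a problem when the page does not have exactly one H1. It also reports a problem when a heading is more than one level deeper than the previous one, for example H2 straight to H4. This is an illustration, not the library's implementation, and the message wording is invented.

```typescript
interface Heading {
  level: number;
  text: string;
}

// Parse 'h2:Features' into { level: 2, text: 'Features' }.
function parseHeading(entry: string): Heading | null {
  const match = /^h([1-6]):(.*)$/i.exec(entry);
  return match ? { level: Number(match[1]), text: match[2] } : null;
}

// Return human-readable problems; an empty array means the outline is fine.
function checkHeadingHierarchy(headings: string[]): string[] {
  const parsed = headings
    .map((entry) => parseHeading(entry))
    .filter((h): h is Heading => h !== null);
  const problems: string[] = [];

  const h1Count = parsed.filter((h) => h.level === 1).length;
  if (h1Count !== 1) {
    problems.push(`Expected exactly one H1, found ${h1Count}`);
  }

  // A heading may go at most one level deeper than the one before it.
  for (let i = 1; i < parsed.length; i++) {
    const prev = parsed[i - 1];
    const curr = parsed[i];
    if (curr.level > prev.level + 1) {
      problems.push(`"${curr.text}" skips from H${prev.level} to H${curr.level}`);
    }
  }
  return problems;
}
```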

Performance rules flag JPEG images where WebP or AVIF would perform better, catch images with missing width and height attributes, check for the presence of resource hints like preconnect and preload, and identify patterns common in poorly optimized third-party scripts.
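The next hypothetical sketch covers two of the image checks above. It flags JPEG sources and images that lack explicit dimensions. It detects JPEGs by file extension, which is an assumption, since a real check might inspect content types. The optional `width` and `height` fields are also assumptions, because the examples in this article only pass `src` and `alt`.

```typescript
interface ImageInfo {
  src: string;
  width?: number; // assumed optional fields, not shown in the article's examples
  height?: number;
}

// Flag JPEGs (WebP or AVIF is usually smaller) and images without dimensions.
function checkImagePerformance(images: ImageInfo[]): string[] {
  const findings: string[] = [];
  for (const img of images) {
    // Ignore query strings and fragments when reading the extension.
    const path = img.src.split(/[?#]/)[0].toLowerCase();
    if (path.endsWith('.jpg') || path.endsWith('.jpeg')) {
      findings.push(`${img.src}: consider serving WebP or AVIF instead of JPEG`);
    }
    if (img.width === undefined || img.height === undefined) {
      findings.push(`${img.src}: missing width/height (can cause layout shift)`);
    }
  }
  return findings;
}
```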

Each of these checks maps to something that search engines or users actually notice, from how a result appears in search to how quickly the page loads. This is not a shallow checklist built for appearances.

Where This Fits in Real Projects

Here are the concrete integration points based on what the documentation describes.

Headless CMS integrations are a natural first target. Run the audit server-side before a page gets published. If the score is below your threshold, surface the issues to the editor right inside the CMS interface. No separate tool needed.

Next.js and Remix admin dashboards can expose per-route SEO scores to content teams. Run the audit in a server component or a loader and display category scores right next to the content editor.

CI/CD pipelines are covered by the script pattern above. One file. Two constants. One process.exit. Wire it into your existing workflow in under an hour.

SaaS platforms serving multiple clients can generate per-client SEO health scores across all managed pages. The structured output from auditSite is designed to feed directly into dashboards and reporting views.

Agency reporting tools can use the recommendations array on PageAuditResult to populate client-facing reports automatically. Every error and warning already includes a human-readable description out of the box.

A Quick Word on Package Security

Before adding anything to a production build pipeline, checking the supply chain is non-negotiable.

There are no install scripts. No postinstall or preinstall hooks run arbitrary code during npm install. The package never reaches out to an external server at runtime. There is no eval or dynamic code execution. Releases are published through a CI-signed workflow on GitHub, which means build provenance is verifiable.

For a library sitting inside your CI gate, that level of transparency is not a nice-to-have. It is a requirement.

My Take After Using It in Production

SEO tooling has historically been built for marketers and analysts. It shows in the design. Everything lives behind a login screen. Everything produces a PDF. Nothing composes with a build system or a server function.

@power-seo/audit approaches the problem differently. The output is structured data. The API is typed. Execution is synchronous and local. It slots into a developer's mental model instead of fighting against it.

Most people hear "SEO audit tool" and picture a website where you type a URL into a box. Developers should think about it differently. A tool should be a function you can call, test, threshold, and schedule. This library makes that possible without a monthly bill or a browser tab. The results are also plain objects, so you can render them in your own React components and inspect them in React Developer Tools like any other prop.

If you have a Next.js or Remix project running today, install this, point it at your three most important pages, and read through the rules array. There is a strong chance you will find at least one issue you had no idea existed.

Frequently Asked Questions About the SEO Audit Tool

1. What is @power-seo/audit?

A free Node.js library that audits SEO using structured page data and returns scores and issues.

2. Do I need an account?

No login, dashboard, or subscription required; it runs fully locally.

3. What types of SEO checks does it perform?

Meta tags, content, structure (headings, links, alt), and performance (images, scripts).

4. Can it be integrated with CI/CD pipelines?

Yes, you can block deploys if SEO scores drop below thresholds or critical errors exist.

5. Is it safe for production?

Yes. It runs fully locally, makes no network calls, and has no install scripts, and its releases are CI-signed and verifiable.
