SEO For Single Page Applications: Rank React & Next.js Apps Fast

April 8, 2026

I've been building React apps for years. And the one question I keep getting from developers is this: does SEO for single page applications actually work?

Short answer: yes. But you need the right approach.

Let me walk you through what I've learned, what the real problems are, and how I'm solving them today with tools that actually fit the way we build apps in 2026.

What Is SEO for Single Page Applications

SEO for single page applications (SPAs) is the practice of making JavaScript-rendered web apps discoverable and rankable by search engines like Google. Unlike traditional server-rendered websites, SPAs load a minimal HTML shell and build the page content dynamically in the browser using JavaScript. Search engines work best with fully rendered HTML, so single page application search engine optimization is about resolving that conflict between dynamic content and what crawlers expect.

Getting single page application SEO right means solving that conflict at the infrastructure level, not the content level. Using a JavaScript SEO audit tool can help identify rendering issues, crawlability problems, and other obstacles that may prevent SPAs from ranking effectively.

The Real Problem with SPAs and SEO

When you build a traditional website, the server sends a complete HTML page to the browser. Google's crawler reads it. Done.

Single page applications work differently. The server sends a mostly empty HTML shell. JavaScript runs. Then your content appears. By the time Googlebot visits, it might only see a blank <div id="root"></div>.

That's the core tension between single page application search engine optimization and the way React apps are built by default.

Here's what actually breaks:

- Missing or duplicate meta tags: your <title> and <meta name="description"> are often the same on every page

- No structured data: Google can't understand your content types

- JavaScript-rendered content: Googlebot may skip it or render it late

- No sitemap: crawlers don't know what pages exist

- Bad canonical URLs: duplicate content signals confuse rankings

These aren't just theoretical problems. They directly hurt your rankings.
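To see these failures from the crawler's side, look at the HTML your server returns before any JavaScript runs. The sketch below is a hypothetical helper (inspectRawHtml is my own name, not part of any library) that reports which of the items above are present in a raw HTML string. It uses regexes and assumes attributes appear in the usual order, which is fine for a quick smoke test; a real audit should use an HTML parser.

```typescript
// Hypothetical helper: a rough, regex-based look at what a crawler receives
// before any JavaScript runs. Use a real HTML parser for production audits.
interface RawHtmlReport {
  title: string | null;
  description: string | null;
  canonical: string | null;
  jsonLdBlocks: number;
  emptyRoot: boolean;
}

function firstMatch(html: string, pattern: RegExp): string | null {
  return html.match(pattern)?.[1]?.trim() ?? null;
}

function inspectRawHtml(html: string): RawHtmlReport {
  return {
    title: firstMatch(html, /<title[^>]*>([\s\S]*?)<\/title>/i),
    // Assumes name="description" comes before content="..."
    description: firstMatch(html, /<meta\s+name=["']description["']\s+content=["']([^"']*)["']/i),
    // Assumes rel="canonical" comes before href="..."
    canonical: firstMatch(html, /<link\s+rel=["']canonical["']\s+href=["']([^"']*)["']/i),
    jsonLdBlocks: (html.match(/<script[^>]+type=["']application\/ld\+json["']/gi) ?? []).length,
    // True when the mount point ships with no server-rendered children
    emptyRoot: /<div id=["']root["']>\s*<\/div>/i.test(html),
  };
}

// A typical client-rendered shell: one generic title and an empty root.
const shell = `<!doctype html><html><head><title>My App</title></head>
<body><div id="root"></div><script src="/bundle.js"></script></body></html>`;

console.log(inspectRawHtml(shell));
```

Run it against the raw response body of each route: a generic title plus an empty root is exactly the blank shell described above.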

Why SPA SEO Gets Harder at Scale

If you have 10 pages, you can manage this manually. If you have 10,000 pages (a programmatic SEO site, a job board, or an e-commerce catalog), writing meta tags by hand is impossible. That's where a proper toolset matters for SPA SEO.

I've been using the @power-seo ecosystem for the past several months. It's a set of 17 independent TypeScript packages built specifically for this problem space. Each package handles one concern: meta tags, sitemaps, structured data, content analysis, auditing, and more. Let me show you how I actually use these in a real Next.js app.

Fix Meta Tags First

This is the most common failure point in SEO for web apps. Every page needs a unique <title> and <meta name="description">. Most SPAs ship with the same title on every route.

If you're on Next.js App Router, the @power-seo/meta package handles this cleanly.

```typescript
import { createMetadata } from '@power-seo/meta';

// Static metadata for a single App Router page
export const metadata = createMetadata({
  title: 'My Page',
  description: 'A page about something great.',
  canonical: 'https://example.com/my-page',
  robots: { index: true, follow: true, maxSnippet: 150 },
  openGraph: { type: 'website', images: [{ url: 'https://example.com/og.jpg' }] },
});
```

One function call. You get a native Next.js Metadata object back, no manual mapping required.

For dynamic routes, use generateMetadata():

```typescript
import { createMetadata } from '@power-seo/meta';
import { getPost } from '@/lib/posts';

// In Next.js 15+, params is a Promise and must be awaited
export async function generateMetadata({ params }: { params: Promise<{ slug: string }> }) {
  const { slug } = await params;
  const post = await getPost(slug);

  return createMetadata({
    title: post.title,
    description: post.excerpt,
    canonical: `https://example.com/blog/${slug}`,
    openGraph: {
      type: 'article',
      images: [{ url: post.coverImage, width: 1200, height: 630 }],
      article: {
        publishedTime: post.publishedAt,
        modifiedTime: post.updatedAt,
        authors: [post.author.url],
        tags: post.tags,
      },
    },
    // Drafts are never indexed
    robots: { index: !post.isDraft, follow: true, maxSnippet: 160, maxImagePreview: 'large' },
  });
}
```

That last line is important. Draft posts get noindex automatically. You don't have to remember to add it; it's in the logic.
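For reference, a robots config like that one ends up as a standard robots meta tag in the page head. The sketch below shows the directive string a draft and a published post would produce. toRobotsContent and robotsFor are hypothetical helpers, not the library's internals; the directive names themselves (noindex, max-snippet, max-image-preview) are the ones Google documents.

```typescript
// Hypothetical helper: turns a robots config into the content of <meta name="robots">.
interface RobotsConfig {
  index: boolean;
  follow: boolean;
  maxSnippet?: number;
  maxImagePreview?: 'none' | 'standard' | 'large';
}

function toRobotsContent(robots: RobotsConfig): string {
  const directives = [
    robots.index ? 'index' : 'noindex',
    robots.follow ? 'follow' : 'nofollow',
  ];
  if (robots.maxSnippet !== undefined) directives.push(`max-snippet:${robots.maxSnippet}`);
  if (robots.maxImagePreview) directives.push(`max-image-preview:${robots.maxImagePreview}`);
  return directives.join(', ');
}

// Same rule as the generateMetadata example: drafts are never indexed.
const robotsFor = (isDraft: boolean): RobotsConfig => ({
  index: !isDraft,
  follow: true,
  maxSnippet: 160,
  maxImagePreview: 'large',
});

// Prints: <meta name="robots" content="noindex, follow, max-snippet:160, max-image-preview:large">
console.log(`<meta name="robots" content="${toRobotsContent(robotsFor(true))}">`);
```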

If you're on Remix, the same config produces a MetaDescriptor[] array:

```typescript
import { createMetaDescriptors } from '@power-seo/meta';
import type { MetaFunction } from '@remix-run/node';

// loader is the route's own loader, defined in the same file
export const meta: MetaFunction<typeof loader> = ({ data }) => {
  if (!data?.post) return [{ title: 'Post Not Found' }];

  return createMetaDescriptors({
    title: data.post.title,
    description: data.post.excerpt,
    canonical: `https://example.com/blog/${data.post.slug}`,
    openGraph: { type: 'article', images: [{ url: data.post.coverImage }] },
  });
};
```

Same input. Different output format. The library handles the translation.

Add Structured Data

SEO for dynamic JavaScript content is harder than for static sites. One of the best ways to help Google understand your pages is structured data: JSON-LD schema markup. Schema lets search engines accurately interpret and index content that is rendered dynamically.

Google uses this to generate rich results: product ratings, breadcrumbs in search results, recipe cards, job postings, and FAQ accordions (which Google now limits to a small set of authoritative sites). All of it starts with schema.

The @power-seo/schema package gives you typed builder functions for 23 schema types. Here's a product page example:

```typescript
import { product, toJsonLdString } from '@power-seo/schema';

const schema = product({
  name: 'Wireless Headphones',
  description: 'Premium noise-cancelling headphones.',
  image: { url: 'https://example.com/headphones.jpg' },
  offers: {
    price: 149.99,
    priceCurrency: 'USD',
    availability: 'InStock',
  },
  aggregateRating: {
    ratingValue: 4.7,
    reviewCount: 312,
  },
});

console.log(toJsonLdString(schema));
```

Every field is typed. If you forget a required field, TypeScript tells you before you ship. That's the difference between structured data that shows up in rich results and structured data that silently fails.
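To see how types catch a missing field, here is a minimal hand-rolled builder for a schema.org Product. It is a hypothetical sketch (buildProduct and ProductInput are my own names), not how @power-seo/schema is implemented. Required properties are non-optional in the input type, so omitting offers is a compile error, and the availability shorthand is expanded to the full schema.org URL used in Google's product examples.

```typescript
// Hypothetical typed builder for a schema.org Product node (not @power-seo/schema's code).
type Availability = 'InStock' | 'OutOfStock' | 'PreOrder';

interface ProductInput {
  name: string;  // required
  image: string; // required
  offers: { price: number; priceCurrency: string; availability: Availability }; // required
  description?: string;
  aggregateRating?: { ratingValue: number; reviewCount: number };
}

function buildProduct(input: ProductInput): Record<string, unknown> {
  return {
    '@context': 'https://schema.org',
    '@type': 'Product',
    name: input.name,
    image: input.image,
    ...(input.description ? { description: input.description } : {}),
    offers: {
      '@type': 'Offer',
      price: input.offers.price,
      priceCurrency: input.offers.priceCurrency,
      // schema.org expects the full enumeration URL
      availability: `https://schema.org/${input.offers.availability}`,
    },
    ...(input.aggregateRating
      ? { aggregateRating: { '@type': 'AggregateRating', ...input.aggregateRating } }
      : {}),
  };
}

const headphones = buildProduct({
  name: 'Wireless Headphones',
  image: 'https://example.com/headphones.jpg',
  offers: { price: 149.99, priceCurrency: 'USD', availability: 'InStock' },
  aggregateRating: { ratingValue: 4.7, reviewCount: 312 },
});

// buildProduct({ name: 'No offers', image: 'x.jpg' }); // compile error: 'offers' is missing
console.log(JSON.stringify(headphones, null, 2));
```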

For blog posts, use the article builder and inject it into your page:

```typescript
import { article, toJsonLdString } from '@power-seo/schema';
import type { Post } from '@/lib/posts'; // assumed: your own Post type, next to getPost()

export default function BlogPost({ post }: { post: Post }) {
  const schema = article({
    headline: post.title,
    description: post.excerpt,
    datePublished: post.publishedAt,
    dateModified: post.updatedAt,
    author: { name: post.author.name, url: post.author.profileUrl },
    image: { url: post.coverImage, width: 1200, height: 630 },
  });

  return (
    <>
      {/* Rendered on the server, so crawlers see the JSON-LD in the initial HTML */}
      <script
        type="application/ld+json"
        dangerouslySetInnerHTML={{ __html: toJsonLdString(schema) }}
      />
      <article>{/* page content */}</article>
    </>
  );
}
```

One thing worth noting: toJsonLdString() escapes <, >, and & automatically. So a schema value that contains </script> can't break out of the surrounding script tag. You don't need to sanitize field values yourself.
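The escaping idea is worth understanding even if a library does it for you. A minimal sketch, assuming you serialize with JSON.stringify yourself (serializeJsonLd is a hypothetical name): replace <, >, and & with their \u escapes. JSON parsers read those escapes back as the same characters, but the HTML parser never sees them as markup, so a closing script tag can't appear in the output.

```typescript
// Hypothetical helper: serialize JSON-LD so it is safe to place inside a <script> element.
function serializeJsonLd(data: unknown): string {
  return JSON.stringify(data)
    .replace(/</g, '\\u003c') // JSON escape for '<'
    .replace(/>/g, '\\u003e') // JSON escape for '>'
    .replace(/&/g, '\\u0026'); // JSON escape for '&'
}

const hostile = { '@type': 'Article', headline: 'Oops </script><script>alert(1)</script>' };
const safe = serializeJsonLd(hostile);

console.log(safe.includes('</script>')); // false
console.log(JSON.parse(safe).headline === hostile.headline); // true
```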

For pages with multiple schema types, use schemaGraph() to combine them into a single @graph document:

```typescript
import {
  article,
  breadcrumbList,
  organization,
  schemaGraph,
  toJsonLdString,
} from '@power-seo/schema';

const graph = schemaGraph([
  article({ headline: 'My Post', datePublished: '2026-01-01', author: { name: 'Jane Doe' } }),
  breadcrumbList([
    { name: 'Home', url: 'https://example.com' },
    { name: 'Blog', url: 'https://example.com/blog' },
    { name: 'My Post' }, // the current page needs no URL
  ]),
  organization({ name: 'Acme Corp', url: 'https://example.com' }),
]);

const script = toJsonLdString(graph);
```

Google accepts either separate script tags or a single @graph, but one graph keeps related entities in a single document where they can reference each other. schemaGraph() handles the merging for you.
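For context, a @graph document is just one @context plus an array of nodes. A minimal sketch of what combining looks like (combineGraph is a hypothetical helper, not schemaGraph() itself): each node drops its own @context, and an @id lets one node reference another, for example an article pointing at its publisher instead of repeating it.

```typescript
// Hypothetical helper, not schemaGraph() itself: merge standalone JSON-LD nodes into one @graph document.
type JsonLdNode = Record<string, unknown>;

function combineGraph(nodes: JsonLdNode[]): JsonLdNode {
  const stripped = nodes.map((node) => {
    const copy: JsonLdNode = { ...node };
    delete copy['@context']; // the top-level @context applies to every node in the graph
    return copy;
  });
  return { '@context': 'https://schema.org', '@graph': stripped };
}

const graph = combineGraph([
  {
    '@context': 'https://schema.org',
    '@type': 'Organization',
    '@id': 'https://example.com/#org',
    name: 'Acme Corp',
  },
  {
    '@context': 'https://schema.org',
    '@type': 'Article',
    headline: 'My Post',
    datePublished: '2026-01-01',
    publisher: { '@id': 'https://example.com/#org' }, // reference by @id instead of duplicating
  },
]);

console.log(JSON.stringify(graph, null, 2));
```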

Generate a Sitemap

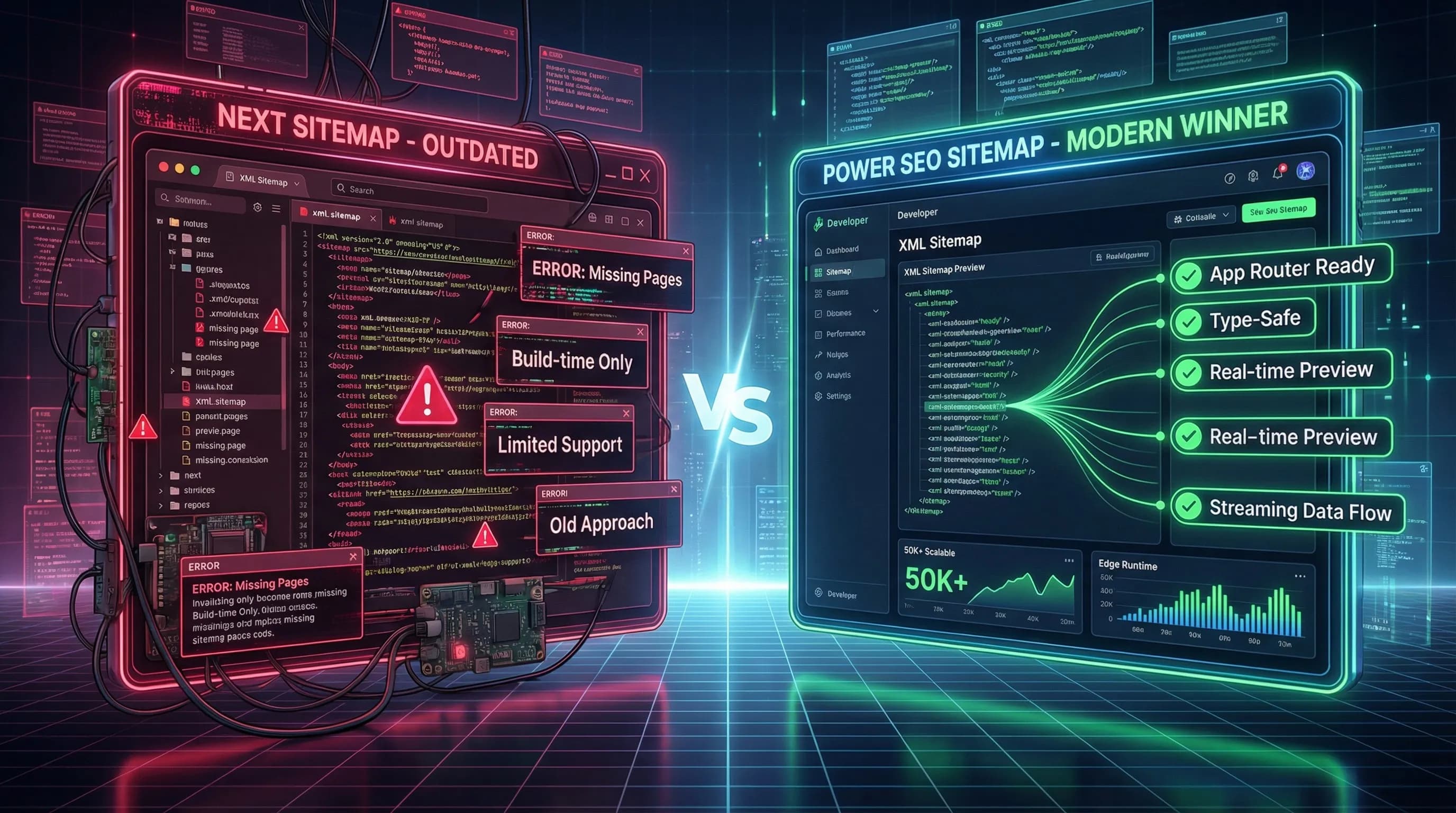

Crawlers need a sitemap. Without one, they discover pages by following links. For single page applications that's unreliable, especially for pages that are only reachable via client-side navigation. This is one of the places where SPAs and SEO collide most often, and where a solid React SEO guide can be very helpful.

The @power-seo/sitemap package generates standards-compliant XML:

```typescript
import { generateSitemap } from '@power-seo/sitemap';

const xml = generateSitemap({
  hostname: 'https://example.com',
  urls: [
    { loc: '/', lastmod: '2026-01-01', changefreq: 'daily', priority: 1.0 },
    { loc: '/about', changefreq: 'monthly', priority: 0.8 },
    { loc: '/blog/post-1', lastmod: '2026-01-15', priority: 0.6 },
  ],
});
```
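For a sense of what standards-compliant means here, the sketch below builds a sitemaps.org urlset by hand. buildUrlset and escapeXml are hypothetical names, not the package's implementation. Two details matter: every loc must be an absolute, XML-escaped URL, and Google documents that it ignores changefreq and priority, so lastmod is the field worth keeping accurate (the sketch omits the other two for that reason).

```typescript
// Hypothetical sketch of a sitemaps.org <urlset> builder.
interface SitemapUrl {
  loc: string;      // path relative to the hostname, e.g. '/about'
  lastmod?: string; // W3C date, e.g. '2026-01-15'
}

function escapeXml(value: string): string {
  return value
    .replace(/&/g, '&amp;') // must run first so later entities aren't double-escaped
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&apos;');
}

function buildUrlset(hostname: string, urls: SitemapUrl[]): string {
  const entries = urls.map(({ loc, lastmod }) => {
    const absolute = new URL(loc, hostname).href; // resolve to an absolute URL
    const lastmodTag = lastmod ? `<lastmod>${escapeXml(lastmod)}</lastmod>` : '';
    return `  <url><loc>${escapeXml(absolute)}</loc>${lastmodTag}</url>`;
  });
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    ...entries,
    '</urlset>',
  ].join('\n');
}

console.log(buildUrlset('https://example.com', [
  { loc: '/', lastmod: '2026-01-01' },
  { loc: '/search?q=a&b' },
]));
```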

In Next.js App Router, serve it from a route handler:

```typescript
// app/sitemap.xml/route.ts
import { generateSitemap } from '@power-seo/sitemap';

// Regenerate on every request (Next.js 14 caches GET route handlers by default)
export const dynamic = 'force-dynamic';

export async function GET() {
  // fetchUrlsFromCms is your own data-access function (not shown)
  const urls = await fetchUrlsFromCms();

  const xml = generateSitemap({
    hostname: 'https://example.com',
    urls,
  });

  return new Response(xml, {
    headers: { 'Content-Type': 'application/xml' },
  });
}
```

If you have more than 50,000 URLs, use splitSitemap(). It automatically chunks at the spec limit and generates a sitemap index:

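```typescript
import * as fs from 'node:fs';
import { splitSitemap } from '@power-seo/sitemap';

// largeUrlArray: your full list of URL entries, in the same shape generateSitemap() accepts
const { index, sitemaps } = splitSitemap({
  hostname: 'https://example.com',
  urls: largeUrlArray,
});

// Write each chunk, then the index that points to them
for (const { filename, xml } of sitemaps) {
  fs.writeFileSync(`./public${filename}`, xml);
}

fs.writeFileSync('./public/sitemap.xml', index);
```

For e-commerce sites, you can include image data directly in the sitemap: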

```typescript
import { generateSitemap } from '@power-seo/sitemap';

const xml = generateSitemap({
  hostname: 'https://example.com',
  urls: [
    {
      loc: '/products/blue-sneaker',
      lastmod: '2026-01-10',
      images: [
        {
          loc: 'https://cdn.example.com/sneaker-blue.jpg',
          // Google stopped using image caption and title in 2022; it reads the image loc
          caption: 'Blue sneaker — side view',
          title: 'Blue Running Sneaker',
        },
      ],
    },
  ],
});
```

Image sitemaps help Google discover product images it might not reach through crawling alone, which can shorten the time before new listings appear in image search.
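The splitting step described earlier follows from the sitemaps.org limits: at most 50,000 URLs (and 50 MB uncompressed) per sitemap file, with a sitemap index pointing at each chunk. A minimal sketch of the chunking and the index; chunk and buildSitemapIndex are hypothetical names, and the /sitemap-N.xml file naming is an assumption, not necessarily what splitSitemap() produces.

```typescript
// sitemaps.org limit: 50,000 URLs per sitemap file (and 50 MB uncompressed).
const MAX_URLS_PER_SITEMAP = 50_000;

function chunk<T>(items: T[], size: number): T[][] {
  const chunks: T[][] = [];
  for (let i = 0; i < items.length; i += size) {
    chunks.push(items.slice(i, i + size));
  }
  return chunks;
}

// Hypothetical index builder; assumes chunks are served as /sitemap-1.xml, /sitemap-2.xml, ...
function buildSitemapIndex(hostname: string, sitemapCount: number): string {
  const entries = Array.from({ length: sitemapCount }, (_, i) =>
    `  <sitemap><loc>${new URL(`/sitemap-${i + 1}.xml`, hostname).href}</loc></sitemap>`,
  );
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    ...entries,
    '</sitemapindex>',
  ].join('\n');
}

const paths = Array.from({ length: 120_000 }, (_, i) => `/products/${i}`);
const chunks = chunk(paths, MAX_URLS_PER_SITEMAP);
console.log(chunks.map((c) => c.length)); // [ 50000, 50000, 20000 ]
console.log(buildSitemapIndex('https://example.com', chunks.length));
```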

Run a Proper SEO Audit

Any list of best practices for optimizing single-page applications for search engines includes ongoing auditing. You can't fix what you don't measure, so SEO for web apps should be monitored and refined continuously. On the performance side, React Developer Tools can help by showing which components render slowly.

The @power-seo/audit package gives you a 0–100 score across four categories: meta tags, content quality, document structure, and performance.

```typescript
import { auditPage } from '@power-seo/audit';

const result = auditPage({
  url: 'https://example.com/blog/react-seo-guide',
  title: 'React SEO Guide — Best Practices for 2026',
  metaDescription:
    'Learn how to optimize React applications for search engines with meta tags, structured data, and Core Web Vitals improvements.',
  canonical: 'https://example.com/blog/react-seo-guide',
  robots: 'index, follow',
  content: '<h1>React SEO Guide</h1><p>Search engine optimization for React apps...</p>',
  headings: ['h1:React SEO Guide', 'h2:Why SEO Matters for React', 'h2:Meta Tags in React'],
  images: [{ src: '/hero.webp', alt: 'React SEO guide illustration' }],
  internalLinks: ['/blog', '/docs/meta-tags'],
  externalLinks: ['https://developers.google.com/search'],
  focusKeyphrase: 'react seo',
  wordCount: 1850,
});

console.log(result.score); // e.g. 84
console.log(result.categories);
```

You can also add this to your CI pipeline as a quality gate:

```typescript
// scripts/seo-audit.ts
import { auditSite } from '@power-seo/audit';
import { pages } from './test-pages.js'; // your own list of page inputs to audit

const report = auditSite({ pages });

const SCORE_THRESHOLD = 75;
const ALLOWED_ERRORS = 0;

// Count every error-severity rule across all audited pages
const totalErrors = report.pageResults.flatMap((p) =>
  p.rules.filter((r) => r.severity === 'error'),
).length;

if (report.score < SCORE_THRESHOLD || totalErrors > ALLOWED_ERRORS) {
  console.error('SEO audit FAILED');
  console.error(`  Average score: ${report.score} (min: ${SCORE_THRESHOLD})`);
  console.error(`  Critical errors: ${totalErrors} (max: ${ALLOWED_ERRORS})`);
  process.exit(1);
}

console.log(`SEO audit PASSED — average score: ${report.score}/100`);
```

Now your CI pipeline blocks deploys when SEO quality drops below your threshold. This is how you maintain SEO health at scale without manual review.

Check Content Quality

Single page applications often have thin content on important pages. The @power-seo/content-analysis package works like Yoast SEO, but for any JavaScript app.

```typescript
import { analyzeContent } from '@power-seo/content-analysis';

// htmlString, imageList, internalLinks and externalLinks come from your own page data
const output = analyzeContent({
  title: 'Next.js SEO Best Practices',
  metaDescription:
    'Learn how to optimize your Next.js app for search engines with meta tags and structured data.',
  focusKeyphrase: 'next.js seo',
  content: htmlString,
  images: imageList,
  internalLinks,
  externalLinks,
});
```

It checks 13 things: keyphrase density, heading structure, image alt text, word count, internal links, and more. Every check returns a good, ok, or poor status.
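Keyphrase density is the easiest of those checks to reason about. A minimal sketch, assuming the common definition (occurrences of the phrase divided by total words, as a percentage) and a naive, ASCII-only tokenizer that strips tags and trims punctuation from word edges. The package's own formula and thresholds may differ, and keyphraseDensity is a hypothetical name.

```typescript
// Hypothetical tokenizer: strip tags, lowercase, trim punctuation from word edges (ASCII only).
function toWords(html: string): string[] {
  return html
    .replace(/<[^>]+>/g, ' ')
    .toLowerCase()
    .split(/\s+/)
    .map((w) => w.replace(/^[^a-z0-9]+|[^a-z0-9]+$/g, '')) // "metadata." -> "metadata", keeps "next.js"
    .filter((w) => w.length > 0);
}

// Hypothetical density check: phrase occurrences / total words * 100.
function keyphraseDensity(html: string, keyphrase: string): number {
  const words = toWords(html);
  const phrase = toWords(keyphrase);
  if (words.length === 0 || phrase.length === 0) return 0;
  let hits = 0;
  for (let i = 0; i + phrase.length <= words.length; i++) {
    if (phrase.every((p, j) => words[i + j] === p)) hits++;
  }
  return (hits / words.length) * 100;
}

const html =
  '<h1>Next.js SEO guide</h1><p>Good Next.js SEO starts with metadata. Next.js makes it easy.</p>';
console.log(keyphraseDensity(html, 'next.js seo').toFixed(1)); // "15.4" (2 hits in 13 words)
```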

Use analyzeContent() in CI to block content that scores too low:

```typescript
// Fail the build if any check comes back 'poor'
const failures = output.results.filter((r) => r.status === 'poor');

if (failures.length > 0) {
  console.error('SEO checks failed:');
  failures.forEach((r) => console.error('  ✗', r.description));
  process.exit(1);
}
```

Use AI to Generate Better Metadata

This is where things get interesting for programmatic SEO. If you're generating thousands of pages (product listings, location pages, blog posts), writing meta descriptions by hand doesn't scale. An SEO checklist tool can help make sure every page follows the same best practices automatically.

The @power-seo/ai package is LLM-agnostic. It builds prompts and parses responses. You bring your own LLM client.

```typescript
import { buildMetaDescriptionPrompt, parseMetaDescriptionResponse } from '@power-seo/ai';
import Anthropic from '@anthropic-ai/sdk';

const prompt = buildMetaDescriptionPrompt({
  title: 'Best Coffee Shops in New York City',
  content: 'Explore the top 15 coffee shops in NYC, from specialty espresso bars in Brooklyn...',
  focusKeyphrase: 'coffee shops nyc',
});

const anthropic = new Anthropic({ apiKey: process.env.ANTHROPIC_API_KEY });

// Top-level await: run this as an ES module
const claudeResponse = await anthropic.messages.create({
  model: 'claude-opus-4-6',
  system: prompt.system,
  messages: [{ role: 'user', content: prompt.user }],
  max_tokens: prompt.maxTokens,
});

// Pass the first text block (or an empty string) to the parser
const result = parseMetaDescriptionResponse(
  claudeResponse.content[0].type === 'text' ? claudeResponse.content[0].text : '',
);

console.log(`"${result.description}" — ${result.charCount} chars, ~${result.pixelWidth}px`);
console.log(`Valid: ${result.isValid}`);
```

The parser returns character count and pixel width alongside the description. You know immediately whether it'll fit in Google's SERP without truncation.
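If a generated description comes back too long, one option is to trim it at a word boundary instead of discarding it. A minimal sketch, with two assumptions to flag: fitDescription is a hypothetical helper, and the 155-character default is a common rule of thumb rather than a Google limit. Google truncates by pixel width, so a character budget is only an approximation of what the parser's pixelWidth measures.

```typescript
// Hypothetical helper: fit a meta description into a character budget, cutting at a word boundary.
function fitDescription(text: string, maxChars = 155): string {
  const clean = text.replace(/\s+/g, ' ').trim();
  if (clean.length <= maxChars) return clean;
  const cut = clean.slice(0, maxChars - 1); // leave room for the ellipsis
  const lastSpace = cut.lastIndexOf(' ');
  const base = lastSpace > 0 ? cut.slice(0, lastSpace) : cut;
  return `${base.replace(/[\s,;:.-]+$/, '')}…`; // drop trailing punctuation before the ellipsis
}

const generated =
  'Explore the top 15 coffee shops in NYC, from specialty espresso bars in Brooklyn to cozy ' +
  'Manhattan cafés with pastries, wifi, and late hours, plus tips on when to visit each one.';

console.log(fitDescription(generated));
```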

The same pattern works for title generation:

```typescript
import { buildTitlePrompt, parseTitleResponse } from '@power-seo/ai';

const prompt = buildTitlePrompt({
  content: 'Article about the best tools for keyword research in 2026...',
  focusKeyphrase: 'keyword research tools',
  tone: 'informative',
});

// yourLLM is a placeholder for whichever LLM client you use
const rawResponse = await yourLLM.complete(prompt.system, prompt.user, prompt.maxTokens);
const results = parseTitleResponse(rawResponse);

results.forEach(({ title, charCount, pixelWidth }, i) => {
  const status = charCount <= 60 ? 'OK' : 'TOO LONG';
  console.log(`${i + 1}. "${title}" — ${charCount} chars, ~${pixelWidth}px [${status}]`);
});
```

Five title variants, each with character counts. Pick the best one.
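Picking the best variant can itself be automated. A sketch of one possible rule, which is my own assumption rather than anything the package prescribes: keep variants within 60 characters that contain the focus keyphrase, then prefer the one that uses most of the budget. pickTitle and TitleVariant are hypothetical names.

```typescript
interface TitleVariant {
  title: string;
  charCount: number;
}

// Hypothetical selection rule: within budget, contains the keyphrase, longest wins.
function pickTitle(variants: TitleVariant[], keyphrase: string, maxChars = 60): TitleVariant | undefined {
  const phrase = keyphrase.toLowerCase();
  const candidates = variants.filter(
    (v) => v.charCount <= maxChars && v.title.toLowerCase().includes(phrase),
  );
  return candidates.sort((a, b) => b.charCount - a.charCount)[0];
}

const variants: TitleVariant[] = [
  'Keyword Research Tools Compared',
  'The 12 Best Keyword Research Tools for SEO Teams in 2026',
  'Top Picks for Finding Search Terms',
].map((title) => ({ title, charCount: title.length }));

console.log(pickTitle(variants, 'keyword research tools')?.title);
```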

Check SERP Feature Eligibility

Not all SEO improvements are about rankings. Some are about rich results: FAQ boxes, how-to cards, product ratings, breadcrumbs. (Google has since limited FAQ rich results to a small set of authoritative sites and retired how-to rich results, so check current eligibility before investing in those two.) A React e-commerce SEO playbook can also help you apply these practices and make your content more discoverable in search results.

The analyzeSerpEligibility function from @power-seo/ai is fully deterministic: no LLM, no API call.

```typescript
import { analyzeSerpEligibility } from '@power-seo/ai';

const result = analyzeSerpEligibility({
  title: 'How to Install Node.js on Ubuntu',
  content: '<h2>Step 1: Update apt</h2><p>...</p><h2>Step 2: Install nvm</h2><p>...</p>',
  schema: ['HowTo'],
});
```

Run this in CI on every build. If your schema markup breaks, you find out before Google does.
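A complementary CI check is to confirm that the JSON-LD in your rendered HTML still parses and still declares the types you expect. A minimal sketch using a regex extractor; findJsonLdTypes and collectTypes are hypothetical names. It handles single nodes, arrays, and @graph documents, but it is not a full validator like Google's Rich Results Test.

```typescript
// Hypothetical CI check: which schema.org @types does the rendered HTML declare?
function collectTypes(node: unknown, out: Set<string>): void {
  if (Array.isArray(node)) {
    node.forEach((n) => collectTypes(n, out));
    return;
  }
  if (node && typeof node === 'object') {
    const record = node as Record<string, unknown>;
    const type = record['@type'];
    if (typeof type === 'string') out.add(type);
    if (Array.isArray(type)) {
      for (const t of type) if (typeof t === 'string') out.add(t);
    }
    if (record['@graph']) collectTypes(record['@graph'], out);
  }
}

function findJsonLdTypes(html: string): { types: Set<string>; parseErrors: number } {
  const types = new Set<string>();
  let parseErrors = 0;
  const pattern = /<script[^>]*type=["']application\/ld\+json["'][^>]*>([\s\S]*?)<\/script>/gi;
  for (const match of html.matchAll(pattern)) {
    try {
      collectTypes(JSON.parse(match[1] ?? ''), types);
    } catch {
      parseErrors++; // broken JSON-LD is easy to miss without a check like this
    }
  }
  return { types, parseErrors };
}

const rendered = `<script type="application/ld+json">{"@context":"https://schema.org","@graph":[{"@type":"Article"},{"@type":"BreadcrumbList"}]}</script>`;
const expected = ['Article', 'BreadcrumbList'];
const { types, parseErrors } = findJsonLdTypes(rendered);
const missing = expected.filter((t) => !types.has(t));

if (missing.length > 0 || parseErrors > 0) {
  throw new Error(`Schema check failed: missing [${missing.join(', ')}], ${parseErrors} parse error(s)`);
}
console.log('Schema check passed:', [...types]);
```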

Common SPA SEO Mistakes (and How to Avoid Them)

Mistake 1: Relying on Googlebot to render JavaScript. Google does render JavaScript, but rendering can be delayed and inconsistent. Server-side or static meta generation is always more reliable than hoping the crawler waits for your React tree to hydrate.

Mistake 2: Using the same <title> on every route. This is the single most common technical SEO failure in SPAs. Every page needs a unique, descriptive title that includes the primary keyword for that page.
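Duplicate titles are easy to catch mechanically once you have each route's rendered title. A minimal sketch; the RenderedPage shape and findDuplicateTitles are hypothetical, so feed it whatever your crawler or build step collects.

```typescript
interface RenderedPage {
  path: string;
  title: string;
}

// Hypothetical helper: groups routes by normalized title and keeps only titles used more than once.
function findDuplicateTitles(pages: RenderedPage[]): Map<string, string[]> {
  const byTitle = new Map<string, string[]>();
  for (const { path, title } of pages) {
    const key = title.trim().toLowerCase();
    byTitle.set(key, [...(byTitle.get(key) ?? []), path]);
  }
  return new Map([...byTitle].filter(([, paths]) => paths.length > 1));
}

const duplicates = findDuplicateTitles([
  { path: '/', title: 'Acme' },
  { path: '/pricing', title: 'Acme' },
  { path: '/blog/spa-seo', title: 'SEO for Single Page Applications' },
]);

for (const [title, paths] of duplicates) {
  console.log(`"${title}" is used on: ${paths.join(', ')}`);
}
```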

Mistake 3: Forgetting noindex on draft or internal pages. If test pages, admin routes, or unpublished content get indexed, they dilute your crawl budget and can hurt rankings. Use the robots field in your metadata config to handle this automatically.

Mistake 4: Generating sitemaps at build time only. For dynamic content — new blog posts, fresh product listings — your sitemap needs to reflect current inventory. Use a server-rendered route handler that fetches URLs at request time.

Mistake 5: Ignoring Core Web Vitals. Google uses page experience signals as a ranking factor. A technically correct SPA that scores poorly on LCP, CLS, or INP can still rank below a competing page with better signals. Auditing SEO health means auditing performance too.
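The Core Web Vitals thresholds Google publishes are fixed numbers, so they are easy to encode in an audit script. The values below come from web.dev: LCP good at or under 2.5 s and poor above 4 s, INP good at or under 200 ms and poor above 500 ms, CLS good at or under 0.1 and poor above 0.25, each assessed at the 75th percentile of page loads. The rateVital helper itself is a hypothetical sketch.

```typescript
type Vital = 'LCP' | 'INP' | 'CLS';
type Rating = 'good' | 'needs-improvement' | 'poor';

// [good upper bound, poor threshold]; LCP and INP in milliseconds, CLS is unitless.
const THRESHOLDS: Record<Vital, [number, number]> = {
  LCP: [2500, 4000],
  INP: [200, 500],
  CLS: [0.1, 0.25],
};

// Hypothetical helper: rate a 75th-percentile field value against the published thresholds.
function rateVital(metric: Vital, p75: number): Rating {
  const [good, poor] = THRESHOLDS[metric];
  if (p75 <= good) return 'good';
  if (p75 <= poor) return 'needs-improvement';
  return 'poor';
}

console.log(rateVital('LCP', 3100)); // needs-improvement
console.log(rateVital('CLS', 0.05)); // good
```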

SPA SEO vs. Traditional Website SEO: Key Differences

The fundamental SEO principles (unique titles, quality content, backlinks, structured data) are identical whether you're running a WordPress blog or a Next.js SPA. What differs is the execution.

Traditional sites handle most of this automatically through server rendering. SPAs require you to replicate that infrastructure deliberately:

Server rendering or static generation must produce fully populated HTML. Meta tags must be injected at the framework level, not applied after mount via document.title. Sitemaps can't rely on file-system crawling; they need to be generated from your actual data model. And schema markup has to be server-injected, not client-rendered. An AI SEO tool or Google Apps Script automations for SEO can help you check that these pieces are implemented correctly.

That's not a reason to avoid SPAs. It's a reason to build the infrastructure correctly from the start.

Putting It Together

Here's how each piece maps to a specific problem:

Discovery is handled by server-side meta generation. Googlebot needs to see your titles, descriptions, and canonical URLs in the HTML response, not after JavaScript runs. @power-seo/meta and @power-seo/react handle this at the framework level.

Understanding is handled by structured data. Schema tells Google what your page is about, what type of content it is, and whether it qualifies for rich results. @power-seo/schema makes this typed and safe.

Crawl coverage is handled by sitemaps. Don't rely on link discovery alone. Submit a sitemap. Update it automatically. @power-seo/sitemap generates standards-compliant XML with image and video extensions.

Ongoing quality is handled by automated auditing. The moment you stop monitoring SEO health, it drifts. Add @power-seo/audit to your CI pipeline and block regressions before they ship.

Copy quality is handled by content analysis. Thin content, poor keyphrase usage, missing internal links: these all hurt rankings. @power-seo/content-analysis catches them programmatically.

Scale is handled by AI-assisted metadata. If you have hundreds of pages, you can't write unique meta descriptions manually. @power-seo/ai gives you a provider-agnostic way to generate them at build time.

React SPA SEO: Before & After Using Power SEO

When I first launched my React SPA, I assumed fast client-side navigation was enough for SEO. But speed alone doesn’t guarantee rankings. Single-page applications need proper meta handling, structured data, and server-rendered content to be search-friendly. Using Power SEO transformed my SPA into a discoverable, rich-result-ready powerhouse. Here’s a clear before-and-after view of my SPA’s SEO health:

| SEO Aspect | Before | After |
| --- | --- | --- |
| Meta Tags | 3/10 | 9/10 |
| Rendering (SSR) | 2/10 | 10/10 |
| Structured Data | 1/10 | 9/10 |
| Sitemaps | 4/10 | 10/10 |
| Content Quality | 3/10 | 9/10 |
| AI Metadata | 1/10 | 9/10 |
| SEO Audits | 2/10 | 9/10 |

Final Thoughts

SEO for single-page applications (SPAs) isn’t about rewriting your content. The problem isn’t weak content; most SPAs underperform because they lack meta tags, structured data, and proper crawling mechanisms. These are engineering challenges, and the solutions already exist.

Enter the @power-seo suite: 17 independent, TypeScript-first tools built to solve these exact issues. Use only what you need, run them server-side, and integrate them effortlessly into your CI pipeline. That is how you transform your SPA into a search-friendly powerhouse.

Discover more at CyberCraft Bangladesh! Want to build your own SEO tools? Explore the code on GitHub, customize it, and create tools tailored to your needs.

Frequently Asked Questions About SEO for Single Page Applications

1. Do SPAs hurt SEO by default?

SPAs aren’t inherently bad for SEO, but client-side rendering can hide content from crawlers. Use server-side meta and structured data to ensure pages are indexable.

2. Can Google crawl JavaScript content?

Yes, but rendering is slow and inconsistent. Server-side HTML with meta tags and schema ensures reliable indexing.

3. How do I scale meta tags for thousands of pages?

Manual meta tags don’t scale. Use tools like @power-seo/meta or AI-assisted generation to automatically create unique titles, descriptions, and canonicals.

4. Why does structured data matter for SPAs?

Structured data tells Google your content type (articles, products, FAQs), helping generate rich results. Server-side injection ensures it’s always visible to crawlers.

5. How often should sitemaps be updated?

Sitemaps should reflect your latest content. Generate them automatically for dynamic sites and split large sitemaps to follow Google’s limits.

6. Can AI help with SEO?

Yes. AI can generate meta descriptions and titles, and combined with deterministic checks such as SERP eligibility analysis, it makes programmatic SEO scalable across hundreds or thousands of pages.
