React E-commerce SEO Playbook for Maximum Traffic in 2026

When I first started working on E-commerce SEO, I thought shipping my React store was the finish line. Products loaded fast. The UI looked clean. The checkout worked perfectly. I was proud of it. Then I opened Google Search Console.

Half my product pages were not indexed. The ones that were indexed had no meta descriptions showing. None of them had star ratings in search results. Social shares showed blank cards with no images.

All that work. Zero search visibility. That moment taught me something important. Building a React app and building an SEO-ready React app are two completely different things. That’s where React SEO becomes critical, and in e-commerce, that difference costs you real money every single day.

So today I want to share exactly what I've learned. Real patterns. Real tools. Real code. I'll be walking through the @power-seo ecosystem, 17 independently installable TypeScript packages that solve specific SEO problems systematically. Let’s get into it.

Core Problem With React E-commerce SEO

Here's what most developers don't fully understand when they start. This is actually one of the biggest SEO mistakes React developers make: they build a beautiful SPA and assume Google handles everything automatically.

React SPAs render content in the browser using JavaScript. Google can process JavaScript, but not perfectly and not instantly. Googlebot crawls your page, queues it for rendering, and comes back later. Sometimes much later. Sometimes not at all. For a blog, that delay is annoying. For an e-commerce store, it's a disaster.

SEO for single-page applications is fundamentally different from SEO for traditional server-rendered websites. Every product page is a potential landing page targeting buyer-intent keywords. Every category page is a topical hub that should be driving organic traffic. When Google can't read them properly, you simply don't rank. And if you don't rank, you don't sell.

The specific problems I keep seeing on React e-commerce projects are always the same. Missing or duplicated title tags. No Open Graph metadata for social sharing. Product pages without structured data, so no star ratings appear in search results. Images with empty or useless alt text. No canonical URLs, which creates duplicate content issues. Orphan product pages that no internal link points to. Sitemaps that are either missing entirely or full of broken URLs.

Each of these is fixable. The key is fixing them systematically rather than going page by page and hoping you catch everything. That's exactly what a solid e-commerce SEO checklist helps you do: it turns a chaotic process into a repeatable system.

Start With Meta Tags

I know. Meta tags sound basic. But I promise you, I still find them broken on sites that have been live for years.

Every single product page needs a unique title tag, a meta description between 120 and 160 characters, and a canonical URL. That's the bare minimum to show up properly in search results.
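Those three requirements are easy to check mechanically across a whole catalog. Here's a minimal sketch of such a check in plain TypeScript. `PageMeta` and `auditMeta` are hypothetical names used for illustration, not part of @power-seo/meta, and the 120 to 160 character range is the guideline above rather than a hard Google limit.

```typescript
// Hypothetical helper for illustration; not the @power-seo/meta API.
interface PageMeta {
  url: string;
  title: string;
  description: string;
  canonical?: string;
}

interface MetaIssue {
  url: string;
  problem: string;
}

// Checks the bare minimum: unique title, description length, canonical URL.
function auditMeta(pages: PageMeta[], minDesc = 120, maxDesc = 160): MetaIssue[] {
  const issues: MetaIssue[] = [];
  const seenTitles = new Map<string, string>(); // normalized title -> first URL using it

  for (const page of pages) {
    const key = page.title.trim().toLowerCase();
    const firstUrl = seenTitles.get(key);
    if (firstUrl !== undefined) {
      issues.push({ url: page.url, problem: `duplicate title (also on ${firstUrl})` });
    } else {
      seenTitles.set(key, page.url);
    }

    const len = page.description.trim().length;
    if (len < minDesc || len > maxDesc) {
      issues.push({ url: page.url, problem: `description is ${len} chars (want ${minDesc}-${maxDesc})` });
    }

    if (!page.canonical) {
      issues.push({ url: page.url, problem: 'missing canonical URL' });
    }
  }
  return issues;
}
```

Titles are compared case-insensitively, so "Blue Widget" and "blue widget" count as duplicates.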

For Next.js App Router projects, @power-seo/meta plugs directly into the built-in metadata system. You give it one configuration object and it outputs exactly what Next.js needs. No custom boilerplate. No manual mapping.

```bash
npm install @power-seo/meta
```

Here's how I wire it up on a dynamic product page:

```tsx
// app/products/[slug]/page.tsx
import { createMetadata } from '@power-seo/meta';
import { getProduct } from '@/lib/products';

// Note: in Next.js 15+, `params` is a Promise. There you would type it as
// `{ params: Promise<{ slug: string }> }` and read it with `const { slug } = await params;`.
export async function generateMetadata({ params }: { params: { slug: string } }) {
  const product = await getProduct(params.slug);

  return createMetadata({
    title: product.name,
    description: product.summary,
    canonical: `https://example.com/products/${product.slug}`,
    openGraph: {
      type: 'website',
      images: [{ url: product.image, width: 1200, height: 630, alt: product.name }],
    },
    // Keep drafts out of the index; allow 160-character snippets and large image previews.
    robots: { index: !product.isDraft, follow: true, maxSnippet: 160, maxImagePreview: 'large' },
  });
}
```

One function call handles all of it. Pay attention to maxSnippet and maxImagePreview in that robots config. They correspond to Google's max-snippet and max-image-preview robots directives, which tell Google the maximum number of characters it may show in your text snippet and whether it may display a large image preview. Almost every developer I've worked with skips these two directives entirely. They make a real difference to how your listings look on the search results page.
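For reference, here's roughly what those two settings turn into in the page head. This sketch assumes the config is serialized into a standard `<meta name="robots">` tag using the directive syntax Google documents (`max-snippet:[number]`, `max-image-preview:[none|standard|large]`). `robotsContent` is a hypothetical helper, and I haven't checked the exact string @power-seo/meta emits.

```typescript
// Hypothetical helper for illustration; not the @power-seo/meta API.
interface RobotsConfig {
  index: boolean;
  follow: boolean;
  maxSnippet?: number; // -1 = no limit, 0 = no text snippet
  maxImagePreview?: 'none' | 'standard' | 'large';
}

// Builds the value for the content attribute of <meta name="robots">.
function robotsContent(cfg: RobotsConfig): string {
  const parts = [cfg.index ? 'index' : 'noindex', cfg.follow ? 'follow' : 'nofollow'];
  if (cfg.maxSnippet !== undefined) parts.push(`max-snippet:${cfg.maxSnippet}`);
  if (cfg.maxImagePreview !== undefined) parts.push(`max-image-preview:${cfg.maxImagePreview}`);
  return parts.join(', ');
}

console.log(robotsContent({ index: true, follow: true, maxSnippet: 160, maxImagePreview: 'large' }));
// index, follow, max-snippet:160, max-image-preview:large
```

A draft product (`index: false`) would produce `noindex, follow` instead.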

Server-Side Rendering Fixes the Indexing Problem

Before we go deeper into individual optimizations, I want to address something foundational.

Server-Side Rendering is the most reliable solution to the React indexing problem. When your pages render HTML on the server before sending it to the browser, Googlebot gets fully formed content on the first request. No JavaScript execution required. No rendering queue delay.

Next.js makes this straightforward. Your generateMetadata() function runs server-side. Your data fetching in server components runs server-side. The HTML that reaches Google already contains your title, description, product information, and structured data.

This is why I default to Next.js for e-commerce React projects. The SSR foundation means you're not fighting Google's JavaScript rendering pipeline on every single page.

If you're working on an existing SPA without SSR, fixing the rendering architecture is the highest-leverage change you can make. Everything else in this article helps, but SSR helps more than all of it combined. It is also the single most important step toward building a truly search-engine-friendly e-commerce store, because Google gets clean, readable HTML from the very first request.
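A quick way to confirm SSR is actually working is to look at the raw HTML response, before any JavaScript runs, and check that the SEO-critical tags are already there. The sketch below is a rough heuristic under that assumption: `checkServerHtml` is a hypothetical helper, and regex matching is no substitute for a real HTML parser.

```typescript
// Hypothetical helper for illustration: inspects raw server HTML (no JS executed).
// Regex checks are a rough heuristic, not a full HTML parser.
interface SsrCheck {
  hasTitle: boolean;
  hasMetaDescription: boolean;
  hasJsonLd: boolean;
}

function checkServerHtml(html: string): SsrCheck {
  return {
    hasTitle: /<title>[^<]+<\/title>/i.test(html),
    // Assumes name="description" appears before content="..." in the tag.
    hasMetaDescription: /<meta\s+[^>]*name=["']description["'][^>]*content=["'][^"']+["']/i.test(html),
    hasJsonLd: /<script[^>]*type=["']application\/ld\+json["']/i.test(html),
  };
}

// Usage in any runtime with fetch (e.g. Node 18+):
// const html = await (await fetch('https://example.com/products/widget')).text();
// console.log(checkServerHtml(html));
```

If all three flags come back false for a product URL, you're most likely serving an empty client-rendered shell.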

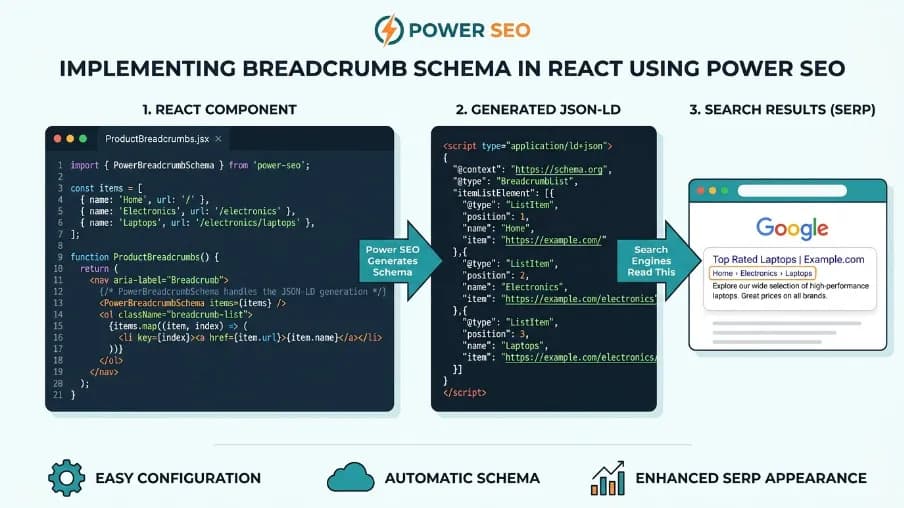

Product Structured Data

This is the part that actually changes your click-through rate. Google can show star ratings, price information, and stock availability directly in search results. These are called rich results, and they make your listing stand out visually from every plain-text result around it. For businesses focusing on affordable e-commerce SEO, implementing rich results is a powerful strategy, and studies consistently report higher CTR for listings that have them.

But they can only appear when your product pages include correct structured data markup, and even then Google doesn't guarantee them.

@power-seo/schema gives you type-safe JSON-LD builders for 23 different schema types, including Product.

```bash
npm install @power-seo/schema
```

Here's how I add Product schema to a Next.js page:

```tsx
import { product, toJsonLdString } from '@power-seo/schema';

// Values are hardcoded for illustration; in a real page they come from your product data.
export default function ProductPage() {
  const schema = product({
    name: 'Wireless Headphones',
    description: 'Premium noise-cancelling headphones.',
    image: { url: 'https://example.com/headphones.jpg' },
    offers: {
      price: 149.99,
      priceCurrency: 'USD',
      availability: 'InStock',
    },
    aggregateRating: {
      ratingValue: 4.7,
      reviewCount: 312,
    },
  });

  return (
    <>
      <script
        type="application/ld+json"
        dangerouslySetInnerHTML={{ __html: toJsonLdString(schema) }}
      />
      <article>{/* page content */}</article>
    </>
  );
}
```

The toJsonLdString() function automatically escapes <, >, and & characters, so if a schema field value contains something like </script>, it cannot break out of the surrounding script tag. You don't need to sanitize your field values separately; that protection is built in.
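I haven't inspected the internals of toJsonLdString(), but the standard technique for this kind of escaping looks like the sketch below: serialize with `JSON.stringify`, then replace `<`, `>` and `&` with their `\u003c`-style JSON escapes. The output is still valid JSON that parses back to the same object. `safeJsonLd` is a hypothetical name.

```typescript
// Hypothetical helper for illustration; not the @power-seo/schema implementation.
// <, > and & only ever appear inside JSON strings, so swapping them for
// \u003c / \u003e / \u0026 keeps the JSON valid while making it impossible
// for a value like "</script>" to close the surrounding tag.
function safeJsonLd(data: unknown): string {
  return JSON.stringify(data)
    .replace(/</g, '\\u003c')
    .replace(/>/g, '\\u003e')
    .replace(/&/g, '\\u0026');
}

const schema = {
  '@context': 'https://schema.org',
  '@type': 'Product',
  name: 'Widget </script><script>alert(1)</script>',
};

const out = safeJsonLd(schema);
console.log(out.includes('</script>')); // false
```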

If you prefer a cleaner component-based approach, there's a React component for every schema type:

```tsx
import { ProductJsonLd } from '@power-seo/schema/react';

<ProductJsonLd
  name="Wireless Headphones"
  description="Premium noise-cancelling headphones."
  image={{ url: 'https://example.com/headphones.jpg' }}
  offers={{
    price: 149.99,
    priceCurrency: 'USD',
    availability: 'InStock',
  }}
  aggregateRating={{
    ratingValue: 4.7,
    reviewCount: 312,
  }}
/>
```

One component. It renders the script tag for you.

Validation Step Nobody Does

Here's something I've started treating as non-negotiable on every project.

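When structured data is missing required fields, nothing on your page breaks. The page simply stops being eligible for rich results, and unless you're watching the rich result reports in Search Console, you only find out when the stars disappear from your listings and you're left wondering what happened.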

validateSchema() works like a free SEO audit tool specifically for your structured data. It catches missing fields before the code ever reaches production:

```typescript
import { product, validateSchema } from '@power-seo/schema';

const schema = product({ name: 'Incomplete Product' }); // missing offers, image
const result = validateSchema(schema);
// result.valid → false
// result.issues → [{ severity: 'error', field: 'offers', message: 'offers is required for Product' }]

if (!result.valid) {
  const errors = result.issues.filter((i) => i.severity === 'error');
  console.error(`${errors.length} validation error(s) found`);
  errors.forEach((i) => console.error(` ✗ [${i.field}] ${i.message}`));
  process.exit(1);
}
```

I run this in CI. If schema validation fails, the build fails. Nothing with broken structured data ships to production.
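If you want to see what a check like this boils down to, here's a minimal sketch. The required and recommended field lists are assumptions chosen to mirror the example above, not Google's full Product requirements, so treat Google's Rich Results Test as the final word. `checkProductFields` is a hypothetical helper.

```typescript
// Hypothetical helper for illustration; not the @power-seo/schema API.
interface SchemaIssue {
  severity: 'error' | 'warning';
  field: string;
  message: string;
}

// Assumed rule set for illustration only, NOT Google's complete requirements.
const REQUIRED = ['name', 'image', 'offers'];
const RECOMMENDED = ['aggregateRating', 'description'];

function checkProductFields(schema: Record<string, unknown>): SchemaIssue[] {
  const isMissing = (field: string): boolean =>
    schema[field] === undefined || schema[field] === null || schema[field] === '';

  const issues: SchemaIssue[] = [];
  for (const field of REQUIRED) {
    if (isMissing(field)) issues.push({ severity: 'error', field, message: `${field} is required for Product` });
  }
  for (const field of RECOMMENDED) {
    if (isMissing(field)) issues.push({ severity: 'warning', field, message: `${field} is recommended for Product` });
  }
  return issues;
}

const issues = checkProductFields({ '@type': 'Product', name: 'Incomplete Product' });
const errors = issues.filter((i) => i.severity === 'error');
console.log(errors.map((e) => e.field)); // errors for 'image' and 'offers'
```

In CI, a non-empty `errors` list is the signal to fail the build.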

Product Images Hurt More Than You'd Expect

Product images are where I see some of the worst SEO problems on e-commerce sites.

There are three distinct image SEO concerns worth addressing separately. Alt text quality. Lazy loading correctness. And image format optimization.

@power-seo/images covers all three.

```bash
npm install @power-seo/images
```

Alt Text Problems Are Subtle

It's not just about having alt text. It's about having useful alt text.

Filenames used as alt text like IMG_9821 are a common problem I find on product imports. Empty alt attributes on primary product photos. Alt text so short it carries no meaning. Duplicate alt text across multiple product images on the same page. None of these are caught by a basic linter.

```typescript
import { analyzeAltText } from '@power-seo/images';

const result = analyzeAltText(
  [
    { src: '/hero.jpg', alt: '' },
    { src: '/IMG_9821.jpg', alt: 'IMG_9821' },
    { src: '/product.webp', alt: 'Blue widget on white background' },
  ],
  'blue widget',
);

result.issues.forEach((issue) => {
  console.log(`[${issue.severity}] ${issue.src}: ${issue.message}`);
});
```

It checks for six specific issue types and flags each one with a severity level.
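I don't know the exact six checks analyzeAltText() runs, but here's a sketch of the kinds of problems described above: empty alt text, filename-style alt text, very short alt text, and duplicates. The filename pattern and the five-character minimum are assumptions, and the sketch treats every image as a product photo, since an empty alt is legitimate for purely decorative images. `checkAltText` is a hypothetical helper.

```typescript
// Hypothetical helper for illustration; not the @power-seo/images API.
interface ImageInput {
  src: string;
  alt: string;
}

interface AltIssue {
  src: string;
  severity: 'error' | 'warning';
  message: string;
}

// Camera-style names ("IMG_9821", "DSC-0042") or anything ending in an image extension.
const FILENAME_LIKE = /^(img|dsc|dscn|pxl)[-_]?\d+$|\.(jpe?g|png|gif|webp|avif)$/i;

// Assumes every image passed in is a product photo (empty alt is fine for decorative images).
function checkAltText(images: ImageInput[], minLength = 5): AltIssue[] {
  const issues: AltIssue[] = [];
  const seen = new Set<string>();
  for (const { src, alt } of images) {
    const text = alt.trim();
    if (text === '') {
      issues.push({ src, severity: 'error', message: 'empty alt text' });
      continue;
    }
    if (FILENAME_LIKE.test(text)) {
      issues.push({ src, severity: 'error', message: `alt text looks like a filename: "${text}"` });
    } else if (text.length < minLength) {
      issues.push({ src, severity: 'warning', message: `alt text too short: "${text}"` });
    }
    const key = text.toLowerCase();
    if (seen.has(key)) issues.push({ src, severity: 'warning', message: `duplicate alt text: "${text}"` });
    seen.add(key);
  }
  return issues;
}
```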

Core Web Vitals SEO and Lazy Loading Done Wrong

This is the subtle one that trips up a lot of React developers. Core Web Vitals are a real ranking signal, and lazy loading is directly connected to them. Adding loading="lazy" to your hero product image seems logical. But if that image is above the fold, you've just delayed Largest Contentful Paint (LCP). That's a regression that can hurt your rankings.

On the flip side, images below the fold without loading="lazy" waste bandwidth on page load.

```typescript
import { auditLazyLoading } from '@power-seo/images';

const result = auditLazyLoading([
  { src: '/hero.jpg', loading: 'lazy', isAboveFold: true, width: 1200, height: 630 },
  { src: '/section2.jpg', loading: undefined, isAboveFold: false, width: 800, height: 500 },
]);

result.issues.forEach((issue) => {
  console.log(`[${issue.severity}] ${issue.title}: ${issue.description}`);
});
```

The audit is CWV-aware. It understands the difference between above-fold and below-fold images and flags each situation correctly.
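The underlying rules are simple enough to sketch directly. This version assumes you already know whether each image is above the fold for your target viewport, which is the hard part in practice. `checkLazyLoading` is a hypothetical helper, and the width/height check is an extra rule added here because missing dimensions can cause layout shift (CLS).

```typescript
// Hypothetical helper for illustration; not the @power-seo/images API.
interface LazyImage {
  src: string;
  loading?: 'lazy' | 'eager';
  isAboveFold: boolean; // assumed to be known for your target viewport
  width?: number;
  height?: number;
}

interface LazyIssue {
  src: string;
  severity: 'error' | 'warning';
  message: string;
}

function checkLazyLoading(images: LazyImage[]): LazyIssue[] {
  const issues: LazyIssue[] = [];
  for (const img of images) {
    // Lazy-loading an above-the-fold image (e.g. the hero shot) delays LCP.
    if (img.isAboveFold && img.loading === 'lazy') {
      issues.push({ src: img.src, severity: 'error', message: 'above-the-fold image is lazy-loaded; this can delay LCP' });
    }
    // Below-the-fold images that load eagerly waste bandwidth on initial load.
    if (!img.isAboveFold && img.loading !== 'lazy') {
      issues.push({ src: img.src, severity: 'warning', message: 'below-the-fold image is not lazy-loaded' });
    }
    // Without explicit dimensions the browser can't reserve space, which can cause layout shift.
    if (img.width === undefined || img.height === undefined) {
      issues.push({ src: img.src, severity: 'warning', message: 'missing width/height attributes' });
    }
  }
  return issues;
}
```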

Product Images Need Their Own Sitemap

If you want your product images to appear in Google Images and drive additional organic traffic, an image sitemap using the image: namespace extension helps Google discover them, especially images it might not find by crawling your page HTML.

```typescript
import { generateImageSitemap } from '@power-seo/images';

const sitemapXml = generateImageSitemap([
  {
    pageUrl: 'https://example.com/products/widget',
    images: [
      { src: '/products/widget.jpg', alt: 'Blue widget' },
      { src: '/products/widget-detail.webp', alt: 'Widget detail view' },
    ],
  },
]);
```

Standards-compliant XML output. One function call.
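Here's a minimal sketch of what an image sitemap looks like under the hood, assuming Google's image extension namespace (`http://www.google.com/schemas/sitemap-image/1.1`). Relative image paths are resolved against the page URL. Only `<image:loc>` is emitted, because Google deprecated the other `image:*` child tags such as caption and title. `imageSitemap` is a hypothetical helper, not the @power-seo/images API.

```typescript
// Hypothetical helper for illustration; not the @power-seo/images API.
interface ImageEntry {
  pageUrl: string;
  images: { src: string }[];
}

// Escapes the five XML special characters.
function xmlEscape(s: string): string {
  return s
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&apos;');
}

function imageSitemap(entries: ImageEntry[]): string {
  const urls = entries.map(({ pageUrl, images }) => {
    const imgs = images
      // Resolve relative paths like "/products/widget.jpg" against the page URL.
      .map(({ src }) => `    <image:image><image:loc>${xmlEscape(new URL(src, pageUrl).href)}</image:loc></image:image>`)
      .join('\n');
    return `  <url>\n    <loc>${xmlEscape(pageUrl)}</loc>\n${imgs}\n  </url>`;
  });
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9" xmlns:image="http://www.google.com/schemas/sitemap-image/1.1">',
    ...urls,
    '</urlset>',
  ].join('\n');
}
```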

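Generate Your Sitemap Properly

A large e-commerce store might have tens of thousands of product and category pages, plus countless filter combinations. Google needs a roadmap to the pages that matter, which means your canonical product and category URLs rather than every filtered variant.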

@power-seo/sitemap handles sitemap generation with correct spec compliance.

```bash
npm install @power-seo/sitemap
```

For a Next.js App Router project, I serve the sitemap from a route handler:

```typescript
// app/sitemap.xml/route.ts
import { generateSitemap } from '@power-seo/sitemap';

export async function GET() {
  // fetchAllProductUrls() is a placeholder for your own catalog query.
  const urls = await fetchAllProductUrls();

  const xml = generateSitemap({
    hostname: 'https://example.com',
    urls,
  });

  return new Response(xml, {
    headers: { 'Content-Type': 'application/xml' },
  });
}
```

The sitemap protocol caps a single file at 50,000 URLs (and 50 MB uncompressed). When your catalog grows past that, splitSitemap() handles the chunking and index generation automatically:

```typescript
import { writeFileSync } from 'node:fs';
import { splitSitemap } from '@power-seo/sitemap';

// largeUrlArray is a placeholder for your full list of catalog URLs.
const { index, sitemaps } = splitSitemap({
  hostname: 'https://example.com',
  urls: largeUrlArray,
});

for (const { filename, xml } of sitemaps) {
  writeFileSync(`./public${filename}`, xml);
}

writeFileSync('./public/sitemap.xml', index);
```

The sitemap index references all the child sitemaps correctly. You just write the files.
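Splitting is conceptually straightforward: chunk the URL list, write each chunk as a `<urlset>` file, and list every file in a `<sitemapindex>`. The sketch below assumes child files are named `/sitemap-N.xml`. The names and helpers are hypothetical, not the @power-seo/sitemap API, and it only enforces the URL count, not the 50 MB size cap.

```typescript
// Hypothetical helpers for illustration; not the @power-seo/sitemap API.
// Sitemap protocol limit: 50,000 URLs per file (the 50 MB cap is not checked here).
const MAX_URLS_PER_SITEMAP = 50000;

function escapeXml(s: string): string {
  return s
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&apos;');
}

function chunk<T>(items: T[], size: number): T[][] {
  const out: T[][] = [];
  for (let i = 0; i < items.length; i += size) out.push(items.slice(i, i + size));
  return out;
}

interface SplitResult {
  index: string;
  sitemaps: { filename: string; xml: string }[];
}

// URLs may be absolute or paths relative to `hostname`.
function splitIntoSitemaps(hostname: string, urls: string[], maxPerFile = MAX_URLS_PER_SITEMAP): SplitResult {
  const sitemaps = chunk(urls, maxPerFile).map((group, i) => ({
    filename: `/sitemap-${i + 1}.xml`,
    xml: [
      '<?xml version="1.0" encoding="UTF-8"?>',
      '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
      ...group.map((u) => `  <url><loc>${escapeXml(new URL(u, hostname).href)}</loc></url>`),
      '</urlset>',
    ].join('\n'),
  }));

  // The index lists every child sitemap by absolute URL.
  const index = [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    ...sitemaps.map((s) => `  <sitemap><loc>${escapeXml(new URL(s.filename, hostname).href)}</loc></sitemap>`),
    '</sitemapindex>',
  ].join('\n');

  return { index, sitemaps };
}
```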

Thin Product Descriptions Are an Invisible Traffic Killer

Google tends to push thin content down. A product page with a 40-word description is competing against category guides with 1,200 words of useful information. Even with well-optimized meta tags, you won't win that fight. Affordable e-commerce SEO tooling can help you find those thin pages and strengthen your content strategy before they cost you rankings.

@power-seo/content-analysis runs Yoast-style scoring on your content in TypeScript, outside any CMS.

```bash
npm install @power-seo/content-analysis
```

```typescript
import { analyzeContent } from '@power-seo/content-analysis';

const output = analyzeContent({
  title: 'Best Running Shoes for Beginners',
  metaDescription: 'Discover the best running shoes for beginners with our expert guide.',
  focusKeyphrase: 'running shoes for beginners',
  content: '<h1>Best Running Shoes</h1><p>Finding the right running shoes...</p>',
});

console.log(output.score); // e.g. 38
console.log(output.maxScore); // e.g. 55
```

It runs 13 checks across keyphrase density, heading structure, word count, image alt text, internal and external link presence, and more. Each check returns a status of good, ok, or poor.
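Two of those checks, word count and keyphrase density, are easy to reason about once you see them computed. Here's a minimal sketch assuming density means keyphrase occurrences per 100 words, which is one common definition; I don't know the exact formula @power-seo/content-analysis uses. `contentStats` is a hypothetical helper.

```typescript
// Hypothetical helper for illustration; not the @power-seo/content-analysis API.
// The regex tag-stripper and ASCII-only word pattern are rough approximations,
// fine for English scoring but not for sanitizing HTML.
function toWords(text: string): string[] {
  return text
    .replace(/<[^>]*>/g, ' ')
    .toLowerCase()
    .split(/[^a-z0-9']+/)
    .filter((w) => w.length > 0);
}

interface ContentStats {
  wordCount: number;
  keyphraseCount: number;
  densityPercent: number; // keyphrase occurrences per 100 words
}

function contentStats(html: string, keyphrase: string): ContentStats {
  const tokens = toWords(html);
  const phrase = toWords(keyphrase);
  let count = 0;
  if (phrase.length > 0) {
    // Count non-overlapping occurrences of the keyphrase as a consecutive run of words.
    let i = 0;
    while (i + phrase.length <= tokens.length) {
      if (phrase.every((w, j) => tokens[i + j] === w)) {
        count++;
        i += phrase.length;
      } else {
        i++;
      }
    }
  }
  const densityPercent = tokens.length === 0 ? 0 : (count / tokens.length) * 100;
  return { wordCount: tokens.length, keyphraseCount: count, densityPercent };
}
```

A CI gate can then fail any page whose `wordCount` falls below a threshold you choose.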

I use this in CI to block thin content from shipping:

```typescript
const failures = output.results.filter((r) => r.status === 'poor');

if (failures.length > 0) {
  console.error('SEO checks failed:');
  failures.forEach((r) => console.error(' ✗', r.description));
  process.exit(1);
}
```

Think of this as your automated SEO checklist running on every single deploy. You define the rules once. The system enforces them forever.

How to Protect Link Equity

E-commerce stores change constantly. Products get discontinued. Categories get restructured. Seasonal collections come and go. Old URLs break.

Every 404 that used to be a product page is a leak. Whatever ranking signals and backlinks that URL had accumulated are gone. A 301 redirect preserves most of that equity and passes it to the new URL.

@power-seo/redirects gives you a typed rule engine you define once and apply across all your frameworks.

```bash
npm install @power-seo/redirects
```

I keep all my rules in one shared file:

```typescript
// redirects.config.ts
import type { RedirectRule } from '@power-seo/redirects';

export const rules: RedirectRule[] = [
  { source: '/old-products/:id', destination: '/products/:id', statusCode: 301 },
  { source: '/sale/*', destination: '/deals/*', statusCode: 301 },
  { source: '/old-about', destination: '/about', statusCode: 301 },
];
```

Then I plug that into Next.js:

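```typescript
// next.config.ts (TypeScript config files are supported from Next.js 15;
// a plain next.config.js can't reliably require() the .ts rules file)
import type { NextConfig } from 'next';
import { toNextRedirects } from '@power-seo/redirects';
import { rules } from './redirects.config';

const nextConfig: NextConfig = {
  async redirects() {
    return toNextRedirects(rules);
  },
};

export default nextConfig;
```

The same rules work in Remix and Express through their own adapters. One source of truth. No duplication. No drift between environments.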
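To make the rule syntax concrete, here's a sketch of how source patterns like `/old-products/:id` and `/sale/*` can be matched and turned into destinations. The pattern semantics are an assumption (`:name` matches one path segment, `*` matches the rest of the path), so check the package docs for its exact behavior. `Rule`, `compilePattern` and `resolveRedirect` are hypothetical names.

```typescript
// Hypothetical helpers for illustration; not the @power-seo/redirects API.
interface Rule {
  source: string;
  destination: string;
  statusCode: 301 | 302 | 307 | 308;
}

// Assumed semantics: ":name" matches exactly one segment; a "*" segment matches the rest.
function compilePattern(source: string): { re: RegExp; keys: string[] } {
  const keys: string[] = [];
  const pattern = source
    .split('/')
    .map((seg) => {
      if (seg.startsWith(':')) {
        keys.push(seg.slice(1));
        return '([^/]+)';
      }
      if (seg === '*') {
        keys.push('*');
        return '(.*)';
      }
      // Escape regex metacharacters in literal segments.
      return seg.replace(/[.*+?^${}()|[\]\\]/g, '\\$&');
    })
    .join('/');
  return { re: new RegExp(`^${pattern}$`), keys };
}

// Returns the first matching rule's resolved destination, or null if nothing matches.
function resolveRedirect(path: string, rules: Rule[]): { location: string; statusCode: number } | null {
  for (const rule of rules) {
    const { re, keys } = compilePattern(rule.source);
    const match = re.exec(path);
    if (!match) continue;
    let location = rule.destination;
    for (let i = 0; i < keys.length; i++) {
      const value = match[i + 1] ?? '';
      const placeholder = keys[i] === '*' ? '*' : `:${keys[i]}`;
      // A replacer function stops "$" sequences in the value from being interpreted.
      location = location.replace(placeholder, () => value);
    }
    return { location, statusCode: rule.statusCode };
  }
  return null;
}
```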

Find the Product Pages Nobody Links To

This is the SEO problem that most developers never think to look for.

An orphan page is a page with zero inbound internal links. Googlebot discovers most pages by following links from pages it already knows, starting from your homepage. If no other page links to a product, Google may crawl it rarely or never index it, even if it's correctly listed in your sitemap, because a page nothing links to looks unimportant.

On large e-commerce stores, orphan pages are incredibly common. Products get added to the catalog but nobody adds them to category pages or related product sections.
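Detecting orphans comes down to counting inbound internal links per page. Here's a minimal, dependency-free sketch of that idea. `PageLinks` and `findOrphans` are hypothetical names, not the @power-seo/links API, and the input assumes you've already crawled each page's outbound links.

```typescript
// Hypothetical helper for illustration; not the @power-seo/links API.
interface PageLinks {
  url: string;
  links: string[]; // outbound internal links found on the page
}

// Returns pages no other page links to. The entry URL (usually the homepage)
// is excluded and self-links are ignored. URLs are compared as-is, so
// normalize trailing slashes and query strings before calling this.
function findOrphans(pages: PageLinks[], entryUrl: string): string[] {
  const inbound = new Map<string, number>();
  for (const page of pages) inbound.set(page.url, 0);

  for (const page of pages) {
    // A Set so that several links from one page count once.
    for (const target of new Set(page.links)) {
      if (target !== page.url && inbound.has(target)) {
        inbound.set(target, (inbound.get(target) ?? 0) + 1);
      }
    }
  }

  const orphans: string[] = [];
  inbound.forEach((count, url) => {
    if (count === 0 && url !== entryUrl) orphans.push(url);
  });
  return orphans;
}
```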

@power-seo/links works like a React developer tool, but for your site's link structure. It builds a directed link graph in memory and surfaces all the orphan pages you didn't know you had.

```bash
npm install @power-seo/links
```

```typescript
import { buildLinkGraph, findOrphanPages } from '@power-seo/links';

const graph = buildLinkGraph([
  { url: 'https://example.com/', links: ['https://example.com/about', 'https://example.com/blog'] },
  { url: 'https://example.com/about', links: ['https://example.com/'] },
  { url: 'https://example.com/blog', links: ['https://example.com/'] },
  { url: 'https://example.com/orphan-product', links: [] },
]);

const orphans = findOrphanPages(graph);
// [{ url: 'https://example.com/orphan-product', outboundCount: 0 }]
```

Once you know which pages are orphans, you can use suggestLinks() to find existing pages that should be linking to them based on keyword and topic overlap:

```typescript
import { suggestLinks } from '@power-seo/links';

// `pages` is your crawled page list, in the input shape @power-seo/links expects.
const suggestions = suggestLinks(pages, { maxSuggestions: 3, minRelevance: 0.15 });

suggestions.forEach(({ from, to, anchorText, relevanceScore }) => {
  console.log(`Link from ${from} to ${to}`);
  console.log(`  Suggested anchor: "${anchorText}" (score: ${relevanceScore.toFixed(2)})`);
});
```

No external NLP library required. Pure TypeScript computation.
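I don't know exactly how suggestLinks() scores relevance, so here's a stand-in that shows the general idea: reduce each page to a set of keywords and rank candidate source pages by Jaccard similarity (shared terms divided by total distinct terms). The stopword list, the three-letter minimum and the 0.15 threshold are assumptions. `suggestSources` is a hypothetical helper.

```typescript
// Hypothetical helper for illustration; not the @power-seo/links scoring.
interface PageText {
  url: string;
  text: string;
}

interface LinkSuggestion {
  from: string;
  to: string;
  score: number;
}

const STOPWORDS = new Set(['the', 'and', 'for', 'with', 'from', 'this', 'that']);

// Lowercase keyword set: words longer than two letters that aren't stopwords.
function terms(text: string): Set<string> {
  return new Set(
    text.toLowerCase().split(/[^a-z0-9]+/).filter((w) => w.length > 2 && !STOPWORDS.has(w)),
  );
}

// Jaccard similarity: |A ∩ B| / |A ∪ B|
function jaccard(a: Set<string>, b: Set<string>): number {
  let shared = 0;
  a.forEach((t) => {
    if (b.has(t)) shared++;
  });
  const union = a.size + b.size - shared;
  return union === 0 ? 0 : shared / union;
}

// Ranks other pages as candidate link sources for `target` (e.g. an orphan product).
function suggestSources(target: PageText, pages: PageText[], minScore = 0.15, max = 3): LinkSuggestion[] {
  const targetTerms = terms(target.text);
  return pages
    .filter((p) => p.url !== target.url)
    .map((p) => ({ from: p.url, to: target.url, score: jaccard(terms(p.text), targetTerms) }))
    .filter((s) => s.score >= minScore)
    .sort((x, y) => y.score - x.score)
    .slice(0, max);
}
```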

Measure Whether Any of This Actually Moves the Needle

Here's something I've started being very direct about with my clients and my team.

We need to know whether improving SEO scores increases organic traffic. Not based on industry averages. Based on our own data.

@power-seo/analytics computes a Pearson correlation coefficient between your audit scores and your click counts from Google Search Console. It tells you empirically whether there's a relationship on your specific site.

```bash
npm install @power-seo/analytics
```

```typescript
import { mergeGscWithAudit, correlateScoreAndTraffic } from '@power-seo/analytics';

// gscPages (Search Console page data) and auditResults (your audit output) are placeholders.
const insights = mergeGscWithAudit(gscPages, auditResults);
const result = correlateScoreAndTraffic(insights);

console.log(`Pearson r: ${result.correlation.toFixed(3)}`);
// e.g. 0.741 (strong positive correlation)

if (result.correlation > 0.5) {
  console.log('Strong positive correlation: pages with higher audit scores tend to get more clicks');
}
```

It merges GSC data with audit results by normalized URL and runs the math. You get a number you can show to stakeholders. Not a vague claim about best practices. Actual evidence from your own site. A correlation alone doesn't prove that raising scores caused the extra clicks, but tracked over time, it shows whether your SEO work and your traffic are moving together.
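For anyone curious about the math, Pearson's r is the covariance of the two series divided by the product of their standard deviations. Here's a minimal sketch; it assumes the scores and clicks have already been paired up by URL (the merge step above), and `pearson` is a hypothetical helper rather than the @power-seo/analytics implementation.

```typescript
// Hypothetical helper for illustration; not the @power-seo/analytics implementation.
// Pearson correlation of two equal-length series, paired by index.
// Returns NaN when either series has zero variance.
function pearson(xs: number[], ys: number[]): number {
  if (xs.length !== ys.length || xs.length < 2) {
    throw new Error('need two equal-length series with at least 2 points');
  }
  const n = xs.length;
  const meanX = xs.reduce((s, v) => s + v, 0) / n;
  const meanY = ys.reduce((s, v) => s + v, 0) / n;

  let cov = 0;
  let varX = 0;
  let varY = 0;
  for (let i = 0; i < n; i++) {
    const dx = xs[i] - meanX;
    const dy = ys[i] - meanY;
    cov += dx * dy;
    varX += dx * dx;
    varY += dy * dy;
  }
  // The 1/n factors cancel, so sums of squares can be used directly.
  return cov / Math.sqrt(varX * varY);
}

// Usage: audit scores and GSC clicks for the same four URLs, in the same order.
const r = pearson([40, 55, 70, 85], [120, 180, 260, 300]);
console.log(r.toFixed(3));
```

r ranges from -1 to 1 and only measures linear association, which is another reason to read it as a signal rather than proof.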

For week-over-week position tracking:

```typescript
import { trackPositionChanges } from '@power-seo/analytics';

// currentSnapshot and previousSnapshot are placeholders for two position snapshots,
// e.g. this week's and last week's Search Console query data.
const changes = trackPositionChanges(currentSnapshot, previousSnapshot);

changes.forEach(({ query, previousPosition, currentPosition, change }) => {
  const direction = change > 0 ? '↑' : change < 0 ? '↓' : '→';
  console.log(`${direction} "${query}": ${previousPosition} → ${currentPosition}`);
});
```

Clean diff. Every tracked query. Before and after.
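Here's a sketch of the same kind of diff computed by hand. It assumes each snapshot is a list of query/average-position pairs and defines `change` as previous minus current, so a positive number means the query moved up toward position 1. I haven't confirmed that trackPositionChanges() uses the same sign convention. `diffPositions` is a hypothetical helper.

```typescript
// Hypothetical helper for illustration; not the @power-seo/analytics API.
interface QueryPosition {
  query: string;
  position: number; // average position; 1 = top of the results
}

interface PositionChange {
  query: string;
  previousPosition: number;
  currentPosition: number;
  change: number; // previous - current: positive = moved up
}

function diffPositions(current: QueryPosition[], previous: QueryPosition[]): PositionChange[] {
  const prev = new Map(previous.map((p) => [p.query, p.position] as const));
  const changes: PositionChange[] = [];
  for (const { query, position } of current) {
    const before = prev.get(query);
    if (before === undefined) continue; // new query, nothing to compare against
    changes.push({ query, previousPosition: before, currentPosition: position, change: before - position });
  }
  // Biggest movers first, in either direction.
  return changes.sort((a, b) => Math.abs(b.change) - Math.abs(a.change));
}
```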

Scale Meta Descriptions With an AI SEO Tool

Writing unique, keyword-focused meta descriptions for thousands of product pages manually is not realistic. Most teams either skip it or copy-paste the same template across everything. Neither option is good.

@power-seo/ai is a proper AI SEO tool built for exactly this workflow. It builds the prompts. You call whatever LLM you're already using. It parses the response back into structured data with character count and pixel width validation built in.

```bash
npm install @power-seo/ai
```

```typescript
import { buildMetaDescriptionPrompt, parseMetaDescriptionResponse } from '@power-seo/ai';
import Anthropic from '@anthropic-ai/sdk';

const prompt = buildMetaDescriptionPrompt({
  title: 'Premium Widget',
  content: 'Our best-selling widget with free shipping...',
  focusKeyphrase: 'premium widget',
});

const anthropic = new Anthropic({ apiKey: process.env.ANTHROPIC_API_KEY });

const claudeResponse = await anthropic.messages.create({
  model: 'claude-opus-4-6',
  system: prompt.system,
  messages: [{ role: 'user', content: prompt.user }],
  max_tokens: prompt.maxTokens,
});

const result = parseMetaDescriptionResponse(
  claudeResponse.content[0].type === 'text' ? claudeResponse.content[0].text : '',
);

console.log(`"${result.description}" (${result.charCount} chars, ~${result.pixelWidth}px)`);
console.log(`Valid: ${result.isValid}`);
```

The prompt builder returns { system, user, maxTokens }. You pass that to your preferred LLM. You get back raw text. The parser turns it into a typed result with character count, pixel width, and a validity flag.

It works identically with OpenAI, Gemini, Mistral, or any other provider. No vendor lock-in. No bundled SDK.
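The parsing step is where much of the value sits, since raw LLM replies often arrive with quotes, labels or stray whitespace. Here's a minimal sketch of that cleanup plus a length check against the 120 to 160 character range from earlier. `parseDescription` is a hypothetical helper, not the @power-seo/ai parser, and it skips pixel-width estimation because that depends on font metrics.

```typescript
// Hypothetical helper for illustration; not the @power-seo/ai parser.
interface ParsedDescription {
  description: string;
  charCount: number; // UTF-16 code units, as reported by String.length
  isValid: boolean;
}

// Cleans a raw LLM reply: trims it, drops a leading "Meta description:" label,
// strips wrapping straight or curly quotes, and collapses internal whitespace.
// Validity only checks the character range; pixel width is not estimated.
function parseDescription(raw: string, min = 120, max = 160): ParsedDescription {
  const description = raw
    .trim()
    .replace(/^meta description:\s*/i, '')
    .replace(/^["'“”]+|["'“”]+$/g, '')
    .replace(/\s+/g, ' ')
    .trim();
  const charCount = description.length;
  return { description, charCount, isValid: charCount >= min && charCount <= max };
}
```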

React SEO at scale becomes manageable when you automate the repetitive parts like this. You focus your human attention on strategy and content quality. The AI handles the mechanical generation work.

E-commerce SEO Checklist to Use on Every Project

I want to leave you with something practical you can actually use today.

One of the best things about the @power-seo ecosystem is that these are genuinely affordable e-commerce SEO audit solutions. Every package is open source, independently installable, and built to replace expensive third-party tools with code you actually own and control. These e-commerce SEO packages give small teams and solo developers access to the same systematic tooling that large engineering teams build internally.

Here's the full e-commerce SEO checklist I run through on every project:

- Meta layer: every product page gets a unique title, description, canonical, and robots configuration through @power-seo/meta.
- Structured data: Product schema with offers and aggregate rating on every product page through @power-seo/schema, with validation running in CI to block missing required fields.
- Image SEO: alt text audit, CWV-aware lazy loading check, format recommendations, and a separate image sitemap, all handled through @power-seo/images.
- Sitemap: all product and category URLs in a valid sitemap, automatically split into chunks when the catalog exceeds 50,000 URLs, through @power-seo/sitemap.
- Content quality: word count, keyphrase density, and heading structure checked on every page through @power-seo/content-analysis, with CI blocking thin content.
- Redirects: every changed or removed product URL gets a 301 redirect defined in one shared file and applied through @power-seo/redirects.
- Internal links: orphan page detection and keyword-overlap-based link suggestions run regularly through @power-seo/links.
- Measurement: GSC data merged with audit scores, Pearson correlation computed, and position changes tracked through @power-seo/analytics.
- AI scaling: bulk meta description generation for the full product catalog through @power-seo/ai.

E-commerce SEO: Before & After Using Power SEO

When I first launched my React store, I thought a fast SPA was enough. But I quickly realized that search-engine-friendly e-commerce requires more than a working UI and checkout. Using the right e-commerce SEO packages can turn a struggling store into one that ranks, gets indexed, and shows rich results.

Here's a quick before-and-after comparison, scoring each area out of 10:

| SEO Area | Before Power SEO (Score /10) | After Power SEO (Score /10) |
|---|---|---|
| Meta Tags | 4 | 9 |
| SSR / Rendering | 3 | 10 |
| Structured Data | 2 | 9 |
| Image SEO | 3 | 8 |
| Sitemaps | 5 | 10 |
| Content Quality | 4 | 9 |
| Redirects | 3 | 9 |
| Internal Links | 2 | 8 |
| Measurement | 2 | 9 |
| AI Scaling | 1 | 9 |

Final Thought

React and SEO are not enemies. They never were. But getting them to work well together takes deliberate, systematic effort. It doesn't happen by accident.

The common e-commerce SEO mistakes I described in this article (missing meta tags, broken structured data, orphan pages, lazy loading errors) are all fixable. They're fixable systematically, and they're fixable once, in a way that keeps working for every future page you publish.

The good news is that the tooling for this has genuinely matured. You don't have to build these systems from scratch anymore. You don't have to figure out the pixel width of a title tag or write your own sitemap splitter or reverse-engineer how Google validates structured data.

The @power-seo ecosystem covers nearly every SEO concern specific to React e-commerce applications. Every one of those 17 packages is independently installable. You take what you need and leave the rest.

You can find everything at Power SEO.

My honest advice: start with meta tags and structured data. Those two give you the fastest visible wins. Then work through the checklist systematically. Run your audits in CI. Measure the impact in your actual traffic data. Iterate based on what the numbers show.

SEO is not a one-time task you check off. It's a system you build once and let run continuously. Put in the work to build that system properly, and it pays you back every single month in organic traffic you didn't have to pay for.

Frequently Asked Questions About E-commerce SEO

- Do I really need SSR for SEO?

In most cases, yes. Server-rendered HTML means Google sees your content immediately; client-only SPAs are unreliable for indexing.

- Can I use pre-rendering instead of SSR?

Static generation works for stable products, but dynamic inventory or frequent updates benefit from SSR.

- Why aren't meta tags showing in Google?

Google may not render JS immediately, or pages might have missing canonicals, duplicate titles, or robots directives that block indexing. Google also rewrites descriptions it considers a poor match for the search query.

- How do I handle thousands of product meta descriptions?

Use AI-assisted generation and enforce length, pixel width, and keyword rules.

- How do I know if structured data is working?

Validate in CI and check with Google's Rich Results Test and Search Console.

- How do I detect orphan pages?

Use a link graph audit to find pages with zero inbound internal links.
