SEO Mistakes: Fixed Using the Power SEO Toolkit

Mitu Das


I've seen it happen more times than I can count. You build a technically solid website. Your content is well-written. Your design looks great on every screen. Yet you're not ranking. Or worse, you're ranking for the wrong things, drawing the wrong visitors, or watching pages disappear from Google's index entirely.

The frustrating truth I've learned is that most SEO mistakes aren't caused by one catastrophic error. They come from a lack of a clear SEO strategy and a cluster of smaller, interconnected problems that compound over time. Short descriptions with no keywords, missing alt text, orphaned pages no crawler can find, content that doesn't match user intent. Each SEO mistake individually might cost you a few positions. Together, they represent the top SEO mistakes to avoid if you want your site to stay visible.

In this article, I want to walk you through every one of these SEO mistakes and show you exactly how to fix them using the @power-seo toolkit, a suite of 17 independently installable TypeScript packages built for programmatic, production-grade SEO.

Whether you're running a Next.js app, a Remix site, a headless CMS, or a CI/CD pipeline, these fixes are actionable and code-level. No vague advice from me, I promise. If you're looking for a practical starting point, think of this as a free JavaScript SEO checklist you can implement.

Most Common SEO Mistakes and How to Fix Them

Before I get into the specifics, here's what I've noticed after auditing dozens of sites: most of these SEO mistakes don't show up in isolation. Poor crawlability goes hand in hand with orphan pages. Orphan pages compound duplicate content problems. Duplicate content splits the authority your target pages need to rank for their keywords. Understanding how these SEO mistakes connect to each other is what separates a one-time fix from a permanent solution.

SEO Mistake 1: Ignoring Technical SEO and Poor Crawlability

Of all the SEO mistakes I see, neglecting technical SEO is the one that makes all your other work irrelevant the fastest. It's the infrastructure everything else sits on. Poor crawlability, broken canonical tags, and missing structured data can make high-quality content completely invisible to search engines. When you're ignoring technical SEO, you're essentially building on sand. The first thing I tell anyone who asks me where to start is: address crawl errors and technical issues before you touch anything else.

Poor Crawlability and Missing Sitemaps

If Googlebot can't find your pages, they don't rank. A missing or malformed XML sitemap is one of the most common silent killers of organic traffic I come across. Poor crawlability is often invisible until you check for it deliberately, which is exactly why so many sites suffer from this particular SEO mistake for months or even years.

The fix I recommend: Use @power-seo/sitemap to generate a standards-compliant sitemap automatically.

import { generateSitemap } from '@power-seo/sitemap';

const xml = generateSitemap({
  hostname: 'https://example.com',
  urls: [
    { loc: '/', lastmod: '2026-01-01', changefreq: 'daily', priority: 1.0 },
    { loc: '/blog/react-seo-guide', lastmod: '2026-01-15', priority: 0.8 },
    { loc: '/products/widget', changefreq: 'weekly', priority: 0.7 },
  ],
});

For large catalogs that exceed the 50,000-URL spec limit, splitSitemap() handles automatic chunking and generates a sitemap index file with no manual management required.
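The chunking idea is easy to sketch without any library. This is a minimal, dependency-free illustration, not the `splitSitemap()` implementation. The names `buildUrlset` and `splitIntoSitemaps` and the `sitemap-N.xml` file naming are all hypothetical.

```typescript
// Hypothetical sketch (not the @power-seo/sitemap API): split URLs into sitemap
// files of at most maxPerFile entries, plus a sitemap index that lists every file.
interface SitemapUrl {
  loc: string; // absolute URL
  lastmod?: string; // W3C date, e.g. '2026-01-15'
}

// sitemaps.org caps each file at 50,000 URLs (and 50MB uncompressed, not checked here).
const MAX_URLS_PER_SITEMAP = 50000;

// Escape XML special characters so URLs containing '&' stay well-formed.
function escapeXml(value: string): string {
  return value
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&apos;');
}

function buildUrlset(urls: SitemapUrl[]): string {
  const entries = urls
    .map((u) => {
      const lastmod = u.lastmod ? `<lastmod>${escapeXml(u.lastmod)}</lastmod>` : '';
      return `  <url><loc>${escapeXml(u.loc)}</loc>${lastmod}</url>`;
    })
    .join('\n');
  return (
    '<?xml version="1.0" encoding="UTF-8"?>\n' +
    `<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">\n${entries}\n</urlset>`
  );
}

function splitIntoSitemaps(
  urls: SitemapUrl[],
  baseUrl: string,
  maxPerFile: number = MAX_URLS_PER_SITEMAP,
): { files: Map<string, string>; index: string } {
  const files = new Map<string, string>();
  for (let i = 0; i < urls.length; i += maxPerFile) {
    const name = `sitemap-${files.size + 1}.xml`;
    files.set(name, buildUrlset(urls.slice(i, i + maxPerFile)));
  }
  // The index points crawlers at each chunk file.
  const refs = Array.from(files.keys())
    .map((name) => `  <sitemap><loc>${escapeXml(`${baseUrl}/${name}`)}</loc></sitemap>`)
    .join('\n');
  const index =
    '<?xml version="1.0" encoding="UTF-8"?>\n' +
    `<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">\n${refs}\n</sitemapindex>`;
  return { files, index };
}
```

With the default limit, 120,000 URLs would produce three files (50,000, 50,000, and 20,000 entries) plus one index. The index is the only URL you submit to Search Console.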

Broken or Inconsistent Redirect Rules

Redirect chains and missing 301s bleed link equity during site migrations. I see this SEO mistake happen a lot when teams define redirects in multiple places (next.config.js, a CMS, a CDN config). It leads to inconsistencies that are incredibly hard to audit. If you're not using tools for finding broken links regularly, you're flying blind.

The fix: Define your rules once with @power-seo/redirects and apply them to every framework from a single source of truth.

import { createRedirectEngine } from '@power-seo/redirects';

const engine = createRedirectEngine({
  rules: [
    { source: '/old-about', destination: '/about', statusCode: 301 },
    { source: '/blog/:slug', destination: '/articles/:slug', statusCode: 301 },
  ],
});

// Test in CI before deploying
const match = engine.match('/old-about');
// { resolvedDestination: '/about', statusCode: 301 }

The same rule array exports to Next.js (toNextRedirects), Remix (createRemixRedirectHandler), and Express (createExpressRedirectMiddleware), eliminating divergence across environments.
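Under the hood, a rule like `/blog/:slug` needs a pattern matcher. Catching redirect chains means following each destination until nothing else matches. The sketch below shows both ideas for rules shaped like the ones above. `matchRule` and `resolveChain` are hypothetical helpers, not @power-seo/redirects exports.

```typescript
// Hypothetical sketch: ':param' redirect matching plus chain and loop detection.
interface RedirectRule {
  source: string; // e.g. '/blog/:slug'
  destination: string; // e.g. '/articles/:slug'
  statusCode: 301 | 302 | 307 | 308;
}

// Turn '/blog/:slug' into ^/blog/([^/]+)$ and remember the parameter names.
function compile(pattern: string): { regex: RegExp; names: string[] } {
  const names: string[] = [];
  const body = pattern
    .split('/')
    .map((segment) => {
      if (segment.startsWith(':')) {
        names.push(segment.slice(1));
        return '([^/]+)';
      }
      return segment.replace(/[.*+?^${}()|[\]\\]/g, '\\$&'); // escape regex metacharacters
    })
    .join('/');
  return { regex: new RegExp(`^${body}$`), names };
}

function matchRule(path: string, rules: RedirectRule[]): { destination: string; statusCode: number } | null {
  for (const rule of rules) {
    const { regex, names } = compile(rule.source);
    const m = regex.exec(path);
    if (!m) continue;
    // Substitute captured values into ':name' placeholders (function replacer avoids '$' surprises).
    let destination = rule.destination;
    names.forEach((name, i) => {
      destination = destination.replace(`:${name}`, () => m[i + 1] ?? '');
    });
    return { destination, statusCode: rule.statusCode };
  }
  return null;
}

// Follow redirects hop by hop; more than one hop is a chain worth flattening.
function resolveChain(path: string, rules: RedirectRule[], maxHops = 10): { hops: string[]; loop: boolean } {
  const hops = [path];
  let current = path;
  for (let i = 0; i < maxHops; i++) {
    const next = matchRule(current, rules);
    if (!next) return { hops, loop: false };
    if (hops.includes(next.destination)) return { hops: [...hops, next.destination], loop: true };
    hops.push(next.destination);
    current = next.destination;
  }
  return { hops, loop: false }; // gave up after maxHops
}
```

In CI, you could fail the build whenever `resolveChain` returns more than two hops or reports a loop.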

SEO Mistake 2: Neglecting Meta Tags and SERP Presentation

Neglecting meta tags is one of those SEO mistakes that feels minor until you realize how much it's costing you. A page with no meta description, a title that gets cut off in search results, or an Open Graph image with the wrong dimensions will underperform even when it ranks. These are signals both to search engines and to real users deciding whether to click. I can't stress this enough.

Short Descriptions with No Keywords

Google generates its own snippet when a meta description is absent, and in my experience, it rarely picks the right one. Short descriptions with no keywords are just as bad as no description at all. This is one of the most overlooked SEO mistakes because it's so easy to fix and yet so consistently ignored. A well-crafted description that includes your focus keyphrase and a clear call to action can meaningfully improve click-through rate.

The fix: Use @power-seo/meta to generate framework-correct meta tags from one unified config.

// Next.js App Router
import { createMetadata } from '@power-seo/meta';

export const metadata = createMetadata({
  title: 'React SEO Best Practices: 2026 Guide',
  description:
    'Learn the most common SEO mistakes in React apps and how to fix them with structured data, meta tags, and Core Web Vitals improvements.',
  canonical: 'https://example.com/blog/react-seo',
  robots: { index: true, follow: true, maxSnippet: 150, maxImagePreview: 'large' },
  openGraph: {
    type: 'article',
    images: [{ url: 'https://example.com/og/react-seo.jpg', width: 1200, height: 630 }],
  },
});

The same SeoConfig object works for Remix (createMetaDescriptors) and generic SSR (createHeadTags), so you write the configuration once and get the correct output for every framework.
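For the generic SSR case, the core job is turning one config into correctly escaped `<meta>` and `<link>` tags. Here is a minimal sketch of that idea. The `HeadConfig` shape and `renderHeadTags` function are hypothetical, and they cover far less than a full library would.

```typescript
// Hypothetical sketch: render one SEO config into HTML head tags for any SSR setup.
interface HeadConfig {
  title: string;
  description: string;
  canonical: string;
  robots?: { index: boolean; follow: boolean };
  ogImage?: { url: string; width: number; height: number };
}

// Escape characters that would break out of an attribute value or text node.
function escapeHtml(value: string): string {
  return value
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;');
}

function renderHeadTags(config: HeadConfig): string {
  const tags = [
    `<title>${escapeHtml(config.title)}</title>`,
    `<meta name="description" content="${escapeHtml(config.description)}">`,
    `<link rel="canonical" href="${escapeHtml(config.canonical)}">`,
    `<meta property="og:title" content="${escapeHtml(config.title)}">`,
    `<meta property="og:description" content="${escapeHtml(config.description)}">`,
    `<meta property="og:url" content="${escapeHtml(config.canonical)}">`,
  ];
  if (config.robots) {
    const directives = [
      config.robots.index ? 'index' : 'noindex',
      config.robots.follow ? 'follow' : 'nofollow',
    ];
    tags.push(`<meta name="robots" content="${directives.join(', ')}">`);
  }
  if (config.ogImage) {
    tags.push(
      `<meta property="og:image" content="${escapeHtml(config.ogImage.url)}">`,
      `<meta property="og:image:width" content="${config.ogImage.width}">`,
      `<meta property="og:image:height" content="${config.ogImage.height}">`,
    );
  }
  return tags.join('\n');
}
```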

SERP Title Truncation

A title that exceeds Google's roughly 580px display width gets cut off, losing your keyword and your brand. Character count alone is unreliable because different characters have different widths. I've watched perfectly good titles get butchered in the SERPs because of this SEO mistake.

The fix: Use @power-seo/preview to validate pixel-accurate truncation before publishing.

import { generateSerpPreview } from '@power-seo/preview';

const serp = generateSerpPreview({
  title: 'How to Fix Every Major SEO Mistake in 2026',
  description: 'A practical guide to auditing and correcting the most damaging SEO errors.',
  url: 'https://example.com/blog/seo-mistakes',
  siteTitle: 'Power SEO',
});

console.log(serp.titleTruncated); // false - safe to publish
console.log(serp.displayUrl); // 'example.com › blog › seo-mistakes'

I recommend using this check in CI to automatically block deployments where auto-generated titles for programmatic pages are too long.
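To see why character count misleads, here is a rough sketch that sums approximate per-character widths. The width table is a made-up assumption for illustration only, not Google's font metrics. Real tools measure the rendered font instead, for example with a canvas `measureText` call.

```typescript
// Hypothetical sketch: estimate SERP title width from rough per-character widths.
// The pixel values below are illustrative guesses, NOT Google's actual font metrics.
const TITLE_LIMIT_PX = 580;

function charWidth(ch: string): number {
  if (' .,:;!|\'ijlIft'.includes(ch)) return 5; // narrow glyphs
  if ('mwMW'.includes(ch)) return 16; // widest glyphs
  if (ch >= 'A' && ch <= 'Z') return 13; // other capitals
  return 10; // typical lowercase letters, digits, other punctuation
}

function estimateTitleWidth(title: string): number {
  let total = 0;
  for (const ch of title) total += charWidth(ch);
  return total;
}

function isLikelyTruncated(title: string, limitPx: number = TITLE_LIMIT_PX): boolean {
  return estimateTitleWidth(title) > limitPx;
}
```

Under these assumed widths, a 100-character title made of narrow letters fits, while a 40-character title of capital Ws does not. That gap is exactly what a character-count rule misses.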

SEO Mistake 3: Poor Keyword Research, Keyword Stuffing, and Over-Optimization

Poor keyword research is where a huge number of SEO efforts fall apart before they even begin, and it's one of the SEO mistakes I see most consistently across all site types. When you fail to research and use the right keywords, you end up targeting the wrong keywords entirely, ranking for irrelevant terms that bring visitors who immediately bounce, or jumping straight to keywords without thinking about what people actually want to find.

At the other end of the spectrum, once someone does find their keywords, I often see them overcorrect into keyword stuffing and over-optimization. That is its own serious SEO mistake: keyword stuffing violates Google's spam policies and can get pages demoted. The goal is balance: the right keywords, used naturally, on pages built around genuine user intent.

Misaligning Content with Search Intent

Misaligning content with search intent is one of the costliest SEO mistakes you can make. It means focusing on keywords without understanding search intent, and that disconnect destroys engagement. Ranking for "buy running shoes" when your page is a buying guide creates a mismatch: visitors bounce immediately, sending a negative engagement signal to Google. Content that doesn't match user intent will always underperform, no matter how technically well-optimized it looks on paper.
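A crude way to catch the worst mismatches is to compare the intent implied by a query's modifier words with the type of page you built. The sketch below is a keyword-modifier heuristic. The word lists and page types are hypothetical, and real intent analysis usually starts from what already ranks in the SERP.

```typescript
// Hypothetical sketch: flag query/page intent mismatches using simple modifier lists.
type Intent = 'transactional' | 'informational' | 'unknown';
type PageType = 'product' | 'category' | 'article' | 'guide';

// Illustrative word lists only; tune them for your niche.
const TRANSACTIONAL = ['buy', 'price', 'cheap', 'deal', 'discount', 'order', 'coupon'];
const INFORMATIONAL = ['how', 'what', 'why', 'guide', 'vs', 'review', 'tips'];

function classifyQuery(query: string): Intent {
  const words = query.toLowerCase().trim().split(/\s+/);
  if (words.some((w) => TRANSACTIONAL.includes(w))) return 'transactional';
  if (words.some((w) => INFORMATIONAL.includes(w))) return 'informational';
  return 'unknown';
}

function isIntentMismatch(query: string, pageType: PageType): boolean {
  const intent = classifyQuery(query);
  // Someone ready to buy who lands on a long-form guide is likely to bounce.
  if (intent === 'transactional') return pageType === 'article' || pageType === 'guide';
  // Someone researching who lands on a bare product page is likely to bounce too.
  if (intent === 'informational') return pageType === 'product';
  return false; // not enough signal to judge
}
```

Applied to the example above, `isIntentMismatch('buy running shoes', 'guide')` returns `true`.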

AI-Assisted Meta-Generation

When you're managing hundreds of programmatic pages, writing unique, intent-aligned meta descriptions manually isn't feasible. I've found that LLM-generated suggestions, reviewed before publishing, solve this at scale and eliminate the SEO mistake of publishing identical or near-identical descriptions across hundreds of URLs.

The fix: Use @power-seo/ai to build provider-agnostic prompts and parse structured results.

import { buildMetaDescriptionPrompt, parseMetaDescriptionResponse } from '@power-seo/ai';
import Anthropic from '@anthropic-ai/sdk';

const prompt = buildMetaDescriptionPrompt({
  title: 'Best Running Shoes for Beginners',
  content: 'Full article text...',
  focusKeyphrase: 'running shoes for beginners',
});

const anthropic = new Anthropic({ apiKey: process.env.ANTHROPIC_API_KEY });

// Top-level await: run this in an ES module or inside an async function
const response = await anthropic.messages.create({
  model: 'claude-opus-4-6',
  system: prompt.system,
  messages: [{ role: 'user', content: prompt.user }],
  max_tokens: prompt.maxTokens,
});

// Only text blocks carry the generated description; fall back to an empty string otherwise
const result = parseMetaDescriptionResponse(
  response.content[0].type === 'text' ? response.content[0].text : '',
);

console.log(`"${result.description}" (${result.charCount} chars)`);
console.log(`Valid: ${result.isValid}`);

The same prompt builders work identically with OpenAI, Google Gemini, Mistral, or any other provider. No vendor lock-in, no bundled SDK.
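Before you publish generated descriptions in bulk, check that they aren't near-copies of each other, since duplicate descriptions are the very problem generation was meant to solve. Here is a minimal sketch using Jaccard similarity over word sets. The 0.8 threshold and the `findNearDuplicates` helper are assumptions you would tune, not part of @power-seo/ai.

```typescript
// Hypothetical sketch: flag pairs of meta descriptions that are near-duplicates.
// Jaccard similarity = |shared words| / |all distinct words across both descriptions|.
function wordSet(text: string): Set<string> {
  return new Set<string>(text.toLowerCase().match(/[a-z0-9']+/g) ?? []);
}

function jaccard(a: Set<string>, b: Set<string>): number {
  if (a.size === 0 && b.size === 0) return 1;
  let shared = 0;
  a.forEach((w) => {
    if (b.has(w)) shared++;
  });
  return shared / (a.size + b.size - shared);
}

interface PageDescription {
  url: string;
  description: string;
}

function findNearDuplicates(pages: PageDescription[], threshold = 0.8): Array<[string, string, number]> {
  const sets = pages.map((p) => wordSet(p.description));
  const pairs: Array<[string, string, number]> = [];
  // O(n^2) pairwise comparison: fine for hundreds of pages, too slow for millions.
  for (let i = 0; i < pages.length; i++) {
    for (let j = i + 1; j < pages.length; j++) {
      const score = jaccard(sets[i], sets[j]);
      if (score >= threshold) pairs.push([pages[i].url, pages[j].url, score]);
    }
  }
  return pairs;
}
```

Two descriptions that differ by a single word, such as "red" versus "blue" in an otherwise identical 12-word sentence, score 11/13 (about 0.85) and get flagged.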

SEO Mistake 4: Poor Content Quality, Thin Pages, and Ignoring Content Updates

Word count alone doesn't make content valuable, but pages under 300 words rarely satisfy the depth of intent behind most search queries. This is a common SEO mistake because thin content is easy to publish quickly and easy to forget about afterward. More damaging is content that technically discusses a topic but scores poorly on keyphrase distribution, heading structure, and readability.

And one thing I see people forget constantly is that publishing is not the finish line. Ignoring content updates is a slow bleed and an SEO mistake that compounds over time. Pages that were ranking well a year ago can drop significantly if the content hasn't been refreshed to reflect current information, especially in fast-moving industries. To focus on E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness), your content needs to demonstrate genuine depth and currency. Stale content signals the opposite.

Running a Yoast-Style Content Audit Programmatically

The fix: Use @power-seo/content-analysis for a full content quality score, comparable to Yoast SEO but available as a TypeScript library that works anywhere. Not using an SEO plugin or equivalent programmatic tooling is itself an SEO mistake, because you lose the automated safety net that catches issues before they go live.

import { analyzeContent } from '@power-seo/content-analysis';

// pageHtml is assumed to hold the page's rendered HTML
const output = analyzeContent({
  title: 'SEO Mistakes Every Developer Should Fix',
  metaDescription:
    'A developer-focused guide to the most common SEO errors and how to fix them with code.',
  focusKeyphrase: 'seo mistakes',
  content: pageHtml,
});

console.log(output.score); // e.g. 42
console.log(output.maxScore); // e.g. 55

// Report every check that came back 'poor'
const failures = output.results.filter((r) => r.status === 'poor');
failures.forEach((r) => console.error(`✗ ${r.description}`));

This runs 13 checks: keyphrase density, keyphrase distribution across headings and intro, title and meta description presence and length, heading hierarchy, word count, image alt text, and internal/external link presence. I use it in CI to gate content publication.
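Keyphrase density is the simplest of those checks to reason about. One common definition is keyphrase occurrences divided by total word count. Other tools weight multi-word phrases differently. The sketch below uses that definition with a 0.5 to 2.5% target band, and `keyphraseDensity` and `densityStatus` are hypothetical helpers, not the library's implementation.

```typescript
// Hypothetical sketch: keyphrase density = occurrences / total words * 100.
function tokenize(text: string): string[] {
  // Naively strip HTML tags so only visible words are counted.
  return text
    .replace(/<[^>]*>/g, ' ')
    .toLowerCase()
    .match(/[a-z0-9']+/g) ?? [];
}

function keyphraseDensity(text: string, keyphrase: string): { occurrences: number; words: number; density: number } {
  const words = tokenize(text);
  const phrase = tokenize(keyphrase);
  let occurrences = 0;
  if (phrase.length > 0) {
    // Slide over the token list and count exact multi-word matches.
    for (let i = 0; i + phrase.length <= words.length; i++) {
      if (phrase.every((p, k) => words[i + k] === p)) occurrences++;
    }
  }
  const density = words.length === 0 ? 0 : (occurrences / words.length) * 100;
  return { occurrences, words: words.length, density };
}

function densityStatus(density: number): 'low' | 'ok' | 'high' {
  if (density < 0.5) return 'low';
  if (density > 2.5) return 'high';
  return 'ok';
}
```

A seven-word snippet that uses "SEO mistakes" twice comes out at roughly 28.6%. That is far above the band and reads as stuffing.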

Readability: When Content Is Too Complex for Your Audience

Content that reads at a college level when your audience expects plain English will generate high bounce rates, especially on mobile where reading longer sentences is more demanding. This is also a user experience problem, and ignoring user experience is something Google increasingly penalizes through engagement signals. I've seen this particular SEO mistake tank perfectly optimized pages.

The fix: Use @power-seo/readability to score content across five algorithms.

import { analyzeReadability } from '@power-seo/readability';

// articleHtml is assumed to hold the article's rendered HTML
const result = analyzeReadability({ text: articleHtml });

console.log(result.fleschReadingEase.score); // Target: 60-70 for web content
console.log(result.overall.status); // 'good' | 'improvement' | 'error'

if (result.overall.status === 'error') {
  console.error('Content is too complex:', result.overall.message);
  process.exit(1); // Block deploy in CI
}
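For reference, the Flesch Reading Ease score behind that 60-70 target is 206.835 - 1.015 × (words / sentences) - 84.6 × (syllables / words). The sketch below implements that formula with a naive vowel-group syllable counter. The counter is an approximation, and dictionary-based counters are more accurate.

```typescript
// Sketch: Flesch Reading Ease with a heuristic (approximate) syllable counter.
function countSyllables(word: string): number {
  const w = word.toLowerCase().replace(/[^a-z]/g, '');
  if (w.length === 0) return 0;
  if (w.length <= 3) return 1;
  // Drop a common silent ending ('e', 'es', 'ed' after a consonant), then count vowel groups.
  const trimmed = w.replace(/(?:[^laeiouy]es|[^laeiouy]ed|[^laeiouy]e)$/, '').replace(/^y/, '');
  const groups = trimmed.match(/[aeiouy]{1,2}/g);
  return Math.max(1, groups ? groups.length : 0);
}

function fleschReadingEase(text: string): number {
  const plain = text.replace(/<[^>]*>/g, ' '); // ignore HTML tags
  const sentences = plain.split(/[.!?]+/).filter((s) => s.trim().length > 0).length;
  const words: string[] = plain.match(/[A-Za-z']+/g) ?? [];
  if (sentences === 0 || words.length === 0) return 0;
  const syllables = words.reduce((sum, w) => sum + countSyllables(w), 0);
  return 206.835 - 1.015 * (words.length / sentences) - 84.6 * (syllables / words.length);
}
```

Short sentences of one-syllable words push the score above 100. A single sentence stacked with words like "interdepartmental" drops it far below zero.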

SEO Mistake 5: Duplicate Content and Missing Canonical Tags

Duplicate content is one of those SEO mistakes that quietly dilutes everything you've built. When the same content appears at multiple URLs (with and without trailing slashes, HTTP vs HTTPS, www vs non-www, or via pagination), search engines split ranking signals across those URLs instead of consolidating them. This dilutes the authority of your best pages, and I see this SEO mistake on nearly every site I audit.

The fix: Canonical tags tell Google which URL is authoritative. Use @power-seo/core to validate and build canonical URLs correctly.

import { resolveCanonical, validateTitle, validateMetaDescription } from '@power-seo/core';

// Build one absolute canonical URL from the site origin and the page path
const canonical = resolveCanonical('https://example.com', '/blog/seo-guide');
// 'https://example.com/blog/seo-guide'

// Validate pixel-accurate SERP display before publishing
const titleCheck = validateTitle('SEO Mistakes: Fixed Using the Power SEO Toolkit');
console.log(titleCheck.valid); // true
console.log(titleCheck.pixelWidth); // ~382px - well under the 580px limit

const metaCheck = validateMetaDescription(
  'Discover the most damaging SEO mistakes and how to fix them with real code examples.',
);
console.log(metaCheck.severity); // 'info' - passes all checks
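Collapsing the URL variants listed above onto one form is easy to sketch with the standard WHATWG `URL` class. The policy here is an assumed house style: HTTPS, no `www`, no trailing slash, and no fragments or tracking parameters. Pick one convention and apply it everywhere. `normalizeCanonical` is a hypothetical helper, not `resolveCanonical`.

```typescript
// Hypothetical sketch: collapse common URL variants onto one canonical form.
// Assumed policy: HTTPS only, bare host (no 'www.'), no trailing slash,
// no fragment, no utm_* / gclid / fbclid parameters, sorted query string.
const TRACKING_PARAMS = /^(utm_[a-z]+|gclid|fbclid)$/i;

function normalizeCanonical(input: string): string {
  const url = new URL(input); // the parser already lowercases scheme and host
  url.protocol = 'https:';
  url.hostname = url.hostname.replace(/^www\./, '');
  url.hash = '';
  // Copy the keys first: deleting while iterating URLSearchParams can skip entries.
  for (const key of Array.from(url.searchParams.keys())) {
    if (TRACKING_PARAMS.test(key)) url.searchParams.delete(key);
  }
  url.searchParams.sort(); // so ?b=2&a=1 and ?a=1&b=2 map to the same URL
  if (url.pathname.length > 1 && url.pathname.endsWith('/')) {
    url.pathname = url.pathname.replace(/\/+$/, '');
  }
  return url.toString();
}
```

With this policy, `http://www.example.com/blog/seo-guide/?utm_source=news#top` and `https://example.com/blog/seo-guide` resolve to the same canonical URL.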

SEO Mistake 6: Slow Page Load Times and Ignoring Image SEO

Forgetting that faster is better is an SEO mistake that costs you in two ways: rankings and users. Slow page speed is a confirmed Google ranking factor, and slow page load times push real visitors away before they even see your content. Images are consistently among the top contributors to poor Core Web Vitals scores in my experience. Lazy-loading a hero image delays Largest Contentful Paint. Missing alt text harms both accessibility and keyword relevance. And using JPEG when WebP is available inflates page weight unnecessarily.

To prioritize these fixes correctly, I always recommend starting with images because the gains are immediate and measurable. When you optimize images, you're fixing slow page speed, improving Core Web Vitals, and addressing accessibility all at once. This single SEO mistake, when corrected, often produces the most visible performance improvement on a site.

Auditing Image SEO at Scale

The fix: Use @power-seo/images to audit alt text quality, lazy loading correctness, and format optimization across every page.

import { analyzeAltText, auditLazyLoading, analyzeImageFormats } from '@power-seo/images';

const images = [
  { src: '/hero.jpg', alt: '', loading: 'lazy', isAboveFold: true, width: 1200, height: 630 },
  { src: '/product.png', alt: 'IMG_4821', loading: undefined, isAboveFold: false },
  { src: '/logo.webp', alt: 'Power SEO logo', loading: 'eager', isAboveFold: true },
];

// Catch missing alt text, filename-as-alt, and missing keyphrase
const altResult = analyzeAltText(images, 'seo tools');
altResult.issues.forEach((i) => console.log(`[${i.severity}] ${i.message}`));

// Flag hero images incorrectly marked lazy (LCP regression)
const lazyResult = auditLazyLoading(images);
// [error] /hero.jpg: Above-fold image has loading="lazy" - delays LCP

// Recommend WebP/AVIF conversion
const formatResult = analyzeImageFormats(images);
console.log(`Legacy formats: ${formatResult.legacyFormatCount}/${formatResult.totalImages}`);

The lazy loading audit is CWV-aware: it knows the difference between an above-the-fold image that should load eagerly and a below-the-fold image that should be lazy, which is something generic linters miss entirely.
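The rules behind that kind of audit are simple to express. Here is a minimal sketch that uses the same image shape as the example above. The filename pattern, the messages, and the `auditImages` helper are illustrative assumptions, and the real package's checks will differ.

```typescript
// Hypothetical sketch of three image checks: alt text, lazy loading vs. fold, legacy format.
interface ImageInput {
  src: string;
  alt: string;
  loading?: 'lazy' | 'eager';
  isAboveFold: boolean;
}

interface ImageIssue {
  src: string;
  severity: 'error' | 'warning';
  message: string;
}

// Alt text that looks like a camera or CMS filename, e.g. 'IMG_4821' or 'photo.jpg'.
const FILENAME_LIKE = /^(img|dsc|image|photo)[_-]?\d+|\.(jpe?g|png|gif|webp|avif)$/i;
const LEGACY_FORMAT = /\.(jpe?g|png|gif)$/i;

function auditImages(images: ImageInput[]): ImageIssue[] {
  const issues: ImageIssue[] = [];
  for (const img of images) {
    const alt = img.alt.trim();
    // Note: this treats empty alt as an error; purely decorative images legitimately use alt="".
    if (alt === '') {
      issues.push({ src: img.src, severity: 'error', message: 'Missing alt text' });
    } else if (FILENAME_LIKE.test(alt)) {
      issues.push({ src: img.src, severity: 'warning', message: `Alt text looks like a filename: "${alt}"` });
    }
    // Lazy-loading an above-the-fold image delays Largest Contentful Paint.
    if (img.isAboveFold && img.loading === 'lazy') {
      issues.push({ src: img.src, severity: 'error', message: 'Above-fold image has loading="lazy"' });
    }
    // Below-the-fold images without loading="lazy" are fetched eagerly by default.
    if (!img.isAboveFold && img.loading !== 'lazy') {
      issues.push({ src: img.src, severity: 'warning', message: 'Below-fold image is not lazy-loaded' });
    }
    if (LEGACY_FORMAT.test(img.src)) {
      issues.push({ src: img.src, severity: 'warning', message: 'Consider WebP or AVIF' });
    }
  }
  return issues;
}
```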

SEO Mistake 7: Poor Internal Linking, Orphan Pages, and Low Quality Backlinks

Poor internal linking and orphan pages are two sides of the same SEO mistake, and they often come with a third companion: low-quality backlinks. Orphan pages (pages that no other page links to) are effectively invisible to search engines crawling your site from the homepage. Internal linking distributes link equity, and pages with zero inbound links accumulate none of it, no matter how good their content is. Meanwhile, chasing low-quality backlinks from irrelevant sources to compensate is something Google has gotten very good at discounting or penalizing outright.

I've found orphan pages on sites that had been live for years. The fix for this particular SEO mistake is straightforward once you can see the full picture of your site's link graph.

Finding Orphan Pages and Generating Link Suggestions

The fix: Use @power-seo/links to build a complete link graph and surface orphan pages automatically. This is one of the most practical ways to use tools to fix specific issues systematically rather than guessing.

import { buildLinkGraph, findOrphanPages, suggestLinks, analyzeLinkEquity } from '@power-seo/links';

const graph = buildLinkGraph([
  { url: 'https://example.com/', links: ['/blog', '/about', '/products'] },
  { url: 'https://example.com/blog', links: ['/', '/blog/post-1'] },
  { url: 'https://example.com/blog/post-1', links: ['/blog'] },
  { url: 'https://example.com/hidden-guide', links: [] }, // orphan: no page links here
]);

const orphans = findOrphanPages(graph);
// [{ url: 'https://example.com/hidden-guide', outboundCount: 0 }]

// PageRank-style equity scoring
const equity = analyzeLinkEquity(graph);
equity.forEach(({ url, score }) => console.log(`${url}: ${score.toFixed(4)}`));

// Keyword-overlap-based link suggestions
// sitePages is assumed to be your page list (URLs plus content) in the shape suggestLinks expects
const suggestions = suggestLinks(sitePages, { minRelevance: 0.2 });
suggestions.forEach(({ from, to, anchorText }) => {
  console.log(`Link from ${from} to ${to} using "${anchorText}"`);
});

I recommend running this after every content publish in a headless CMS to ensure no new page goes live without at least one inbound internal link.
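Orphan detection and equity scoring come down to two steps: count inbound links per page, then run a basic PageRank power iteration. The sketch below does both on the same kind of page list used above. `findOrphans` and `pageRank` are hypothetical helpers, and page URLs are assumed to be already normalized (for example, `https://example.com/` with a trailing slash on the root). The 0.85 damping factor is the classic PageRank value. Pages with no outbound links spread their score evenly across all pages.

```typescript
// Hypothetical sketch: orphan detection and a basic PageRank over an internal link graph.
interface PageLinks {
  url: string; // absolute, already-normalized page URL
  links: string[]; // hrefs found on the page (relative or absolute)
}

// Resolve relative hrefs against the page URL and keep only links to known pages.
function buildEdges(pages: PageLinks[]): Map<string, Set<string>> {
  const known = new Set(pages.map((p) => p.url));
  const edges = new Map<string, Set<string>>();
  for (const page of pages) {
    const targets = new Set<string>();
    for (const href of page.links) {
      const target = new URL(href, page.url).toString();
      if (known.has(target) && target !== page.url) targets.add(target);
    }
    edges.set(page.url, targets);
  }
  return edges;
}

function findOrphans(pages: PageLinks[]): string[] {
  const inbound = new Map<string, number>(pages.map((p): [string, number] => [p.url, 0]));
  buildEdges(pages).forEach((targets) => {
    targets.forEach((t) => inbound.set(t, (inbound.get(t) ?? 0) + 1));
  });
  return pages.map((p) => p.url).filter((url) => inbound.get(url) === 0);
}

function pageRank(pages: PageLinks[], damping = 0.85, iterations = 50): Map<string, number> {
  const edges = buildEdges(pages);
  const urls = pages.map((p) => p.url);
  const n = urls.length;
  let rank = new Map<string, number>(urls.map((u): [string, number] => [u, 1 / n]));
  for (let i = 0; i < iterations; i++) {
    // Every page starts each round with the "random jump" share.
    const next = new Map<string, number>(urls.map((u): [string, number] => [u, (1 - damping) / n]));
    for (const url of urls) {
      const targets = edges.get(url)!;
      const score = rank.get(url)!;
      if (targets.size === 0) {
        // Dangling page: spread its score across all pages.
        urls.forEach((u) => next.set(u, next.get(u)! + (damping * score) / n));
      } else {
        targets.forEach((t) => next.set(t, next.get(t)! + (damping * score) / targets.size));
      }
    }
    rank = next;
  }
  return rank;
}
```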

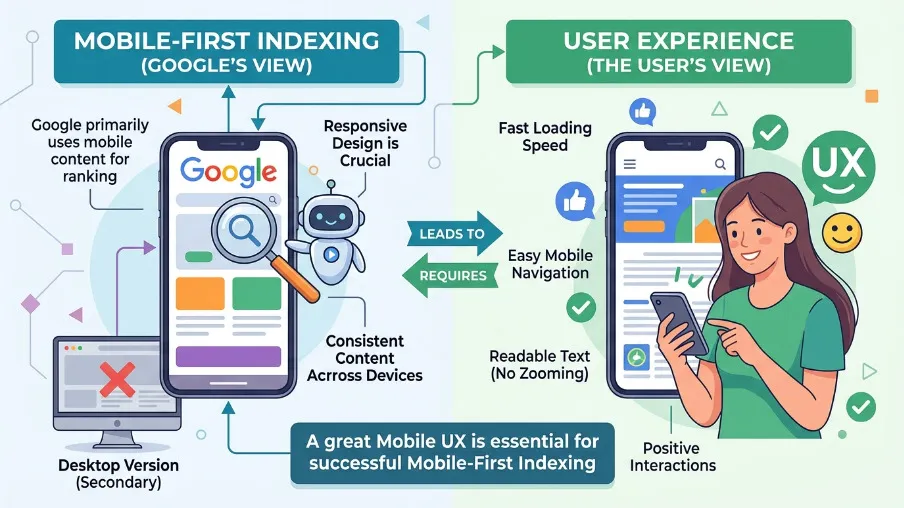

SEO Mistake 8: Ignoring Mobile-First Indexing and User Experience

Ignoring mobile-first indexing in 2026 is not a minor SEO mistake. It's a fundamental misunderstanding of how Google works today. Google crawls and ranks the mobile version of your site first. If your mobile experience is slow, broken, or stripped of content that only appears on desktop, you're being ranked on an inferior version of your own site.

This connects directly to ignoring user experience more broadly, which is its own compounding SEO mistake. Slow page speed on mobile, text that's too small, buttons that are too close together, and layouts that break on smaller screens all send negative engagement signals. Google reads those signals. I think of mobile-first indexing and user experience as two parts of the same requirement: your site has to work well for real people on real devices, or rankings will reflect that failure.

The same @power-seo/readability and @power-seo/images tools I mentioned earlier are your first line of defense here. Readable content, fast-loading images, and correctly sized media all contribute directly to a mobile experience that Google will reward.

SEO Mistake 9: Missing Structured Data and Neglecting Local SEO

Missing structured data and neglecting local SEO are two SEO mistakes that often get deprioritized because their impact isn't immediately visible in rankings. Structured data (JSON-LD) is what makes pages eligible for rich results in Google Search, such as product star ratings, recipe cards, and article carousels. Google has restricted FAQ rich results mainly to well-known government and health sites and has retired HowTo rich results, so don't count on those two. Pages without structured data compete for basic blue links only. And for businesses with a physical presence or a defined service area, neglecting local SEO is an equally serious gap. Local schema markup (LocalBusiness with a PostalAddress, GeoCoordinates, and areaServed) complements your Google Business Profile in connecting your structured data strategy to map results and local pack visibility.
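The @power-seo/schema example in this section focuses on article-level types. Here is a minimal sketch of the local side built from plain objects: a schema.org `LocalBusiness` with `PostalAddress`, `GeoCoordinates`, and `areaServed`, serialized so it can sit safely inside a `<script>` tag. The business details are placeholders, and `localBusinessJsonLd` and `toScriptTag` are hypothetical helpers, not @power-seo/schema builders.

```typescript
// Hypothetical sketch: LocalBusiness JSON-LD built from plain objects.
interface LocalBusinessInput {
  name: string;
  url: string;
  telephone: string;
  street: string;
  city: string;
  postalCode: string;
  country: string; // ISO 3166-1 alpha-2, e.g. 'US'
  latitude: number;
  longitude: number;
  areaServed: string[]; // city names
}

function localBusinessJsonLd(input: LocalBusinessInput): Record<string, unknown> {
  return {
    '@context': 'https://schema.org',
    '@type': 'LocalBusiness',
    name: input.name,
    url: input.url,
    telephone: input.telephone,
    address: {
      '@type': 'PostalAddress',
      streetAddress: input.street,
      addressLocality: input.city,
      postalCode: input.postalCode,
      addressCountry: input.country,
    },
    geo: { '@type': 'GeoCoordinates', latitude: input.latitude, longitude: input.longitude },
    areaServed: input.areaServed.map((name) => ({ '@type': 'City', name })),
  };
}

// Escape '<' as \u003c so a value containing '</script>' cannot close the tag early.
function toScriptTag(data: Record<string, unknown>): string {
  const json = JSON.stringify(data).replace(/</g, '\\u003c');
  return `<script type="application/ld+json">${json}</script>`;
}
```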

Type-Safe JSON-LD with Validation

The fix: Use @power-seo/schema for 23 type-safe schema builders and a CI-ready validation function. When you use tools like this, you're not just adding structured data; you're enforcing correctness automatically so invalid markup never reaches production and creates a new SEO mistake you have to go back and untangle.

import { article, faqPage, breadcrumbList, schemaGraph, validateSchema, toJsonLdString } from '@power-seo/schema';

const graph = schemaGraph([
  article({
    headline: 'SEO Mistakes: Fixed Using the Power SEO Toolkit',
    description: 'A practical guide to auditing and correcting common SEO errors.',
    datePublished: '2026-04-21',
    author: { name: 'Power SEO Team', url: 'https://example.com/about' },
    image: { url: 'https://example.com/og/seo-mistakes.jpg', width: 1200, height: 630 },
  }),
  faqPage([
    { question: 'What is the biggest SEO mistake?', answer: 'Ignoring technical foundations like sitemaps, canonical tags, and structured data.' },
    { question: 'How do I fix orphan pages?', answer: 'Use a link graph tool to detect pages with zero inbound links and add contextual internal links.' },
  ]),
  breadcrumbList([
    { name: 'Home', url: 'https://example.com' },
    { name: 'Blog', url: 'https://example.com/blog' },
    { name: 'SEO Mistakes' },
  ]),
]);

// Validate before deployment
const validation = validateSchema(graph);
if (!validation.valid) {
  validation.issues
    .filter((i) => i.severity === 'error')
    .forEach((i) => console.error(`✗ [${i.field}] ${i.message}`));
  process.exit(1);
}

// Safe to render
const jsonLd = toJsonLdString(graph);

SEO Mistake 10: Not Measuring What Actually Matters

I see many teams run SEO audits in isolation from actual traffic data, and I consider this its own serious SEO mistake. A page can have a perfect audit score and still generate no clicks because it ranks for keywords nobody searches, or its SERP presentation doesn't drive clicks even when it appears. Without measurement, you have no way of knowing whether the SEO mistakes you've fixed are actually moving the needle.

Correlating Audit Scores with Real Traffic

The fix: Use @power-seo/analytics to merge Google Search Console data with audit results and measure whether your corrections to common SEO mistakes are actually driving traffic.

import { mergeGscWithAudit, correlateScoreAndTraffic, buildDashboardData } from '@power-seo/analytics';

const gscPages = [
  { url: '/blog/seo-mistakes', clicks: 1240, impressions: 18500, ctr: 0.067, position: 4.2 },
  { url: '/blog/meta-tags', clicks: 380, impressions: 9200, ctr: 0.041, position: 8.7 },
];

const auditResults = [
  { url: '/blog/seo-mistakes', score: 88, issues: [] },
  { url: '/blog/meta-tags', score: 44, issues: [] },
];

const dashboard = buildDashboardData({
  gscPages,
  gscQueries: [
    { query: 'seo mistakes to avoid', clicks: 820, impressions: 9400, ctr: 0.087, position: 3.1 },
  ],
  auditResults,
});

console.log(dashboard.overview.totalClicks); // 1620
console.log(dashboard.overview.averageAuditScore); // 66

// Pearson correlation: are higher audit scores associated with more traffic?
const insights = mergeGscWithAudit(gscPages, auditResults);
const correlation = correlateScoreAndTraffic(insights);
// With only two pages, Pearson r is trivially 1 or -1; use your full page set for a meaningful value
console.log(`Correlation: ${correlation.correlation.toFixed(3)}`);

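This answers a question every SEO practitioner asks but most tools can't: on your specific site, do pages with better audit scores actually earn more organic traffic? Keep in mind that correlation shows association, not cause. To see whether a specific fix drove traffic, compare the same pages before and after the change.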
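The Pearson coefficient is simple enough to compute by hand, which also makes its limits visible. With only two pages it is always 1 or -1, and with one constant series it is undefined. Here is a minimal sketch that pairs audit scores with Search Console clicks by URL. `pearson` and `scoreClickPairs` are hypothetical helpers, not @power-seo/analytics exports.

```typescript
// Sketch: Pearson correlation between audit scores and clicks for the same pages.
function pearson(xs: number[], ys: number[]): number {
  if (xs.length !== ys.length || xs.length < 2) {
    throw new Error('Need two equal-length series with at least two points');
  }
  const n = xs.length;
  const meanX = xs.reduce((a, b) => a + b, 0) / n;
  const meanY = ys.reduce((a, b) => a + b, 0) / n;
  let cov = 0;
  let varX = 0;
  let varY = 0;
  for (let i = 0; i < n; i++) {
    const dx = xs[i] - meanX;
    const dy = ys[i] - meanY;
    cov += dx * dy;
    varX += dx * dx;
    varY += dy * dy;
  }
  if (varX === 0 || varY === 0) return NaN; // undefined when one series is constant
  return cov / Math.sqrt(varX * varY);
}

// Pair each audited page with its Search Console clicks, skipping pages with no GSC data.
function scoreClickPairs(
  audits: { url: string; score: number }[],
  gsc: { url: string; clicks: number }[],
): { scores: number[]; clicks: number[] } {
  const clicksByUrl = new Map(gsc.map((p): [string, number] => [p.url, p.clicks]));
  const scores: number[] = [];
  const clicks: number[] = [];
  for (const a of audits) {
    const c = clicksByUrl.get(a.url);
    if (c !== undefined) {
      scores.push(a.score);
      clicks.push(c);
    }
  }
  return { scores, clicks };
}
```

Fed the two pages from the example above, this returns exactly 1.000. That number says nothing until you run it across dozens or hundreds of pages.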

Stop Making These SEO Mistakes

SEO mistakes rarely announce themselves. They accumulate quietly: a missing sitemap here, an orphan page there, short descriptions with no keywords written in five minutes three years ago, duplicate content that's been splitting your ranking signals for months, slow page load times nobody noticed because the team was testing on fast Wi-Fi. Eventually, the compounding effect of these SEO mistakes becomes hard to undo.

The good news is that most of the top SEO mistakes to avoid are fully programmable. Every fix in this article is code you can write once, run automatically in CI, and enforce across your entire site going forward. You don't need to manually check titles for pixel-accurate truncation, remember to optimize images on every upload, manually track orphan pages, or wonder whether you're misaligning content with search intent. You build the checks once and let them run.

The @power-seo ecosystem gives you a complete, TypeScript-first toolkit for eliminating SEO mistakes at every layer: technical auditing to address crawl errors and technical issues, content analysis to catch thin pages and poor keyword research, structured data validation, image SEO, internal link graphs to eliminate poor internal linking, redirect management, SERP previews, and data correlation. All 17 packages are zero-dependency, independently installable, and tree-shakeable, so you only use what you need.

Prioritize these fixes in order: start with @power-seo/audit to get a baseline score and see which SEO mistakes are hurting you most. Then fix meta tags and short descriptions with no keywords, add structured data, resolve orphan pages, optimize images, and handle duplicate content. Measure the impact with @power-seo/analytics. Repeat.

npm install @power-seo/audit @power-seo/schema @power-seo/links @power-seo/images

The sites that rank consistently well aren't the ones that got lucky with one viral piece of content. They're the ones that developed a clear SEO strategy, identified their SEO mistakes early, fixed them systematically, and never stopped paying attention to the signals that matter.

Frequently Asked Questions About SEO Mistakes

Q: What is the single biggest SEO mistake most websites make?

Neglecting technical SEO and ignoring poor crawlability. If Googlebot can't crawl your pages, discover them via a sitemap, or follow redirect chains correctly, no amount of content optimization will help. This is the foundational SEO mistake that makes everything else harder. Address crawl errors and technical issues first, before you touch anything else.

Q: How do I find orphan pages on my site?

Build a directed link graph from your site's pages and find nodes with zero inbound links. The @power-seo/links package does this in memory with findOrphanPages(graph), and no external crawling tool is required. Cross-reference the results with your sitemap to prioritize fixes. Orphan pages are one of those SEO mistakes that are completely invisible without the right tooling.

Q: Does keyword density still matter in 2026?

It matters in the sense that poor keyword research and zero mentions of your focus keyphrase are clear signals of misalignment. But keyword stuffing and over-optimization (forcing a keyword in unnaturally) is itself a serious SEO mistake: it violates Google's spam policies and can get pages demoted. Target 0.5 to 2.5% density with natural placement in the title, first paragraph, and subheadings. And always make sure you're matching content with search intent, not just hitting a number.

Q: How do I know if my structured data is valid before publishing?

Use validateSchema() from @power-seo/schema. It checks required fields without throwing and returns structured { valid, issues } objects that are safe to run in CI pipelines. Shipping invalid structured data is one of those SEO mistakes that's completely preventable with the right tooling. Google's Rich Results Test is useful for visual verification afterward.

Q: What's the fastest way to audit a site for SEO mistakes?

Use auditSite() from @power-seo/audit. Pass an array of page inputs and get a 0 to 100 aggregate score, per-category breakdowns (meta, content, structure, performance), and a prioritized issue list, entirely local with no external API calls required. This is the best starting point for anyone who wants to use tools to fix specific SEO mistakes in a structured, prioritized way.

Q: What does it mean to focus on E-E-A-T?

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness. It's Google's framework for evaluating content quality, and ignoring it is one of the longer-term SEO mistakes that gradually erodes rankings. In practice, it means writing content that demonstrates real knowledge, citing credible sources, keeping information updated so you're not ignoring content updates, and making sure your author and organization information is clearly visible on the page.
