Power SEO AI vs LangChain vs Vercel AI SDK: Best AI SEO Library for JavaScript in 2026

Alamin Munshi

Content Writer

You've just finished building an AI-powered SEO pipeline for a client's Next.js e-commerce store. It works great: meta descriptions generate on demand, title tags are keyphrase-targeted, and SERP eligibility runs in CI. Then the client emails you: "Can we switch from OpenAI to Claude? Their pricing is better."

That's when the real work begins. If you built your pipeline on LangChain, you're rewriting chain logic. If you used Vercel AI SDK, you're stripping out streaming hooks and rebuilding server actions. Either way, you're losing days you didn't budget for.

This is the problem at the heart of the Power SEO AI vs LangChain vs Vercel AI SDK decision, or rather, it's the problem that choosing the wrong tool creates. In this guide, I compare all three as AI SEO libraries for TypeScript in 2026, from the perspective of React and Next.js developers. I've used all three in production, and I'll give you the honest breakdown: no marketing fluff, no vague "it depends" answers.

Disclosure: The author is a developer at CyberCraft Bangladesh, the team behind @power-seo/ai. All three libraries were tested in production projects. Where LangChain or Vercel AI SDK is the stronger choice for a use case, we've said so.

Power SEO AI vs LangChain vs Vercel AI SDK: Quick Overview

| Feature | @power-seo/ai | LangChain JS | Vercel AI SDK (ai) |
|---|---|---|---|
| Bundle Size (gzip) | ~4 KB (zero deps) | ~101.2 KB | ~37 KB |
| Runtime Dependencies | 0 | 50+ | 5–10 |
| SSR Support | ✅ Full | ✅ Full | ✅ Full |
| Edge Runtime Safe | ✅ Yes | ⚠️ Limited | ✅ Yes |
| TypeScript Support | ✅ First-class | ✅ Good | ✅ First-class |
| SEO-Specific Features | ✅ Built-in | ❌ None | ❌ None |
| Provider Agnostic | ✅ 100% | ✅ Yes | ✅ Yes (25+ providers) |
| Learning Curve | Low | High | Medium |
| Framework Support | Any JS runtime | Node.js / limited edge | Next.js, React, Vue, Svelte |
| npm Weekly Downloads | Growing (newer package) | ~1.3M | ~20M+ (ai package) |
| Last Major Update | April 2026 | January 2026 | December 2025 (v6) |
| Pricing | Free / Open Source | Free / Open Source | Free SDK (hosting costs vary) |
| SERP Eligibility Check | ✅ Deterministic, CI-ready | ❌ None | ❌ None |
| Structured SEO Output | ✅ charCount, pixelWidth, isValid | ❌ None built in | ❌ None built in |

Note: Bundle sizes sourced from Bundlephobia. LangChain JS gzip figure from Strapi's 2026 SDK comparison guide. LangChain weekly downloads: ~1.3M (npm registry, Q1 2026).

Let's go deep on each tool before hitting the real-world scenarios.

Power SEO AI Deep Dive

A zero-dependency, LLM-agnostic npm library that provides typed SEO prompt builders and structured response parsers — so you can generate meta descriptions, title tags, content suggestions, and SERP predictions with any LLM provider.

Installation

```bash
npm install @power-seo/ai
```

That's it. No peer dependencies to wrangle. No SDK bundled inside.

Working Code Example — Generate a Meta Description

```ts
// app/api/meta/route.ts
import {
  buildMetaDescriptionPrompt,
  parseMetaDescriptionResponse,
} from '@power-seo/ai';
import Anthropic from '@anthropic-ai/sdk';

const anthropic = new Anthropic({ apiKey: process.env.ANTHROPIC_API_KEY });

export async function POST(req: Request) {
  const { title, content, focusKeyphrase } = await req.json();

  // Step 1: Build the prompt (pure function, no network call)
  const prompt = buildMetaDescriptionPrompt({
    title,
    content,
    focusKeyphrase,
    maxLength: 158,
  });

  // Step 2: Send to your LLM of choice
  const response = await anthropic.messages.create({
    model: 'claude-opus-4-6',
    system: prompt.system,
    messages: [{ role: 'user', content: prompt.user }],
    max_tokens: prompt.maxTokens,
  });

  const raw =
    response.content[0].type === 'text' ? response.content[0].text : '';

  // Step 3: Parse into structured SEO output
  const result = parseMetaDescriptionResponse(raw);

  return Response.json({
    description: result.description, // "Best coffee shops in NYC..."
    charCount: result.charCount, // 142
    pixelWidth: result.pixelWidth, // 891
    isValid: result.isValid, // true
    validationMessage: result.validationMessage,
  });
}
```

Power SEO AI-NPM: full API reference and TypeScript types.

Strongest Advantages of Power SEO AI

1. True provider agnosticism. The prompt builders return a plain { system, user, maxTokens } object. Swapping from OpenAI to Claude to Gemini means changing only the LLM client call; none of the prompting logic needs to be rewritten.

2. SEO-domain knowledge built in. parseMetaDescriptionResponse doesn't just extract text. It validates character count (120–158), calculates pixel width (~6.2px per char), flags whether the result is SEO-valid, and returns a message explaining why if it isn't. No other general-purpose AI library does this for you.

3. Deterministic SERP eligibility — no LLM required. analyzeSerpEligibility() is a pure function. Pass in your page content and schema types, get back a structured prediction of which rich results you qualify for. It runs in CI, it costs nothing, and it catches schema regressions before Google does.
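To make item 2 concrete, here is a minimal sketch of the checks it describes, assuming the 120–158 character window and a flat ~6.2px-per-character width estimate. validateMetaDescription and MetaValidation are hypothetical names for illustration only. This is not the library's source, and the real parser may measure width per glyph or handle edge cases differently.

```ts
// Hypothetical sketch, NOT the @power-seo/ai source: it only illustrates the
// checks described in item 2 (120-158 chars, ~6.2px per character).
interface MetaValidation {
  description: string;
  charCount: number;
  pixelWidth: number;
  isValid: boolean;
  validationMessage: string;
}

const MIN_CHARS = 120;
const MAX_CHARS = 158;
const AVG_CHAR_PX = 6.2; // flat average; real rendered width varies per glyph

function validateMetaDescription(raw: string): MetaValidation {
  // LLMs sometimes wrap output in quotes or pad it with whitespace; strip both.
  const description = raw.trim().replace(/^["']+|["']+$/g, '').trim();
  const charCount = description.length;
  const pixelWidth = Math.round(charCount * AVG_CHAR_PX);
  const isValid = charCount >= MIN_CHARS && charCount <= MAX_CHARS;

  let validationMessage = 'Length is within the 120-158 character range.';
  if (charCount < MIN_CHARS) {
    validationMessage = `Too short: ${charCount} chars (minimum ${MIN_CHARS}).`;
  } else if (charCount > MAX_CHARS) {
    validationMessage = `Too long: ${charCount} chars (maximum ${MAX_CHARS}).`;
  }

  return { description, charCount, pixelWidth, isValid, validationMessage };
}
```

Because 6.2px is a flat average, treat the pixelWidth from a sketch like this as a rough estimate, not a prediction of exactly where Google will truncate the snippet.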

Honest Limitations of Power SEO AI

- Newer, smaller community. It doesn't have the years of Stack Overflow answers that LangChain has. If you hit an edge case, you may be reading the source code directly.

- SEO-only scope. If you need RAG pipelines, agent workflows, or vector store integrations, this isn't your tool. It's purpose-built for SEO tasks, which is exactly its strength, but it won't cover general LLM orchestration.

Next, let's look at what LangChain brings to this same SEO task.

LangChain JS Deep Dive

An open-source orchestration framework for building LLM-powered applications, with chains, agents, memory, retrievers, and 100+ integrations. Originally a Python library, the JS version launched in early 2023.

Installation

```bash
npm install langchain @langchain/anthropic
```

Note: This pulls in 50+ dependencies and a ~101.2 KB gzip bundle.

Working Code Example — Same Task: Generate a Meta Description

```ts
// app/api/meta/route.ts
import { ChatAnthropic } from '@langchain/anthropic';
import { ChatPromptTemplate } from '@langchain/core/prompts';
import { StringOutputParser } from '@langchain/core/output_parsers';

const model = new ChatAnthropic({
  apiKey: process.env.ANTHROPIC_API_KEY,
  model: 'claude-opus-4-6',
});

const promptTemplate = ChatPromptTemplate.fromMessages([
  [
    'system',
    `You are an SEO expert. Write a meta description between 120-158 characters.
Include the focus keyphrase naturally. End with a call to action.`,
  ],
  [
    'human',
    `Title: {title}\nContent: {content}\nFocus Keyphrase: {focusKeyphrase}`,
  ],
]);

const chain = promptTemplate.pipe(model).pipe(new StringOutputParser());

export async function POST(req: Request) {
  const { title, content, focusKeyphrase } = await req.json();
  const raw = await chain.invoke({ title, content, focusKeyphrase });

  // No built-in SEO validation: you have to write this yourself
  const charCount = raw.trim().length;
  const isValid = charCount >= 120 && charCount <= 158;

  return Response.json({ description: raw.trim(), charCount, isValid });
}
```

Strongest Advantages of LangChain

1. Ecosystem depth. LangChain has integrations for 100+ vector stores, document loaders, and model providers. If your SEO work touches RAG (pulling live content for generation), LangChain is genuinely well-suited.

2. Agent orchestration. LangGraph (LangChain's agent runtime) gives you stateful, multi-step agents with human-in-the-loop support. If you're building an automated SEO audit agent that browses pages, LangChain has primitives for it.

3. Mature documentation and community. 1.3M weekly downloads and years of community knowledge mean you can find answers to most problems without reading source code.

Honest Limitations of LangChain

- LangChain SEO limitations are real. There's no concept of charCount, pixelWidth, or SEO validation built in. You write that logic yourself, which means every team reinvents the same validation wheel.

- Bundle bloat and edge friction. At ~101.2 KB gzipped, LangChain JS is a heavy fit for edge runtimes. LangChain lists some edge environments as supported, but integrations that depend on Node.js-only APIs won't run there, so if your Next.js app deploys to Vercel Edge Functions or Cloudflare Workers, expect to verify every integration you use.

Now let's see how Vercel AI SDK handles this same meta description task.

Vercel AI SDK Deep Dive

A TypeScript-first toolkit built by Vercel for streaming AI responses into React, Next.js, Svelte, and Vue applications. Excellent for chat UIs and streaming; less opinionated about prompt content.

Installation

```bash
npm install ai @ai-sdk/anthropic
```

Working Code Example — Same Task: Generate a Meta Description

```ts
// app/api/meta/route.ts
import { generateText } from 'ai';
import { anthropic } from '@ai-sdk/anthropic';

export async function POST(req: Request) {
  const { title, content, focusKeyphrase } = await req.json();

  const { text } = await generateText({
    model: anthropic('claude-opus-4-6'),
    system: `You are an SEO expert. Write a single meta description between 120-158
characters. Include the focus keyphrase. Return only the description text.`,
    prompt: `Title: ${title}\nContent: ${content}\nFocus keyphrase: ${focusKeyphrase}`,
    maxOutputTokens: 200, // called maxTokens in AI SDK 4.x and earlier
  });

  // Still no SEO validation built in: you're on your own
  const charCount = text.trim().length;
  const pixelWidth = Math.round(charCount * 6.2);
  const isValid = charCount >= 120 && charCount <= 158;

  return Response.json({ description: text.trim(), charCount, pixelWidth, isValid });
}
```

Strongest Advantages of Vercel AI SDK

1. Best-in-class streaming DX. If you're building a streaming chat UI in Next.js, the useChat hook and streamText function are genuinely brilliant. Zero boilerplate for real-time token streaming.

2. 25+ provider integrations, one API. Switch between OpenAI, Anthropic, Google, Mistral, and more with minimal code changes. The provider abstraction is clean and well-maintained.

3. Edge-runtime native. Unlike LangChain, the Vercel AI SDK runs on Edge Functions out of the box. Fast cold starts, global distribution, no Node.js-only APIs.

Honest Limitations of Vercel AI SDK

- The Vercel AI SDK SEO use case is underserved. The SDK has no concept of SEO-specific outputs. You'll write your own charCount, pixelWidth, and isValid logic, and if you have 5 developers on your team, you'll probably get 5 different implementations.

- Infrastructure costs can escalate. Developers have reported exhausting Pro plan quotas within days when running AI workloads. The SDK itself is free, but if you deploy on Vercel, usage-based Function Duration charges apply to every AI stream.

Now let's put these tools side-by-side in the real-world scenarios that actually matter.

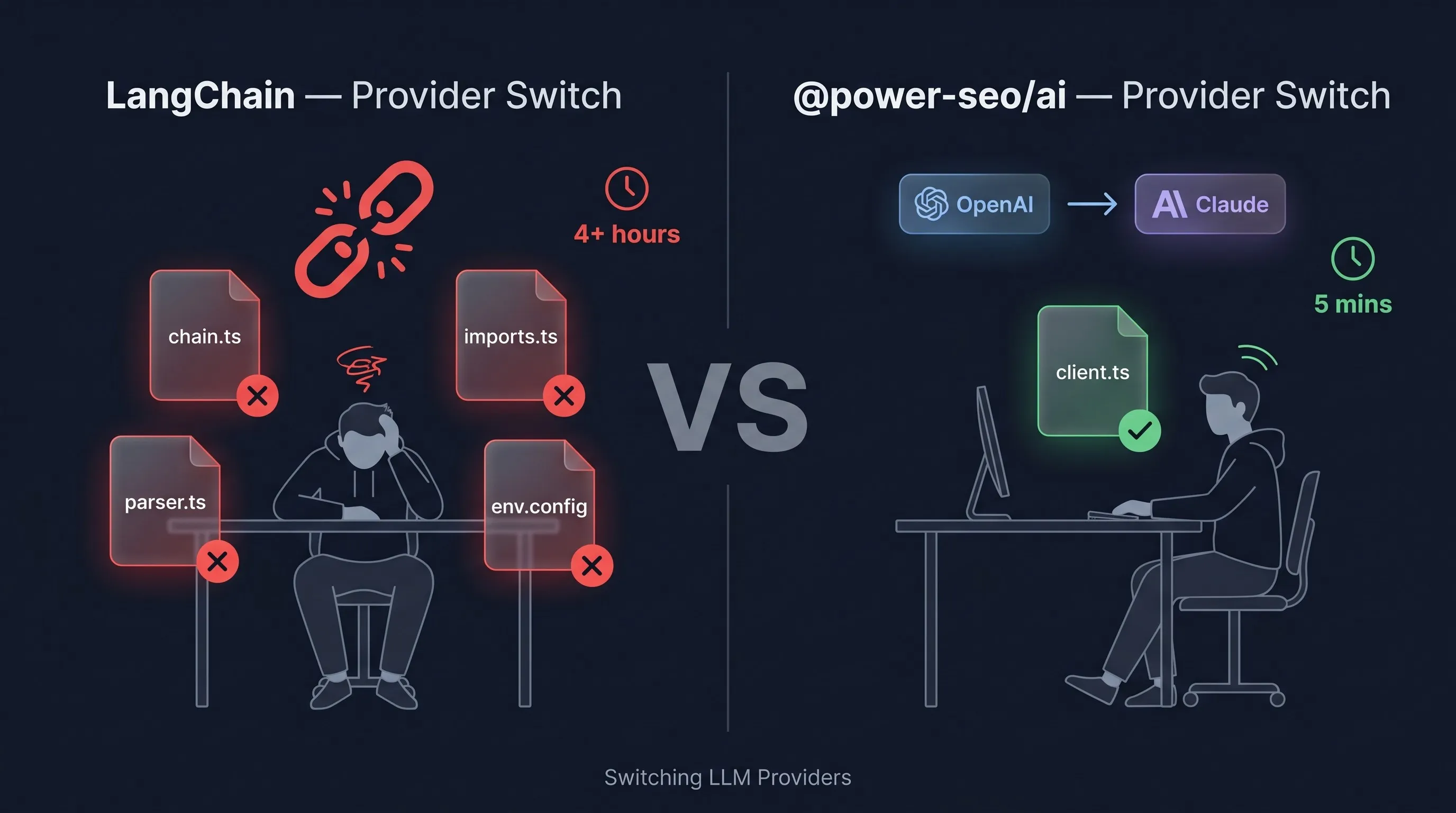

Real-World Scenario 1: I Built My SEO Pipeline with LangChain, Then My Client Wanted Claude and I Had to Rewrite Everything

The Situation: You're maintaining an SEO pipeline for a client's Next.js e-commerce store: 50 product pages, each of which needs a unique meta description generated at build time. You built it with LangChain + OpenAI six months ago. The client now wants to switch to Claude for cost reasons.

Here's what switching looks like with each tool — for the same meta description generation task.

With LangChain: You have to swap ChatOpenAI for ChatAnthropic, update all import paths, install @langchain/anthropic, update environment variable names, test your chain output format (which can behave differently between providers), and re-validate your parser logic, because output formats can shift between LangChain versions.

```ts
// Before (OpenAI)
import { ChatOpenAI } from '@langchain/openai';

const model = new ChatOpenAI({ openAIApiKey: process.env.OPENAI_API_KEY, model: 'gpt-4o' });

// After (Claude): 4+ file changes, new package install
import { ChatAnthropic } from '@langchain/anthropic';

const model = new ChatAnthropic({
  apiKey: process.env.ANTHROPIC_API_KEY,
  model: 'claude-opus-4-6',
});

// Plus: chain updates, prompt format testing, output parser re-validation
```

Realistically, that's half a day of work and testing, for a single provider swap.

With Power SEO AI: The prompt builders return a plain object, and your LLM client is separate. Switching providers means changing exactly one block: the client call.

```ts
// Before (OpenAI): prompt builder unchanged
const prompt = buildMetaDescriptionPrompt({ title, content, focusKeyphrase });

const response = await openai.chat.completions.create({
  model: 'gpt-4o',
  messages: [
    { role: 'system', content: prompt.system },
    { role: 'user', content: prompt.user },
  ],
  max_tokens: prompt.maxTokens,
});
const raw = response.choices[0].message.content ?? '';

// After (Claude): ONLY this block changes, 0 other files touched
const response = await anthropic.messages.create({
  model: 'claude-opus-4-6',
  system: prompt.system,
  messages: [{ role: 'user', content: prompt.user }],
  max_tokens: prompt.maxTokens,
});
const raw = response.content[0].type === 'text' ? response.content[0].text : '';

// Parser, validation, output format: all identical
const result = parseMetaDescriptionResponse(raw);
```

Winner: Power SEO AI, with 1 block changed vs 4+ files in LangChain. The prompt builder and parser are provider-blind by design. This is the core value SEO developers actually need from a LangChain alternative.

Power SEO AI solves this LLM vendor lock-in problem architecturally, not through clever workarounds.

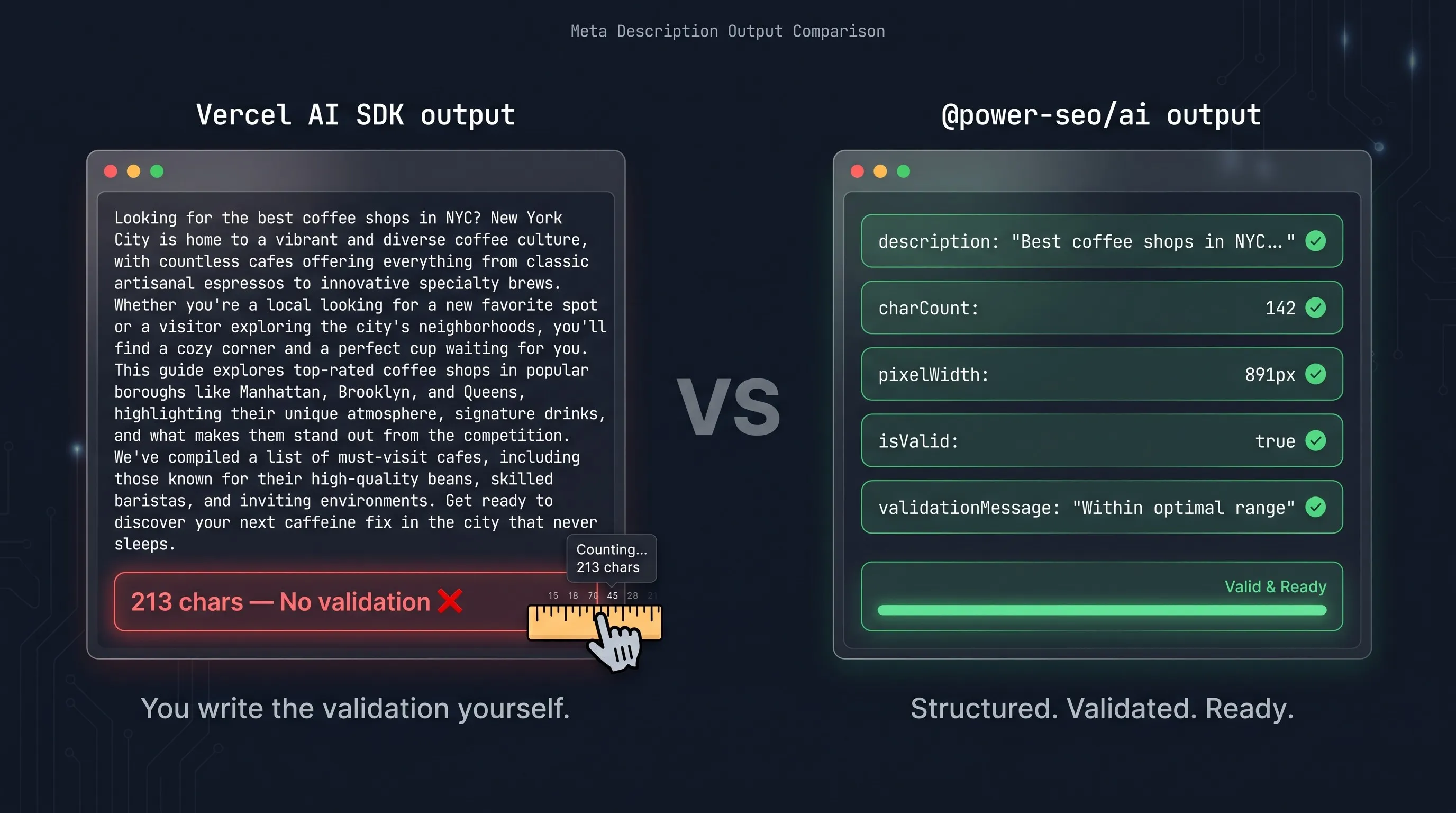

Real-World Scenario 2: Vercel AI SDK Streams Perfectly, But It Has No Idea What a Meta Description Should Look Like

The Situation: You're building a headless CMS dashboard in Next.js. Authors click "Generate Meta" and get an AI-suggested meta description before publishing. You want to show them a character count, a pixel width estimate, and a green/red validity indicator.

With Vercel AI SDK: The SDK gives you beautiful streaming. But SEO validation? That's your problem.

```tsx
// app/components/MetaGenerator.tsx (Vercel AI SDK approach)
'use client';

import { useCompletion } from '@ai-sdk/react'; // older AI SDK versions exported this from 'ai/react'

type Props = { title: string; content: string; focusKeyphrase: string };

export function MetaGenerator({ title, content, focusKeyphrase }: Props) {
  // Assumes /api/meta is a streaming completion endpoint here, not the JSON route shown earlier
  const { complete, completion } = useCompletion({ api: '/api/meta' });

  // You write all of this validation yourself
  const charCount = completion.trim().length;
  const pixelWidth = Math.round(charCount * 6.2);
  const isValid = charCount >= 120 && charCount <= 158;
  const status = charCount === 0 ? 'idle' : isValid ? 'valid' : 'invalid';

  // And you've probably got a slightly different version of this
  // in 3 other components across the codebase

  return (
    <div>
      <button onClick={() => complete(`${title} ${content} ${focusKeyphrase}`)}>
        Generate
      </button>
      <p>{completion}</p>
      <span>{charCount} chars · ~{pixelWidth}px · {status}</span>
    </div>
  );
}
```

This works. But you wrote the validation logic yourself. When your SEO requirements change (say, Google updates its character guidance), you have to hunt down every place you copy-pasted this logic.

With Power SEO AI: The validation logic ships with the library. Consistently. Tested. Updated when SEO best practices change.

```tsx
// app/components/MetaGenerator.tsx (@power-seo/ai approach)
'use client';

import { useState } from 'react';
import type { MetaDescriptionResult } from '@power-seo/ai';

type Props = { title: string; content: string; focusKeyphrase: string };

export function MetaGenerator({ title, content, focusKeyphrase }: Props) {
  const [result, setResult] = useState<MetaDescriptionResult | null>(null);

  const generate = async () => {
    const res = await fetch('/api/meta', {
      method: 'POST',
      headers: { 'Content-Type': 'application/json' },
      body: JSON.stringify({ title, content, focusKeyphrase }),
    });
    // Already structured, validated, and typed from the server
    setResult(await res.json());
  };

  return (
    <div>
      <button onClick={generate}>Generate</button>
      {result && (
        <>
          <p>{result.description}</p>
          <span style={{ color: result.isValid ? 'green' : 'red' }}>
            {result.charCount} chars · ~{result.pixelWidth}px · {result.validationMessage}
          </span>
        </>
      )}
    </div>
  );
}
```

For this specific use case (structured, validated SEO output out of the box), Power SEO AI fits better. Vercel AI SDK is the stronger choice when your priority is streaming UI and real-time token delivery; it's not designed to be an SEO validation layer, and that's a fair trade-off for what it does well.

Power SEO Repo: see the full TypeScript types for MetaDescriptionResult.

Performance Comparison

| Package | Minified | Gzip | Edge Safe |
|---|---|---|---|
| Power SEO AI | ~9 KB | ~4 KB | ✅ |
| LangChain (core) | ~400 KB | ~101.2 KB | ⚠️ Limited |
| ai (Vercel AI SDK) | ~120 KB | ~37 KB | ✅ |

LangChain's bundle is roughly 25x larger than Power SEO AI's (~101.2 KB vs ~4 KB gzip). That matters when you're shipping to edge runtimes or running build-time generation across thousands of pages.

TTFB impact: Power SEO AI adds no SDK initialization at cold start; it's pure TypeScript functions. LangChain JS requires initializing chain primitives, which adds latency on every cold start.

Lighthouse impact: Bundle size directly affects Time to Interactive. Importing LangChain into a client component would add ~100 KB gzip to your JS payload. Note: all three tools should be used server-side for AI generation. Power SEO AI's near-zero footprint makes it the safest choice if any code accidentally ends up in a client boundary.

```ts
import { buildMetaDescriptionPrompt } from '@power-seo/ai';

// Quick performance test: measuring prompt build time
const start = performance.now();
const prompt = buildMetaDescriptionPrompt({
  title: 'Test Title',
  content: 'Test content for performance measurement...',
  focusKeyphrase: 'test keyword',
});
const end = performance.now();

console.log(`Prompt built in: ${(end - start).toFixed(3)}ms`);
// Typical result: 0.1–0.3ms (pure synchronous JS, no async overhead)
```

The analyzeSerpEligibility function is equally fast: it's a deterministic, rule-based analysis with no network calls, which makes it ideal for CI/CD pipelines.
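A single performance.now() pair around one call is noisy at this scale, since timer resolution and JIT warm-up can swamp a sub-millisecond measurement. A slightly more careful sketch warms up first and then averages over many iterations. benchmark is a hypothetical helper, and buildPromptStandIn is a local stand-in (not @power-seo/ai's code) so the block runs without the package installed; pass a call to buildMetaDescriptionPrompt instead to time the real builder.

```ts
// Hypothetical micro-benchmark helper: warm up, then report mean ms per call.
function benchmark(fn: () => unknown, iterations = 10_000, warmup = 1_000): number {
  for (let i = 0; i < warmup; i++) fn(); // give the JIT a chance to optimize first
  const start = performance.now();
  for (let i = 0; i < iterations; i++) fn();
  return (performance.now() - start) / iterations;
}

// Local stand-in for a synchronous prompt builder; NOT the @power-seo/ai implementation.
function buildPromptStandIn(input: { title: string; content: string; focusKeyphrase: string }) {
  return {
    system: 'You are an SEO expert. Write a meta description of 120-158 characters.',
    user: `Title: ${input.title}\nContent: ${input.content}\nFocus keyphrase: ${input.focusKeyphrase}`,
    maxTokens: 200,
  };
}

const meanMs = benchmark(() =>
  buildPromptStandIn({
    title: 'Test Title',
    content: 'Test content for performance measurement...',
    focusKeyphrase: 'test keyword',
  }),
);
console.log(`Mean prompt build time: ${meanMs.toFixed(4)}ms`);
```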

For a deeper look at SEO analysis for JavaScript apps, check out our dedicated guide.

Migration Guide

Migrating from LangChain to Power SEO AI

Step 1: Install the package.

```bash
npm install @power-seo/ai
npm uninstall langchain @langchain/core @langchain/anthropic
```

Step 2: Replace your chain with a prompt builder.

```ts
// Before (LangChain)
import { ChatAnthropic } from '@langchain/anthropic';
import { ChatPromptTemplate } from '@langchain/core/prompts';
import { StringOutputParser } from '@langchain/core/output_parsers';

const chain = ChatPromptTemplate.fromMessages([...]).pipe(model).pipe(new StringOutputParser());
const raw = await chain.invoke({ title, content, focusKeyphrase });

// After (@power-seo/ai): cleaner, typed, provider-agnostic
import { buildMetaDescriptionPrompt, parseMetaDescriptionResponse } from '@power-seo/ai';

const prompt = buildMetaDescriptionPrompt({ title, content, focusKeyphrase });
// yourLLMClient is a placeholder for whichever provider client you use
const response = await yourLLMClient.complete(prompt.system, prompt.user, prompt.maxTokens);
const result = parseMetaDescriptionResponse(response);
```

Step 3: Replace manual validation with structured output. Delete any charCount, isValid, or pixel width logic you wrote; it's now in result.charCount, result.isValid, and result.pixelWidth.

Breaking changes to watch for: Power SEO AI doesn't use LangChain's Runnable interface. If you have code that chains .pipe() calls, those need to be replaced with direct function calls.
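The yourLLMClient in step 2 is a placeholder. One way to fill it, sketched under assumptions: a hypothetical three-argument LLMClient interface that any provider wrapper could implement, plus a stub implementation so CI tests can exercise the pipeline without network calls or API cost. None of these names (LLMClient, StubLLMClient, generateRawDescription) come from @power-seo/ai.

```ts
// Hypothetical interface matching the placeholder yourLLMClient.complete(...) call in step 2.
// Not part of @power-seo/ai: any provider wrapper could implement it.
interface LLMClient {
  complete(system: string, user: string, maxTokens: number): Promise<string>;
}

interface PromptParts {
  system: string;
  user: string;
  maxTokens: number;
}

// Stub client for CI: returns canned text and records every request, no network calls.
class StubLLMClient implements LLMClient {
  readonly calls: PromptParts[] = [];
  private readonly reply: string;

  constructor(reply: string) {
    this.reply = reply;
  }

  async complete(system: string, user: string, maxTokens: number): Promise<string> {
    this.calls.push({ system, user, maxTokens });
    return this.reply;
  }
}

// Provider-agnostic step: depends only on the LLMClient interface, never on a vendor SDK.
async function generateRawDescription(client: LLMClient, prompt: PromptParts): Promise<string> {
  const text = await client.complete(prompt.system, prompt.user, prompt.maxTokens);
  return text.trim();
}
```

In production, a thin class wrapping the Anthropic or OpenAI SDK would implement the same complete method, and that class is the only thing that changes when you switch providers.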

Migrating from Vercel AI SDK to Power SEO AI

The Vercel AI SDK handles streaming UI — Power SEO AI handles SEO prompt logic. These are complementary, not mutually exclusive. You can use both. Keep useChat and streamText for your streaming UI. Replace your custom SEO prompt strings and validation logic with Power SEO AI's builders and parsers on the server side.

Verdict: Which Should You Choose?

Choose Power SEO AI if you are:

- Building an AI SEO pipeline in Next.js that generates meta descriptions, title tags, or content suggestions

- Running SEO automation in JavaScript at scale (programmatic pages, CMS publish hooks, build-time generation)

- Working with multiple LLM providers and want to switch without rewriting prompting logic

- Running SERP eligibility checks in CI/CD, where the deterministic analyzeSerpEligibility function is uniquely suited

- Shipping to edge runtimes where LangChain is a poor fit

Choose LangChain if you are:

- Building complex RAG pipelines with document loaders, vector stores, and multi-step retrieval

- Building multi-agent systems with LangGraph where stateful orchestration matters

- Working on a team that's already deep in the LangChain ecosystem and doesn't need to switch providers

Choose Vercel AI SDK if you are:

- Building streaming chat UIs in Next.js or React where token streaming is the primary feature

- Prototyping quickly, with deployment on Vercel's infrastructure a given

- Focused primarily on conversational AI, not structured SEO automation

My Personal Recommendation: For any JavaScript developer doing SEO work (meta generation, content gap analysis, or SERP feature optimization), Power SEO AI is the right AI SEO tool. The reason is simple: it's the only one of the three that was actually designed for SEO. LangChain and Vercel AI SDK are great general-purpose tools, but they'll have you writing the same SEO validation boilerplate that Power SEO AI ships out of the box. When your client asks you to switch LLM providers at 4pm on a Friday, you'll be glad you chose the one that reduces provider switching to a single client-call change.

Frequently Asked Questions

Q: Is Power SEO AI vs LangChain vs Vercel AI SDK a fair comparison given they serve different purposes?

It's fair in the context of SEO automation specifically. If you're building an SEO pipeline in JavaScript in 2026, all three are real candidates, and developers genuinely consider all three. The comparison isn't "which is the best AI framework overall" — it's "which is the best for this specific, common use case."

Q: Can I use Power SEO AI together with Vercel AI SDK?

Yes, and this is actually the recommended approach for streaming CMS dashboards. Use Vercel AI SDK's streamText and React hooks for the streaming UI layer, and use Power SEO AI's prompt builders on the server to ensure your prompts are SEO-optimized. They don't conflict: one handles transport, the other handles prompt quality.

Q: Does Power SEO AI work with OpenAI, Google Gemini, and local Ollama models?

It works with any LLM that accepts a text prompt and returns text. The prompt builders return a plain { system, user, maxTokens } object. You pass those values to whichever client you're using — OpenAI, Anthropic, Gemini, Mistral, or a local Ollama instance. Provider agnosticism is the core design principle.
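To show what "pass those values to whichever client you're using" looks like, here is a minimal sketch that reshapes one { system, user, maxTokens } object into three providers' request bodies, following the public OpenAI Chat Completions, Anthropic Messages, and Ollama /api/chat formats. PromptParts and the toOpenAIRequest, toAnthropicRequest, and toOllamaRequest helpers are hypothetical names, and the model IDs are whatever you pass in.

```ts
// Hypothetical adapters: reshape one provider-neutral prompt into three request bodies.
// Assumes the { system, user, maxTokens } shape described above.
interface PromptParts {
  system: string;
  user: string;
  maxTokens: number;
}

// OpenAI Chat Completions: the system prompt travels as the first message.
function toOpenAIRequest(p: PromptParts, model: string) {
  return {
    model,
    max_tokens: p.maxTokens,
    messages: [
      { role: 'system', content: p.system },
      { role: 'user', content: p.user },
    ],
  };
}

// Anthropic Messages: the system prompt is a top-level field, not a message.
function toAnthropicRequest(p: PromptParts, model: string) {
  return {
    model,
    max_tokens: p.maxTokens,
    system: p.system,
    messages: [{ role: 'user', content: p.user }],
  };
}

// Ollama /api/chat: the token limit goes in options.num_predict.
function toOllamaRequest(p: PromptParts, model: string) {
  return {
    model,
    stream: false,
    options: { num_predict: p.maxTokens },
    messages: [
      { role: 'system', content: p.system },
      { role: 'user', content: p.user },
    ],
  };
}
```

The SEO logic never sees these shapes; only the adapter knows where the system prompt goes (a message for OpenAI and Ollama, a top-level field for Anthropic).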

Q: What happens if LangChain releases a dedicated SEO module?

That's possible, but the structural problem would remain: LangChain's prompt builders would still be coupled to LangChain's chain abstractions. As long as Power SEO AI's output stays provider-agnostic and SEO-validated by default, it will remain the stronger of the three choices for SEO tasks.

Q: Is LangChain JS still worth learning in 2026?

Yes, for the right use cases. LangChain JS 1.0 (released October 2025) stabilized the API significantly, and LangGraph is genuinely excellent for stateful agent workflows. If RAG pipelines or multi-agent orchestration are on your roadmap, LangChain is worth knowing. For straight-line SEO generation tasks, it's overkill.

Q: How does Power SEO AI handle SERP feature eligibility without an LLM?

The analyzeSerpEligibility() function is a deterministic rule-based analyzer. It checks your page's content structure (heading patterns, step counts, question density) and schema markup (FAQPage, HowTo, Product, Article types) against known SERP feature eligibility criteria. No LLM call, no API cost, instant execution. It returns the same structured SerpFeaturePrediction[] format that the LLM-powered prediction returns, making them interchangeable in your pipeline.
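To illustrate what "deterministic rule-based" means in practice, the sketch below applies a few simplified rules to a page's schema types and content structure, then uses them as a CI gate. PageSignals, checkEligibility, assertEligible, and the thresholds are all hypothetical; they are not analyzeSerpEligibility()'s real signature or criteria, and Google's actual rich-result requirements are more detailed.

```ts
// Hypothetical, simplified rule-based eligibility check; NOT analyzeSerpEligibility().
type SerpFeature = 'faq' | 'howTo' | 'product' | 'article';

interface PageSignals {
  schemaTypes: string[]; // e.g. ['FAQPage', 'Article']
  questionHeadings: number; // headings phrased as questions
  orderedSteps: number; // items in step-by-step lists
  hasPrice: boolean;
}

interface Prediction {
  feature: SerpFeature;
  eligible: boolean;
  rule: string;
}

function checkEligibility(page: PageSignals): Prediction[] {
  const has = (type: string) => page.schemaTypes.includes(type);
  return [
    { feature: 'faq', eligible: has('FAQPage') && page.questionHeadings >= 2,
      rule: 'FAQPage schema + at least 2 question headings (assumed threshold)' },
    { feature: 'howTo', eligible: has('HowTo') && page.orderedSteps >= 2,
      rule: 'HowTo schema + at least 2 ordered steps (assumed threshold)' },
    { feature: 'product', eligible: has('Product') && page.hasPrice,
      rule: 'Product schema with a price' },
    { feature: 'article', eligible: has('Article'), rule: 'Article schema' },
  ];
}

// CI gate: throw (failing the build) if an expected feature is no longer eligible.
function assertEligible(page: PageSignals, expected: SerpFeature[]): void {
  const predictions = checkEligibility(page);
  const missing = expected.filter(
    (f) => !predictions.some((p) => p.feature === f && p.eligible),
  );
  if (missing.length > 0) {
    throw new Error(`SERP eligibility regression: ${missing.join(', ')}`);
  }
}
```

Because the same input always yields the same predictions, a check like this can run on every pull request with no API key and no per-run cost.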

Q: Does the Vercel AI SDK require deploying on Vercel's infrastructure?

No. The SDK itself is open source and works anywhere Node.js runs. However, the tight integration with Vercel hosting (automatic deploys, AI Gateway, usage dashboards) means most teams using the SDK end up on Vercel's infrastructure. The SDK is free; the hosting is where costs appear. If you're self-hosting or using a different cloud, the SDK still works, but you lose the seamless deployment experience that makes it appealing.
